How to Automatically Scrape LinkedIn Posts Using Make.com (Step-by-Step Guide)

Learn the easiest and most affordable way to automatically scrape LinkedIn posts using make.com and Apify. This step-by-step guide shows you how to collect LinkedIn posts into Google Sheets for competitor analysis, portfolio tracking, and more.

In this guide, we'll learn how to set up an automated system to scrape the latest LinkedIn posts from a list of profiles and save them to a spreadsheet using Make.com, Apify, and Google Sheets. This process can help you track updates from competitors, portfolio companies, or any LinkedIn profiles you want to monitor. You will see how to connect these tools and automate the collection and organization of LinkedIn post data.

Let's get started.

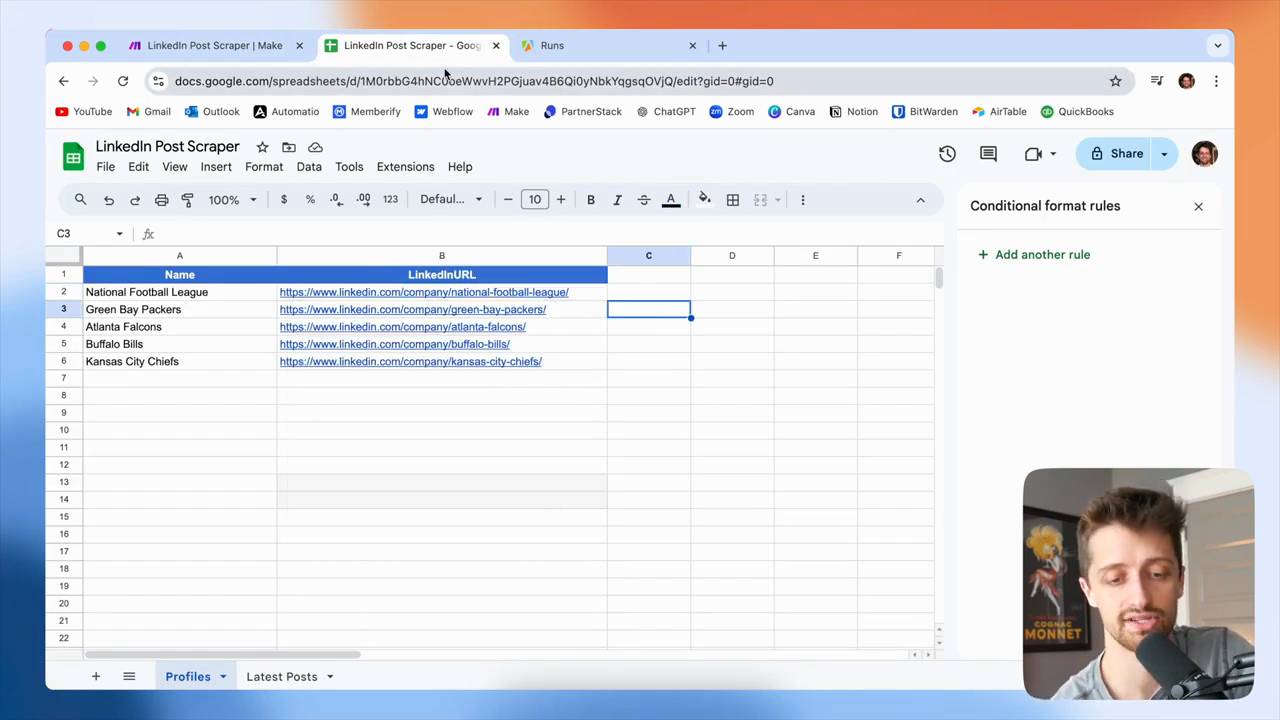

Hello, everyone. Today, I will show you the best and likely cheapest way to automatically scrape LinkedIn posts using make.com. Here we go. Today, we will build a system that takes a list of LinkedIn URLs and scrapes each profile for their latest posts. All collected posts will be added to a spreadsheet.

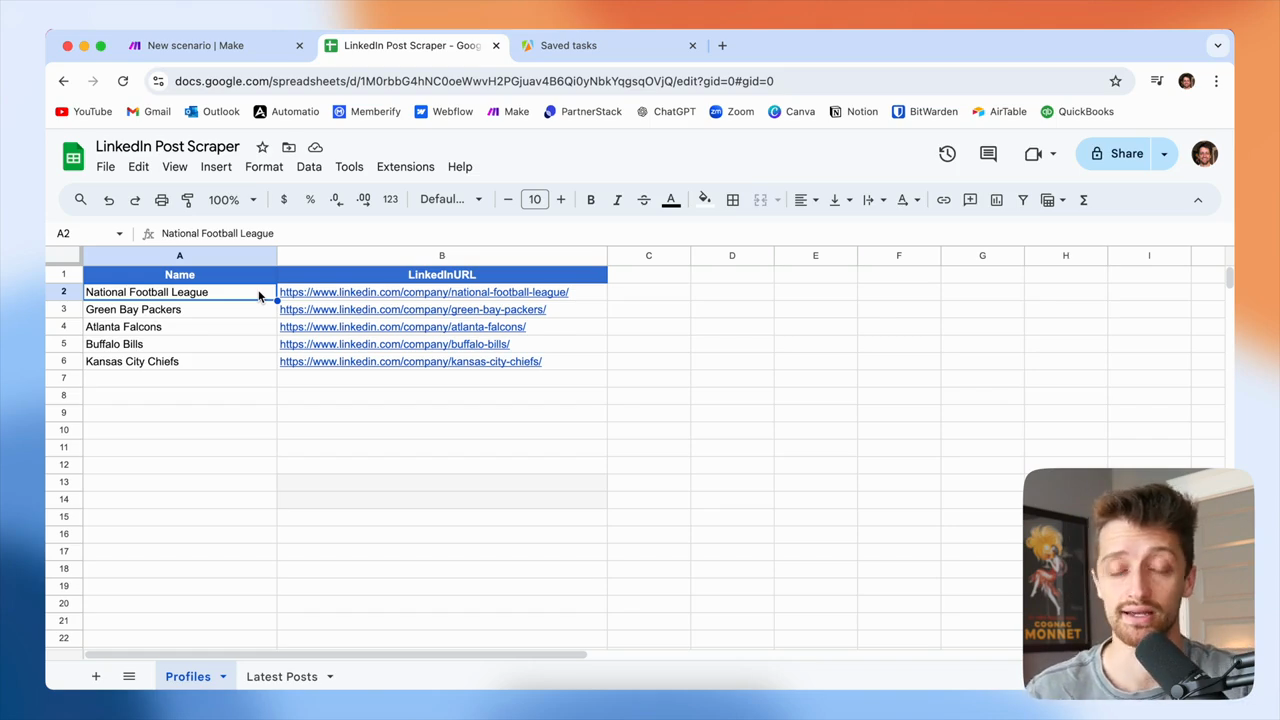

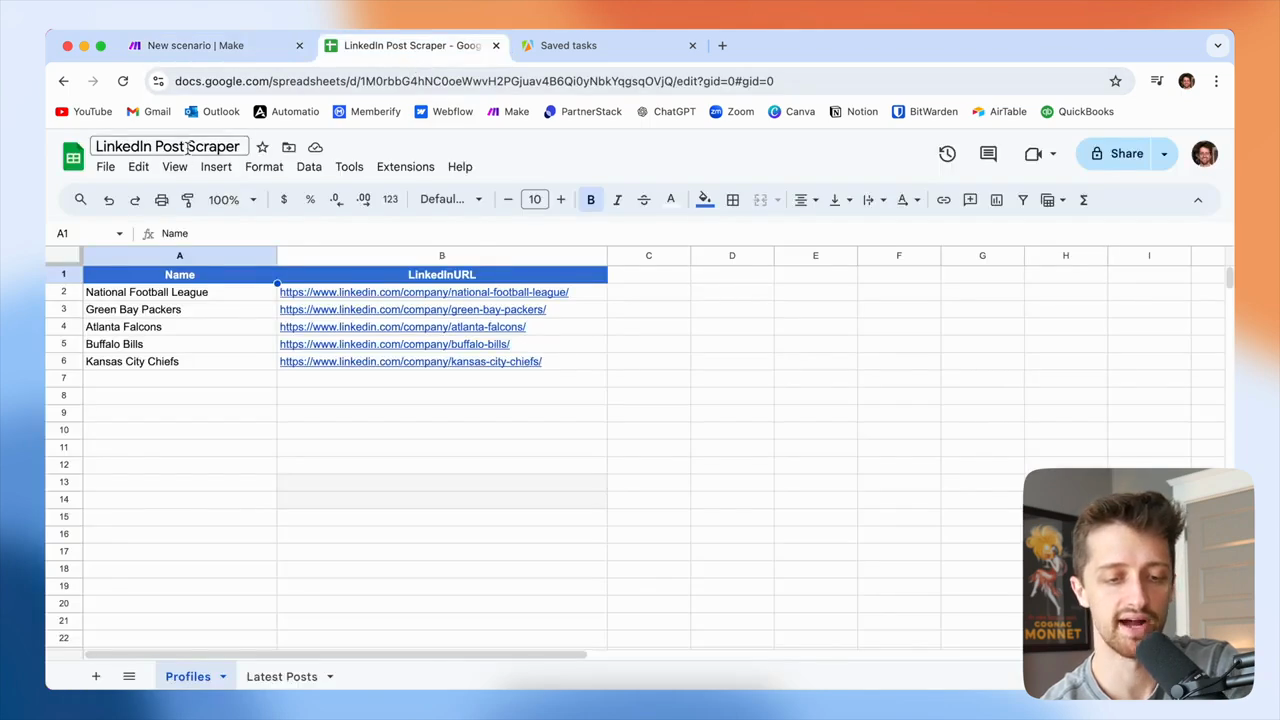

I will use Google Sheets in this guide, but you can use Airtable or any system you prefer. You can see how a system like this could be very valuable to you in several ways.

In this example, I will pretend to be an NFL fan who wants to keep up with my favorite team's latest LinkedIn posts. The same setup works for business use: if you're in the finance industry and want to monitor 10, 20, 30, or even 100 of your top competitors, you can track what they're doing and posting on LinkedIn.

You can run this automation weekly to collect all their latest posts in a spreadsheet. Then, you can organize and analyze the data as you prefer.

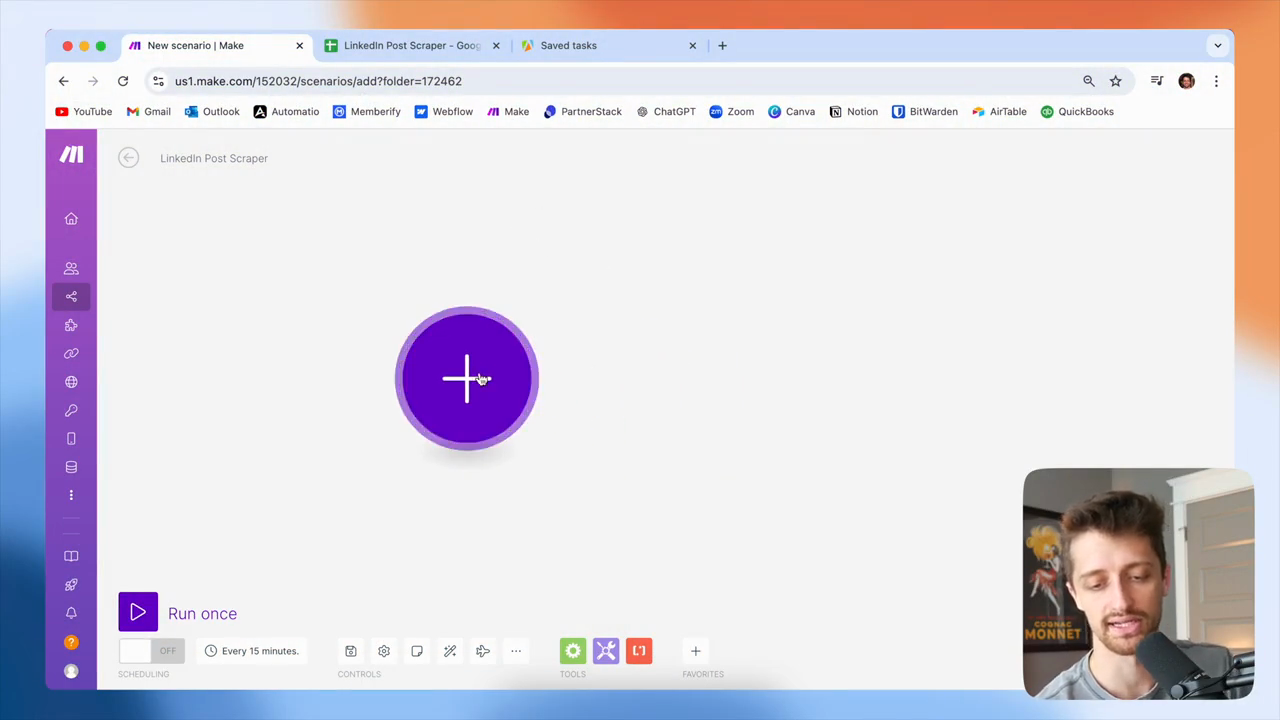

Some companies use systems like this to track their portfolio companies. This helps them monitor what the companies they own are working on and stay updated. There are countless possible use cases here. I will show you a basic example that you can adapt to your business model as needed. Okay? Let's open make.com and start building.

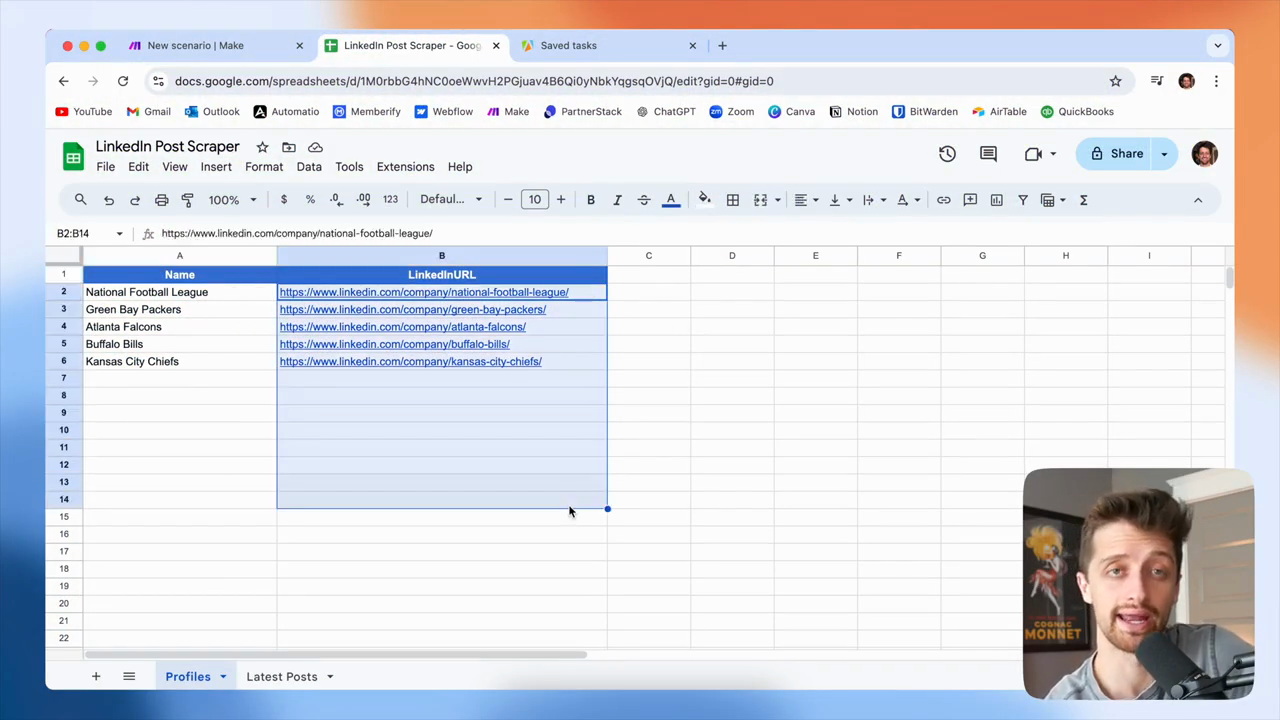

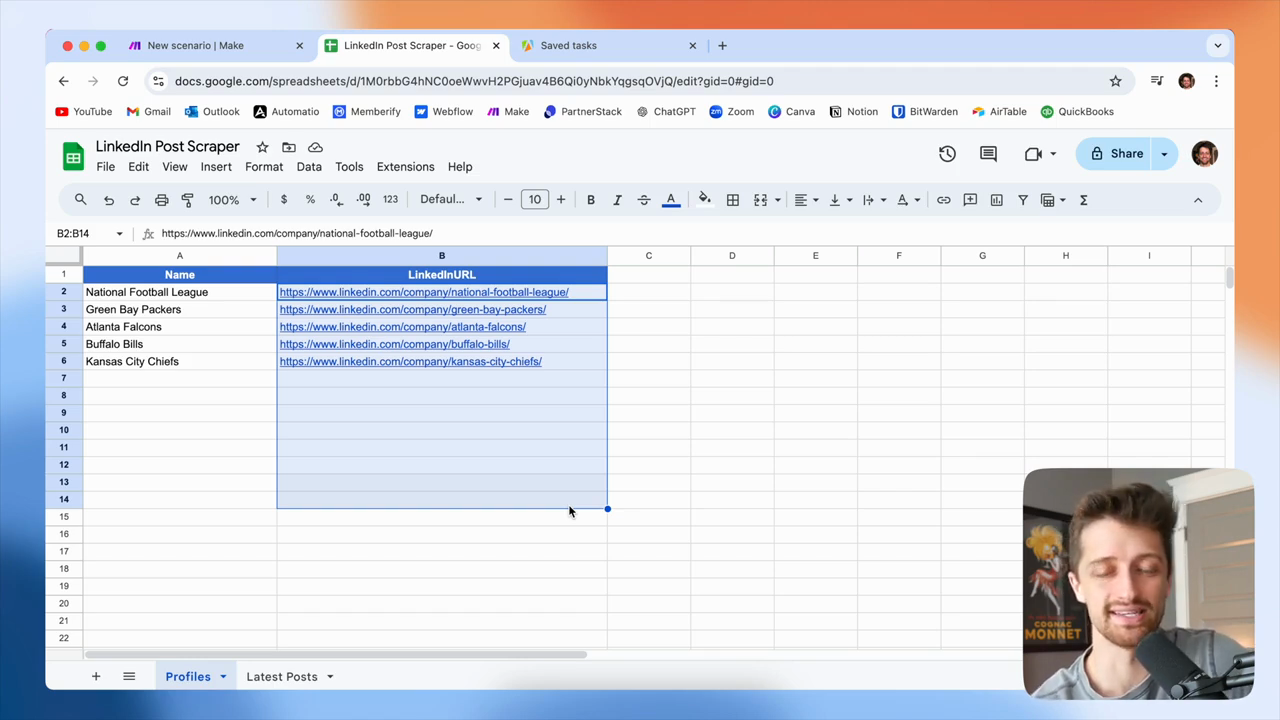

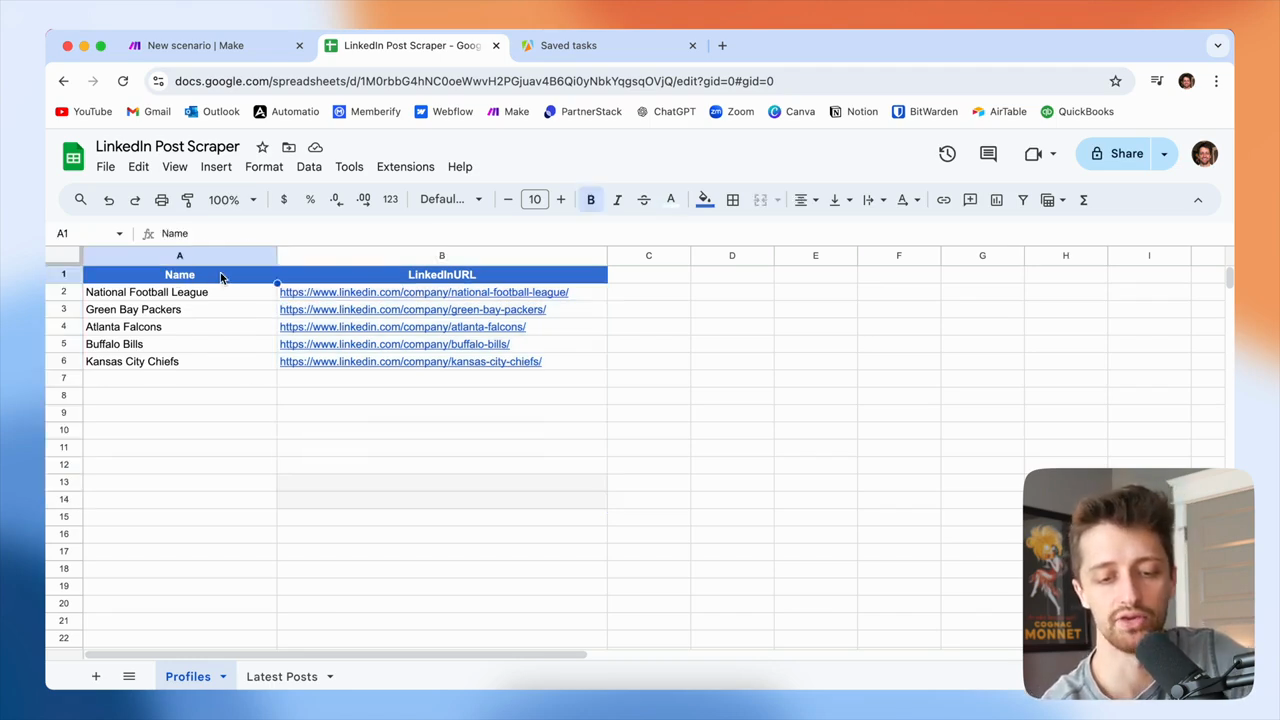

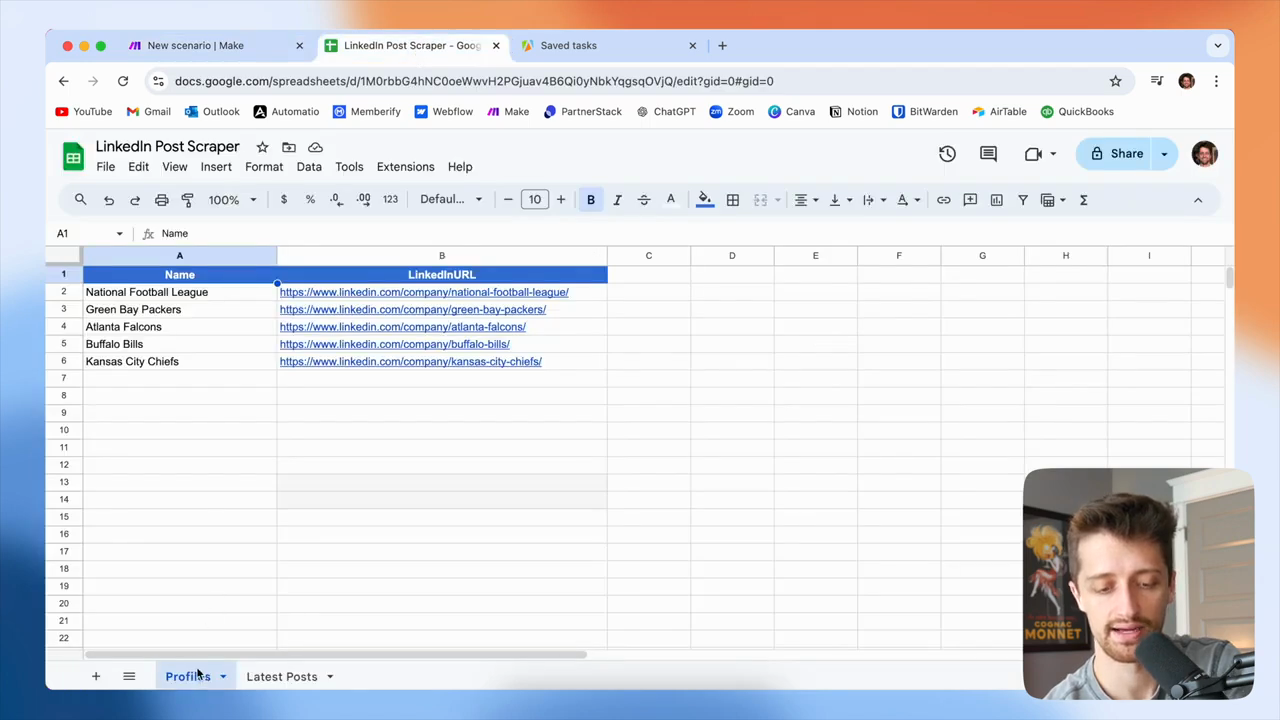

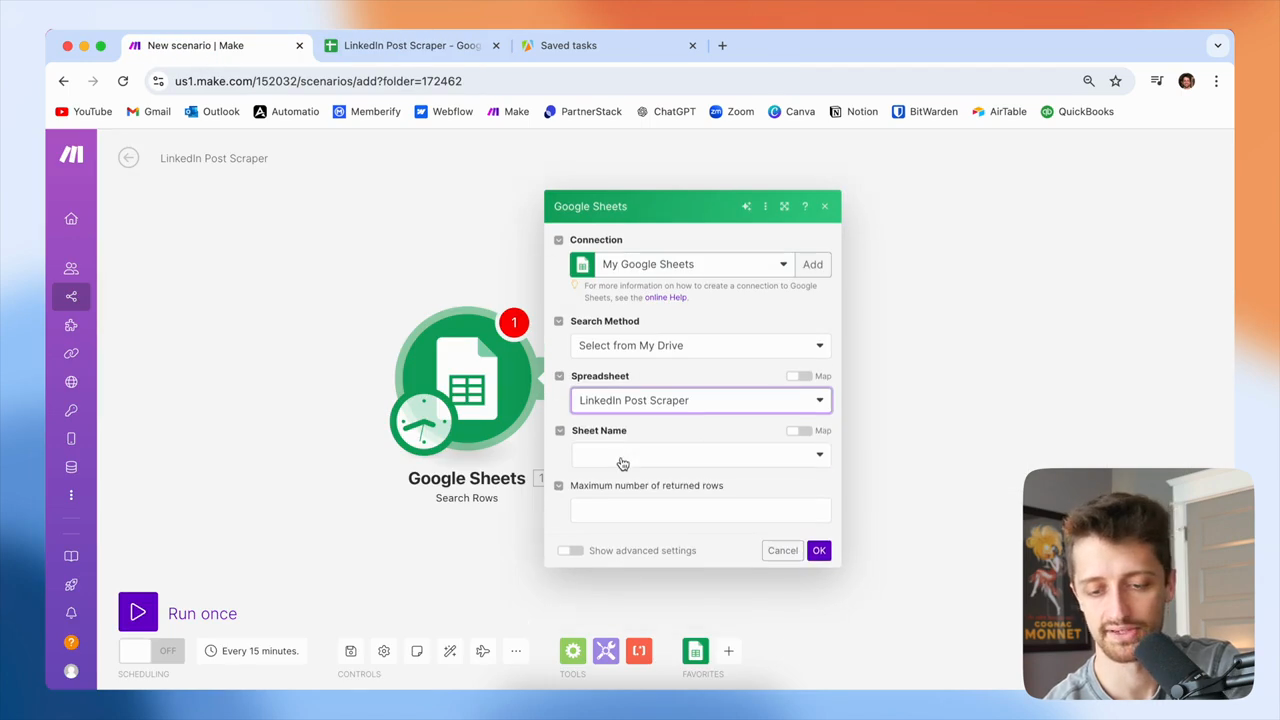

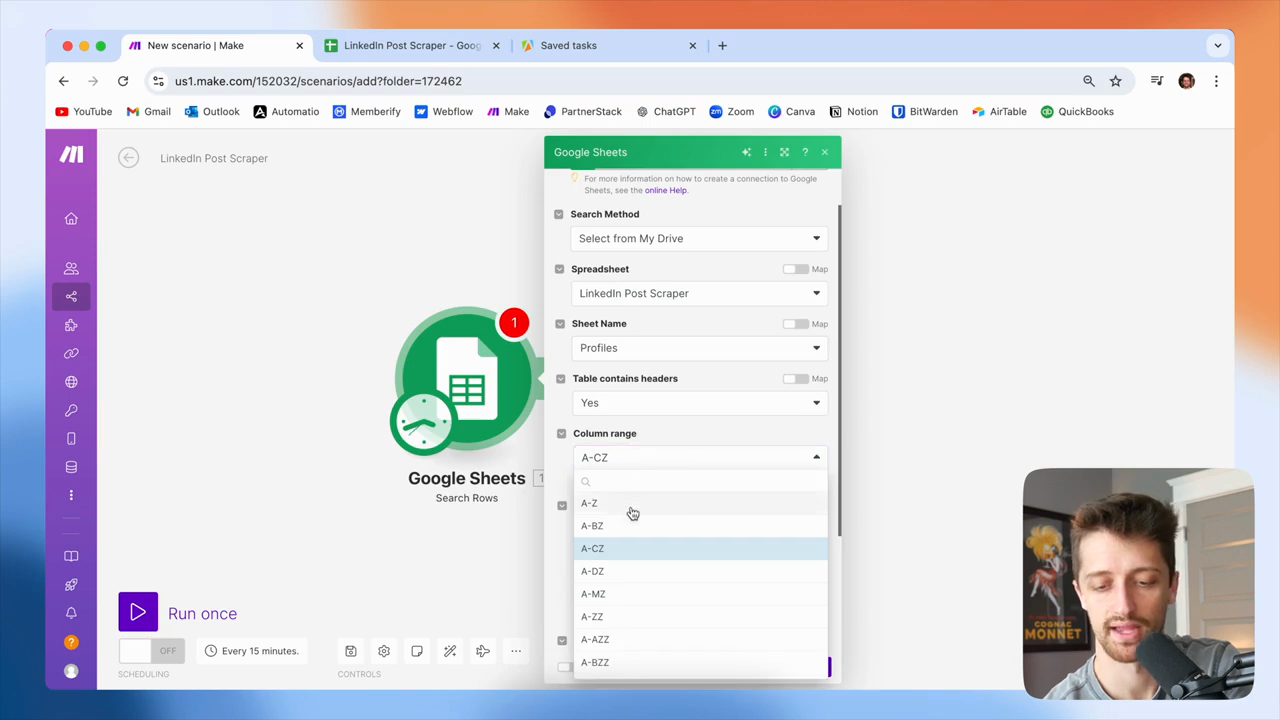

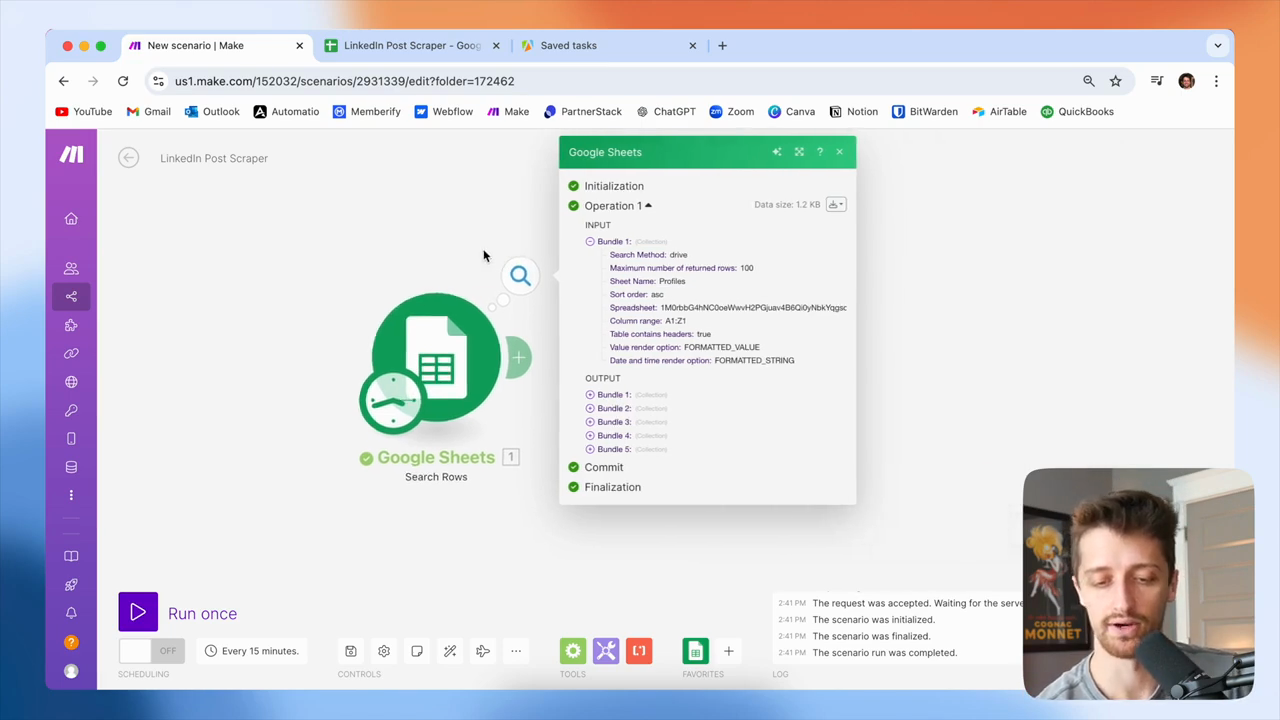

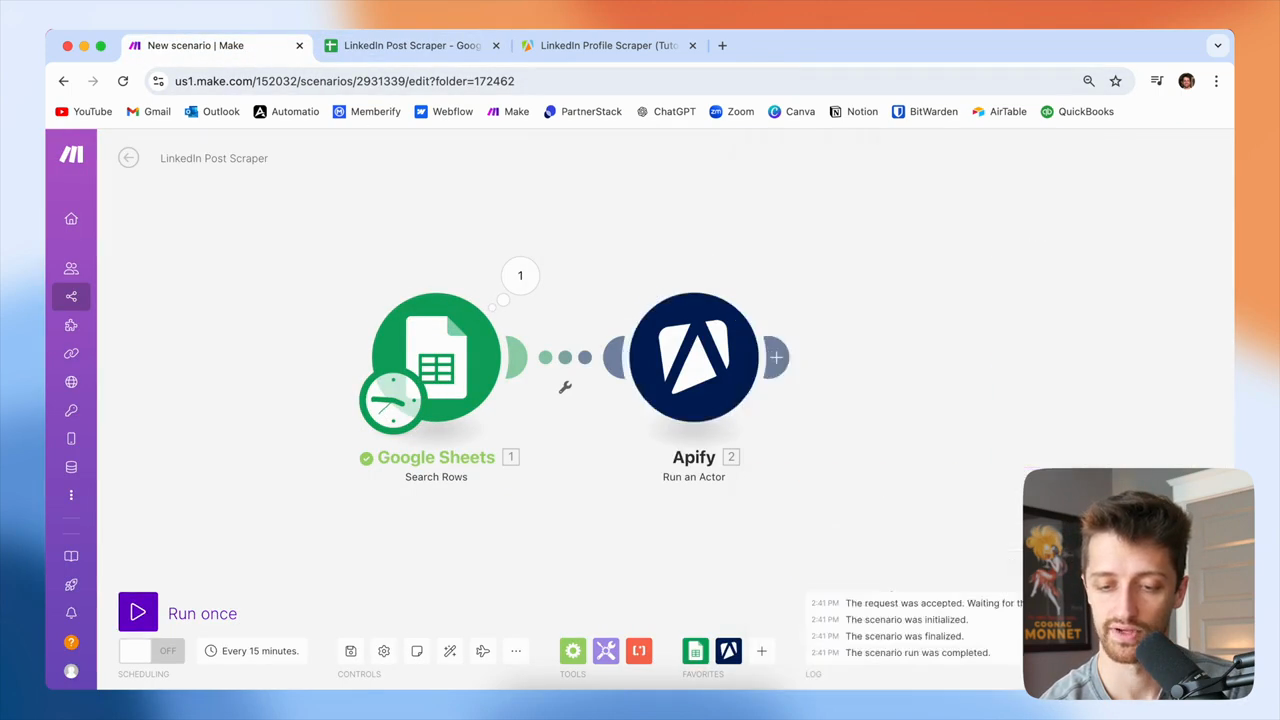

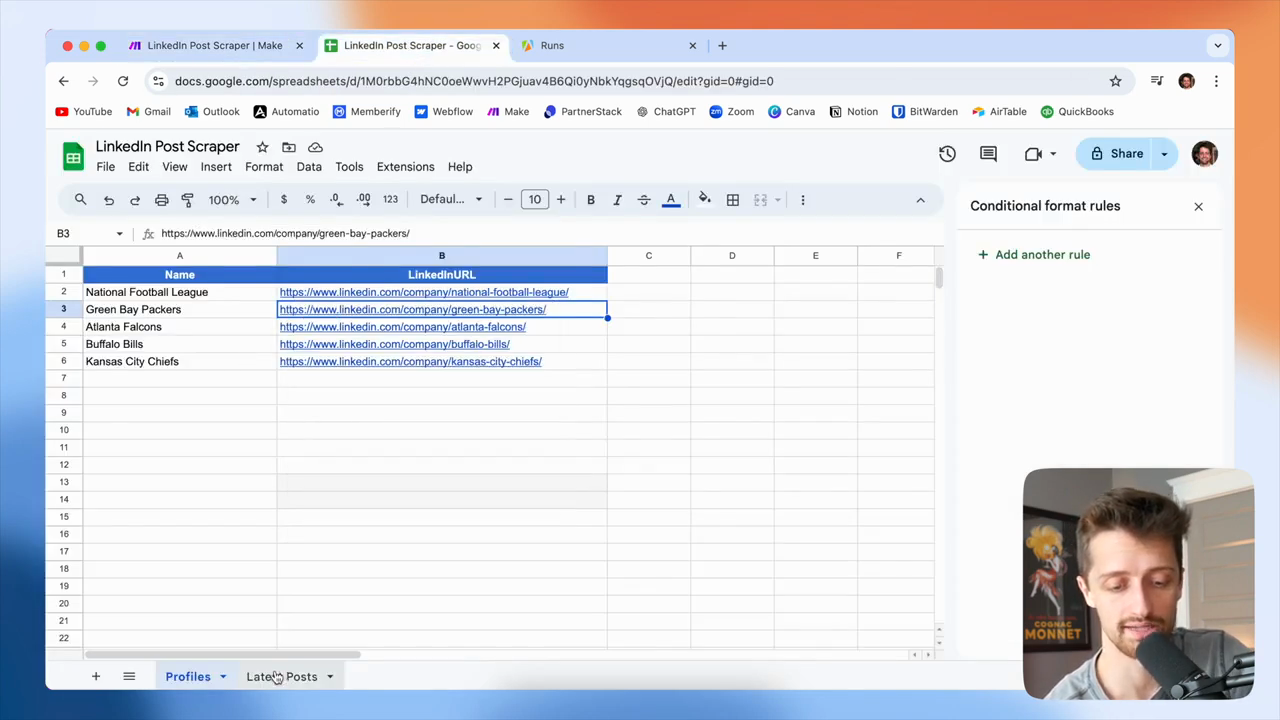

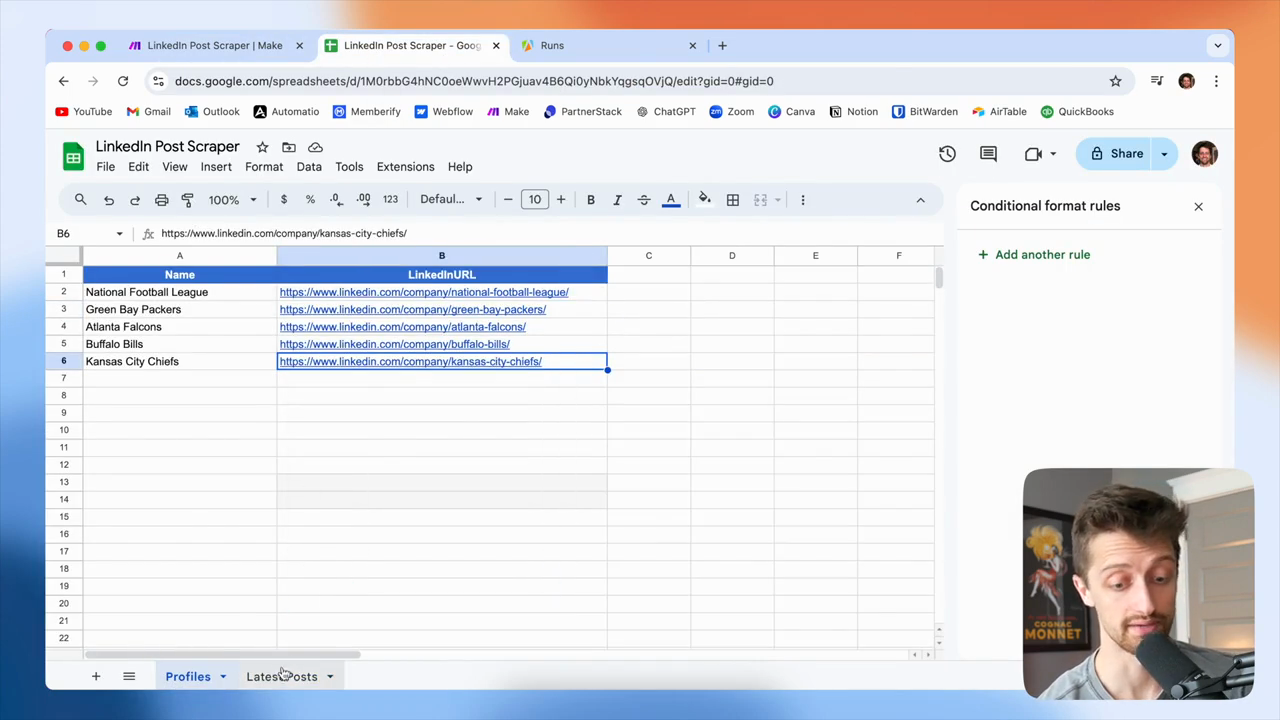

Today, we will be using Google Sheets.

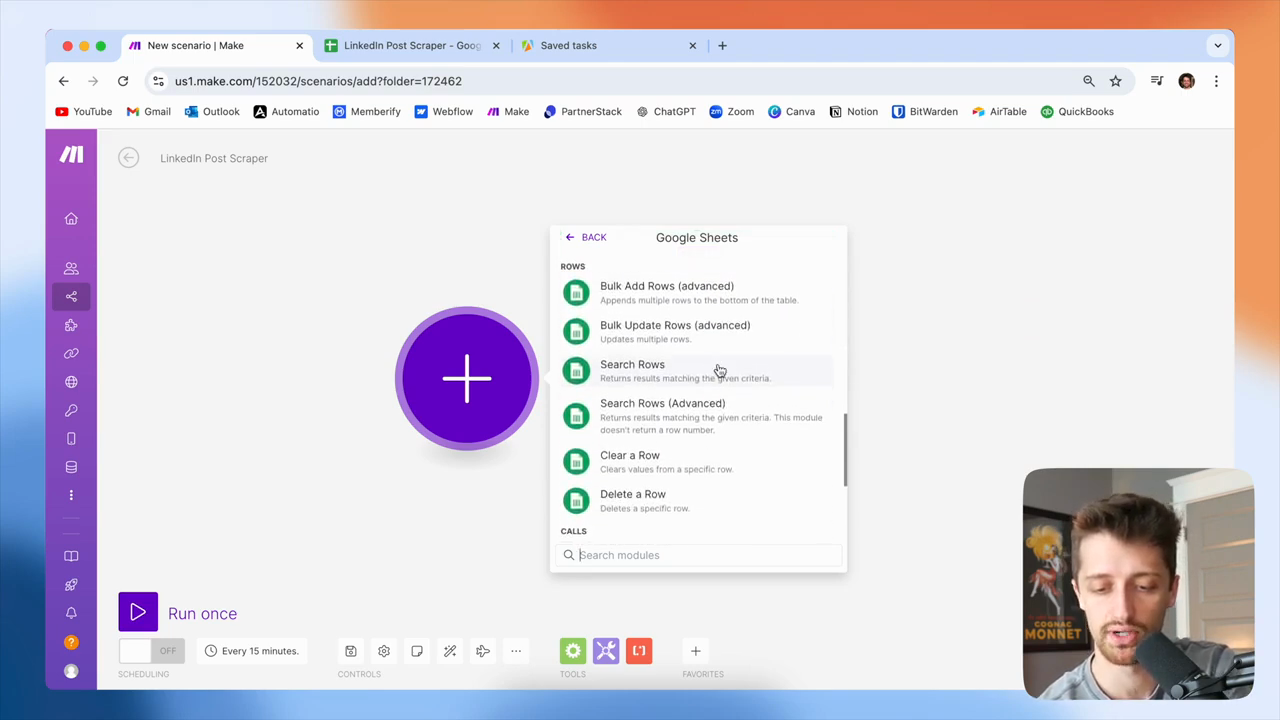

Google Sheets will be our trigger. We are going to search the rows.

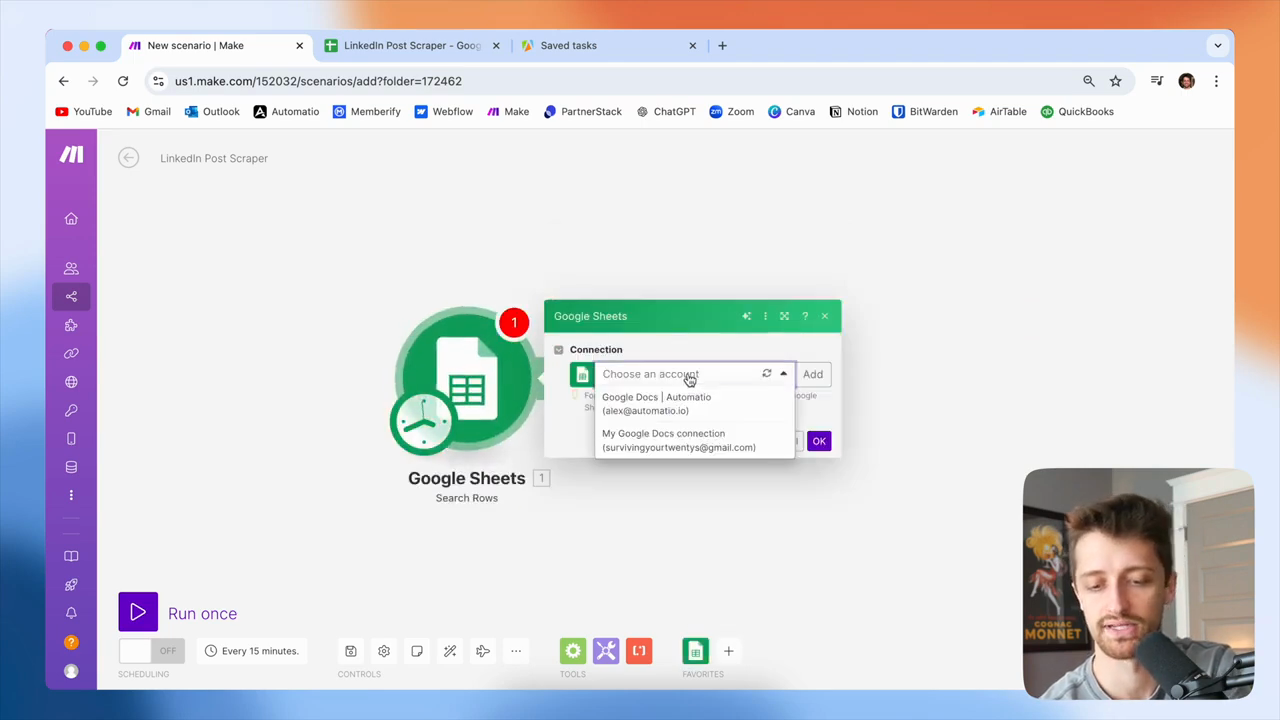

Then I'll link my Google Sheets account.

All right, now I'll search for this unique file—my LinkedIn post scraper file.

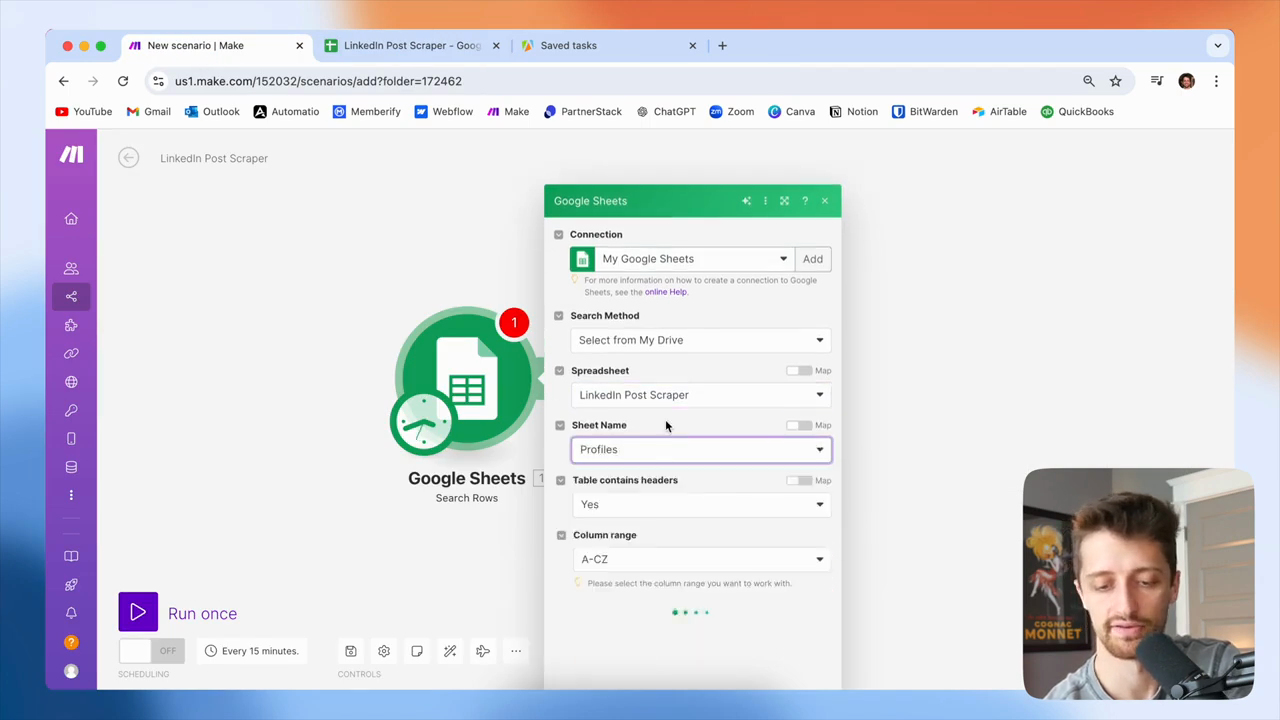

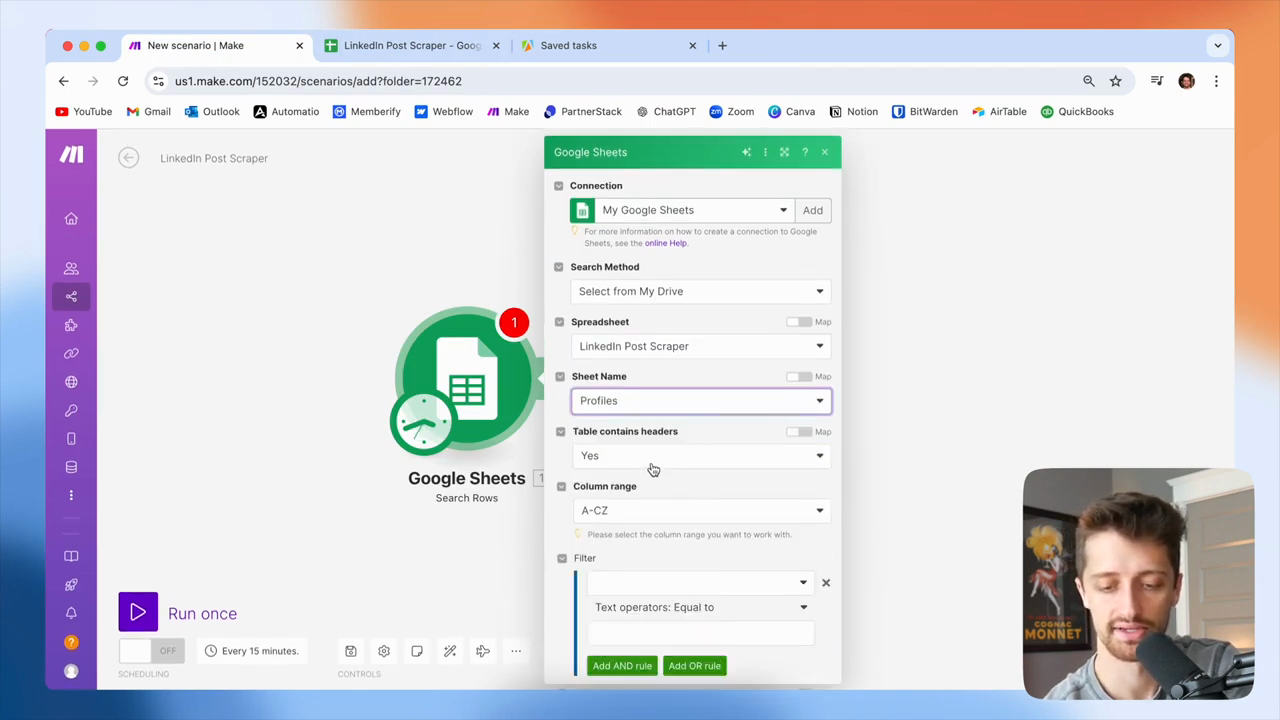

The tab I will use is called Profiles, so I'll find and select that sheet.

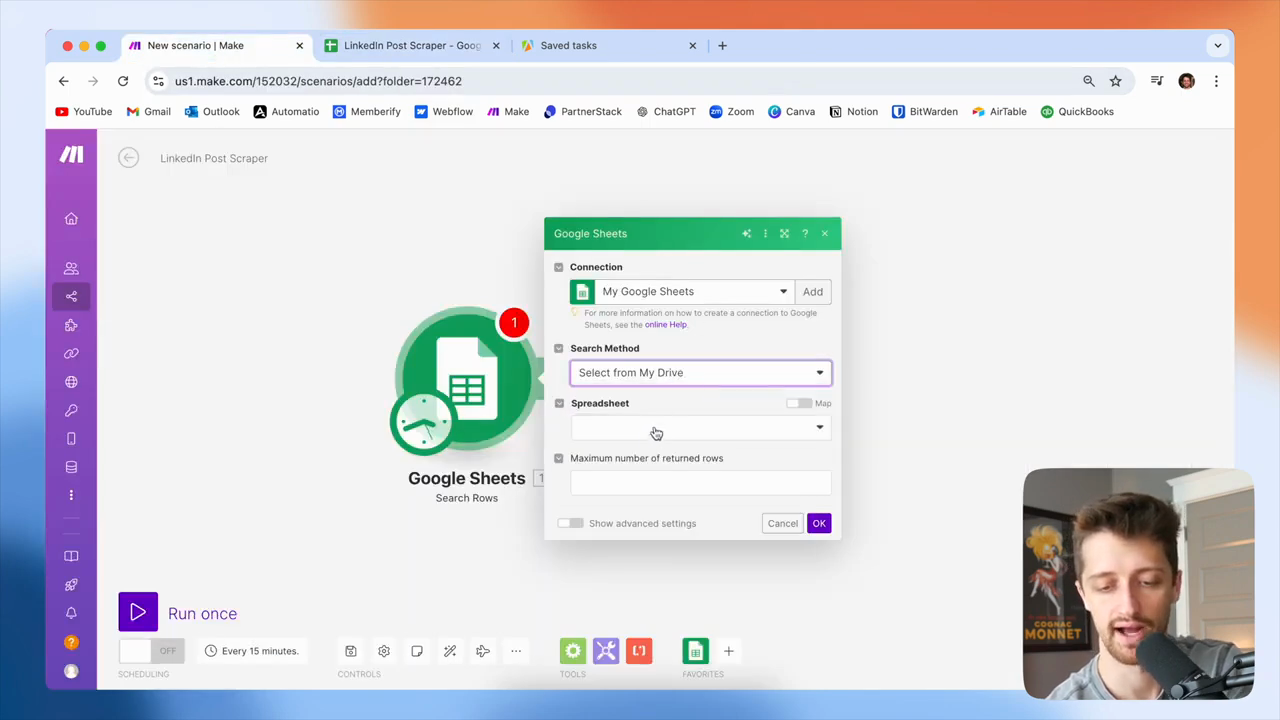

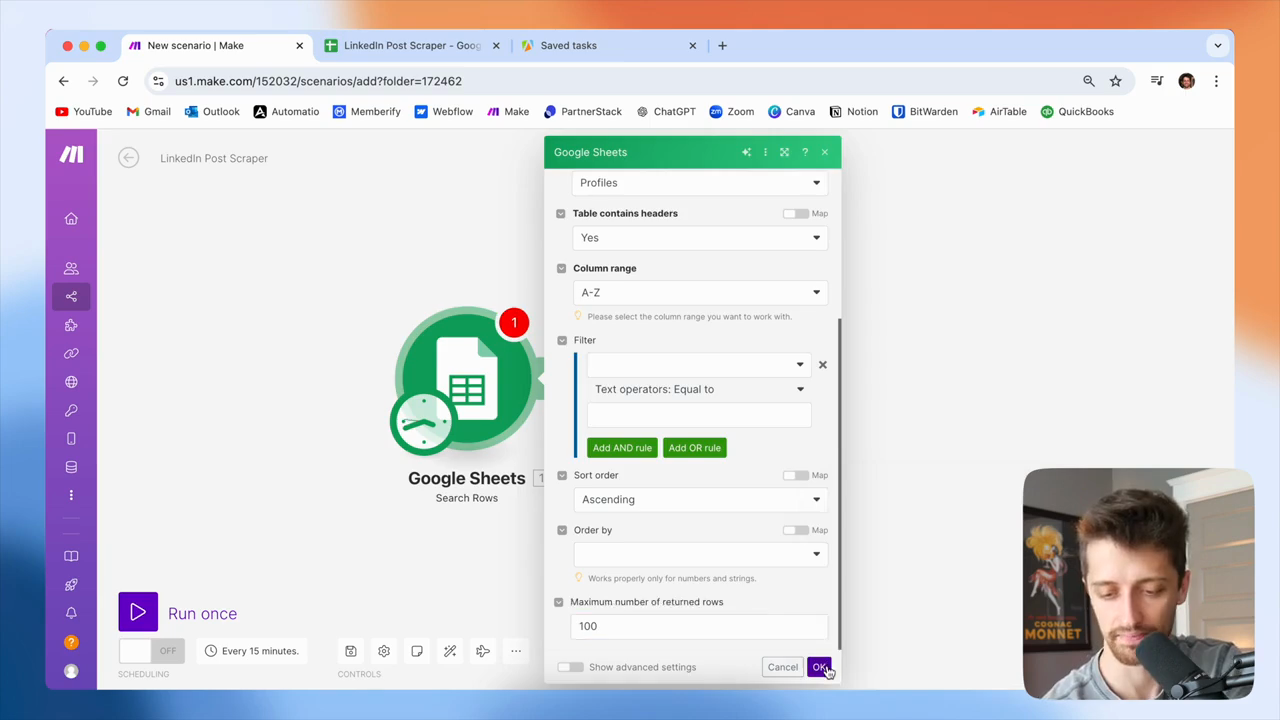

My table has headers. I only need columns A to Z. I will get a maximum of 100 rows.

You can change that to anything you want. Okay, so we'll click OK.

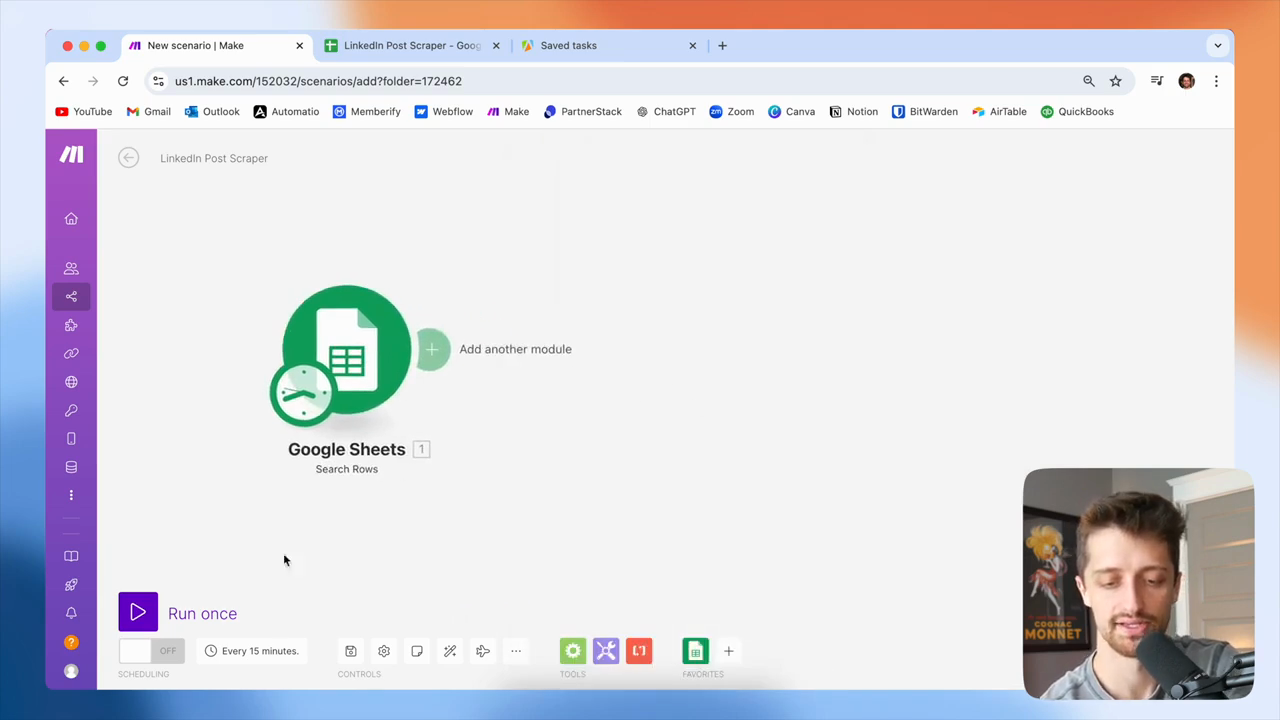

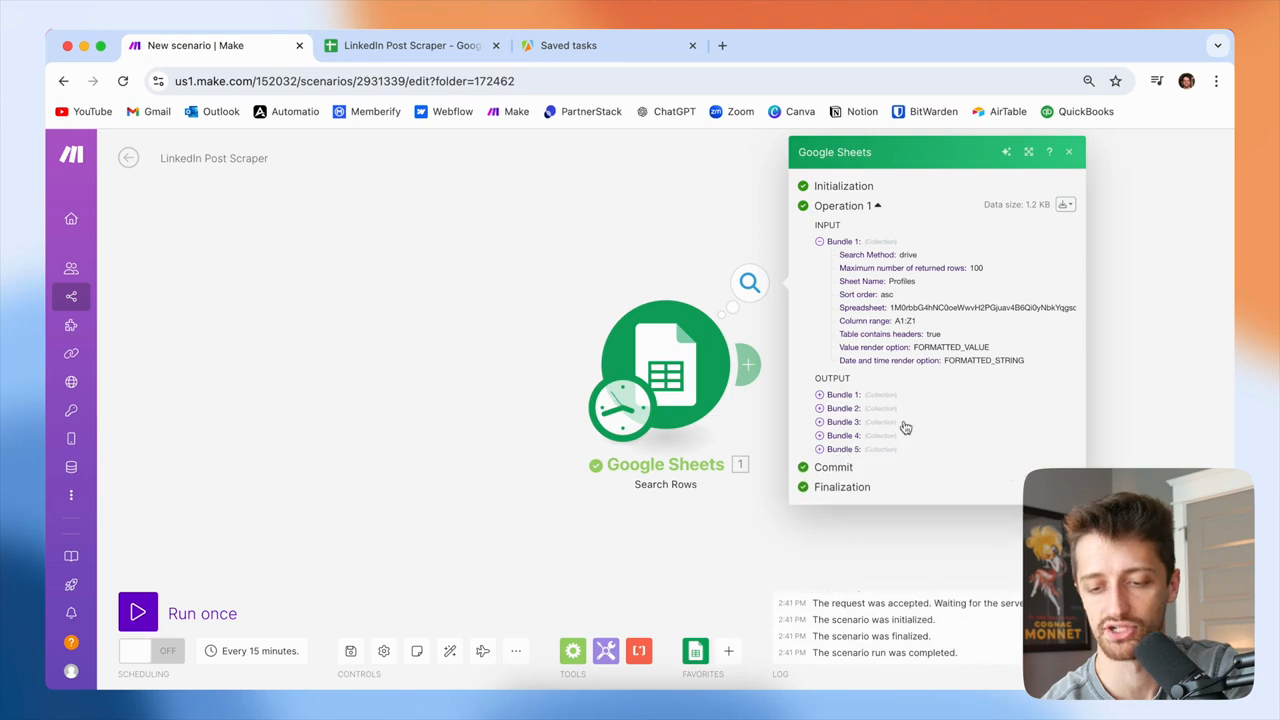

I'll run this once to show you how it reads all the different rows in this sheet into unique bundles.

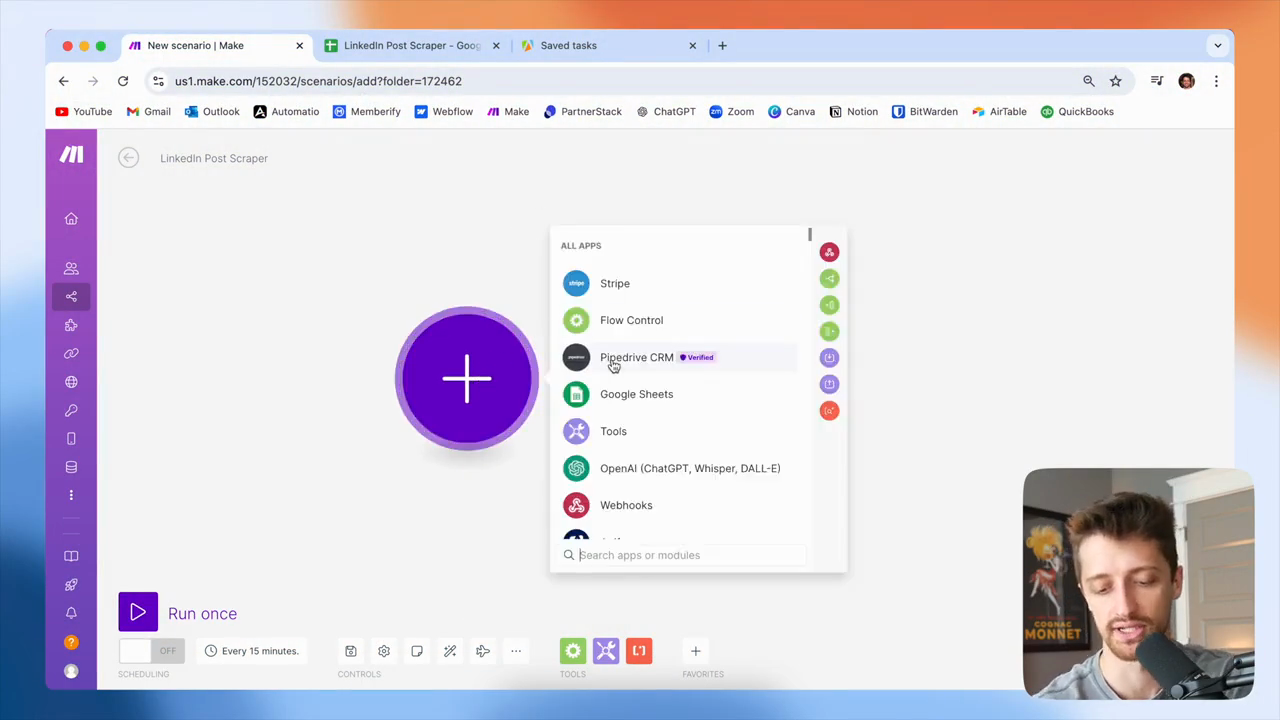

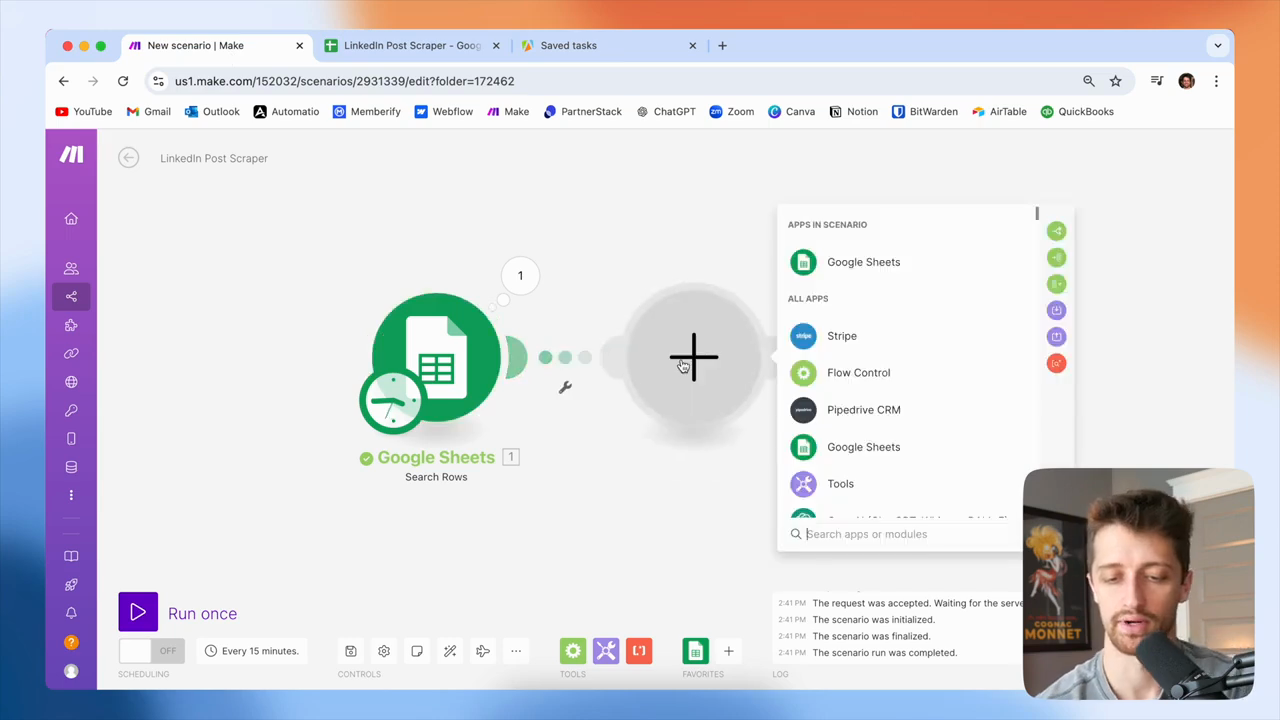

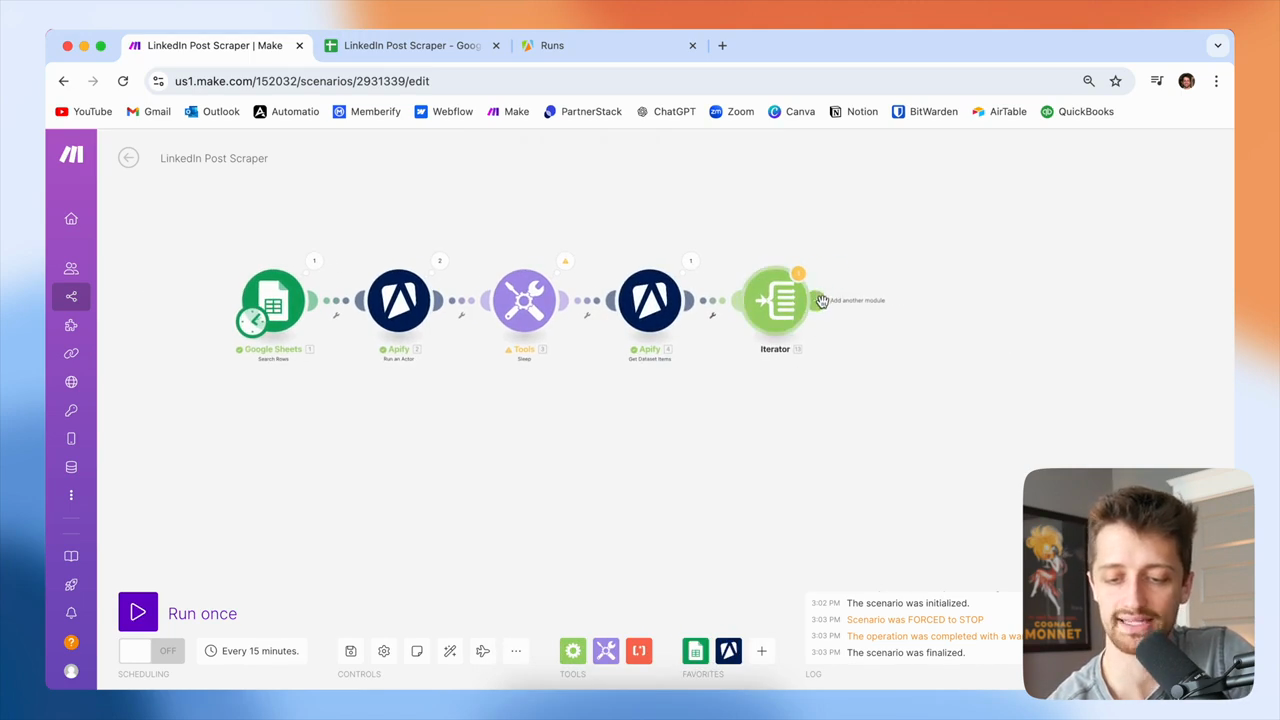

When a module in make.com outputs bundles, we don't need to use an iterator. Every module that comes after the Google Sheets module runs once for each of these bundles automatically. We don't need to do anything special with iterators or array aggregators. Okay, that's great. All right, so how are we going to scrape LinkedIn?
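To picture what "bundles" means here, the following is a minimal Python sketch of the behaviour described above, not how Make is actually implemented. The column names and URLs are placeholder assumptions; use whatever headers your Profiles sheet really has.

```python
from typing import Dict, List


def run_scenario_per_bundle(rows: List[Dict[str, str]]) -> List[str]:
    """Mimic the behaviour described above: later steps run once per bundle."""
    processed = []
    # Make performs this loop implicitly, one bundle (sheet row) at a time.
    for bundle in rows:
        processed.append(f"scrape {bundle['LinkedIn URL']}")
    return processed


# Placeholder rows standing in for the Profiles sheet (hypothetical values).
rows = [
    {"Company": "Example A", "LinkedIn URL": "https://www.linkedin.com/company/example-a/"},
    {"Company": "Example B", "LinkedIn URL": "https://www.linkedin.com/company/example-b/"},
]

steps = run_scenario_per_bundle(rows)
for step in steps:
    print(step)
```

Each row becomes its own pass through the rest of the scenario, which is why no iterator is needed at this point.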

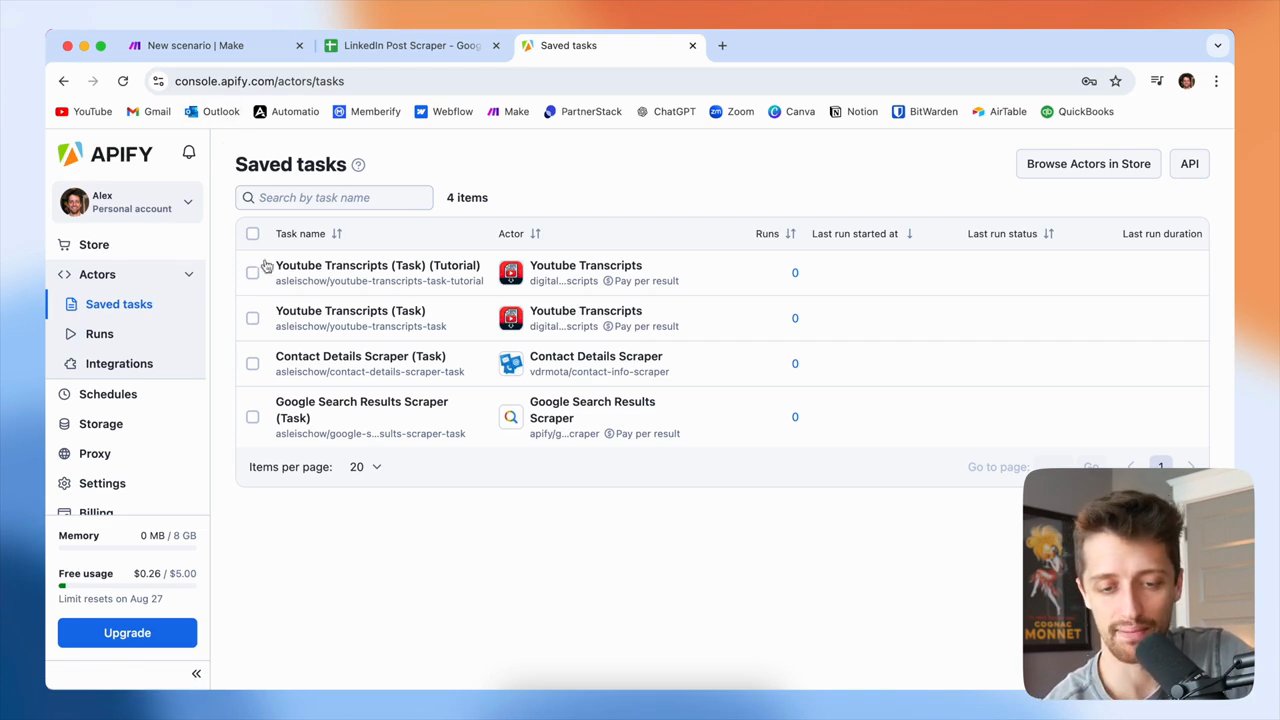

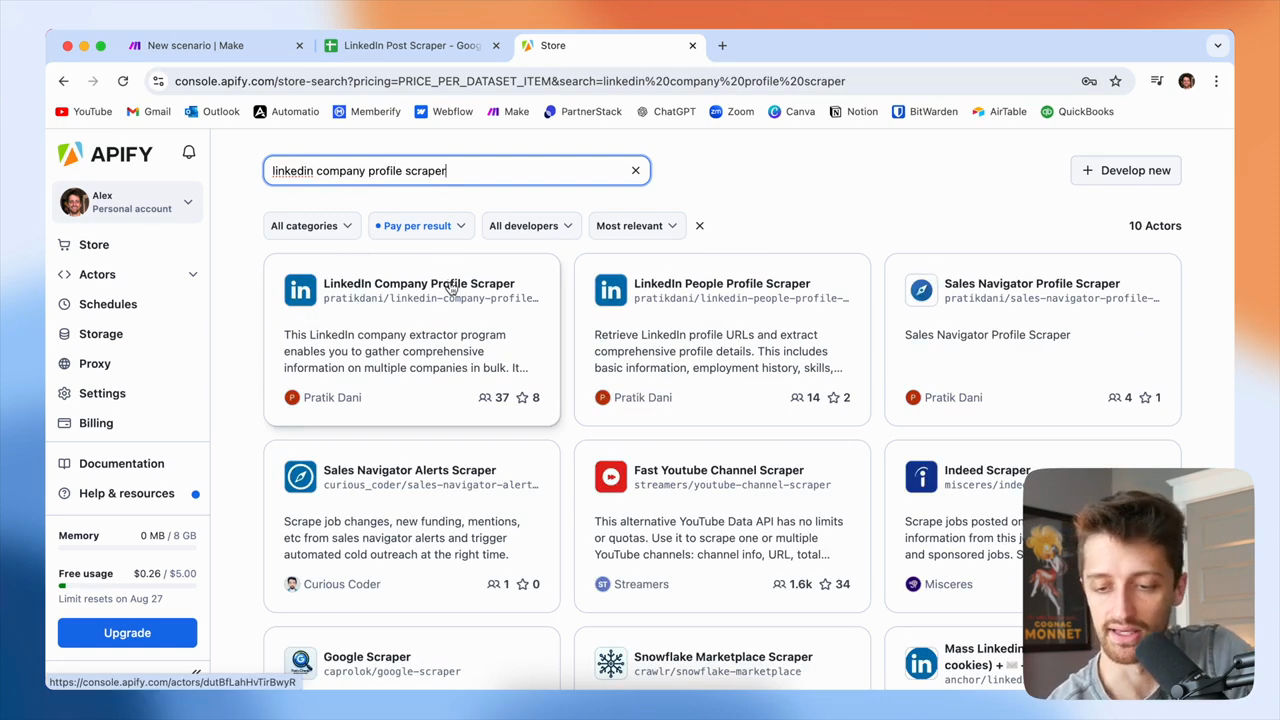

If you have tried to scrape LinkedIn before, you know it can be very technical, expensive, or both. I prefer to use a site called Apify, which offers various bots that can scrape data from different tools or websites.

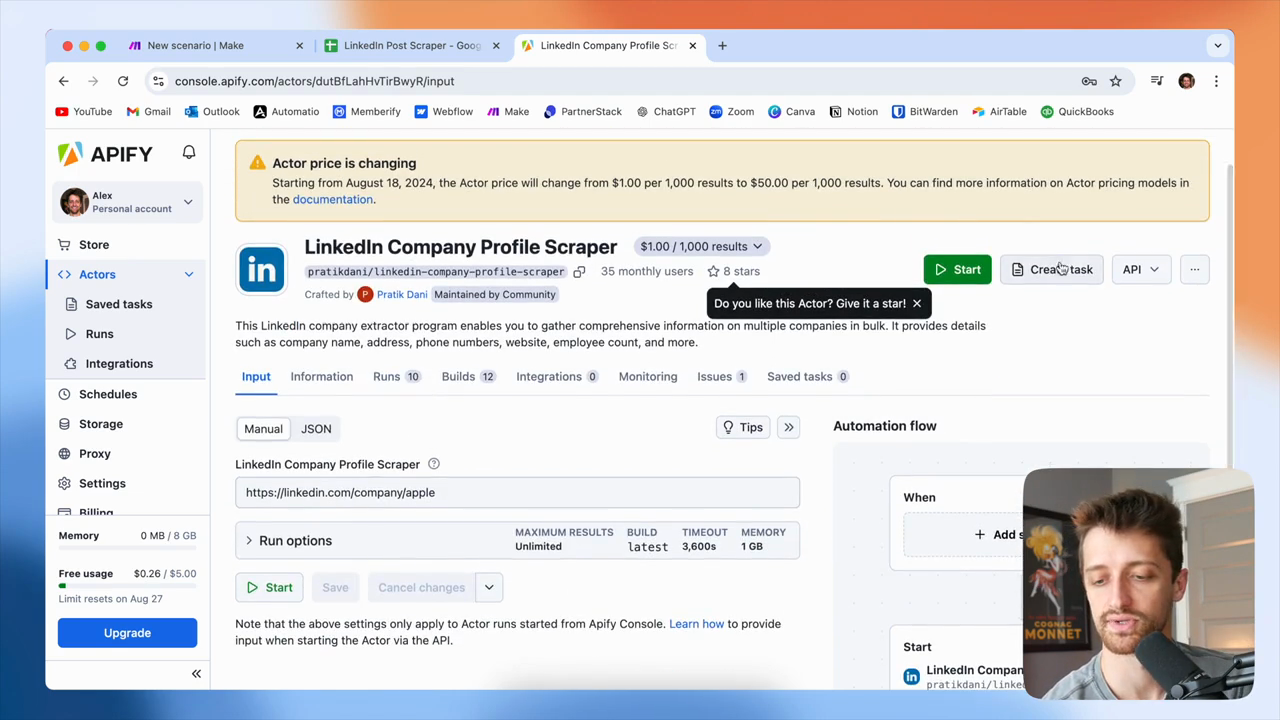

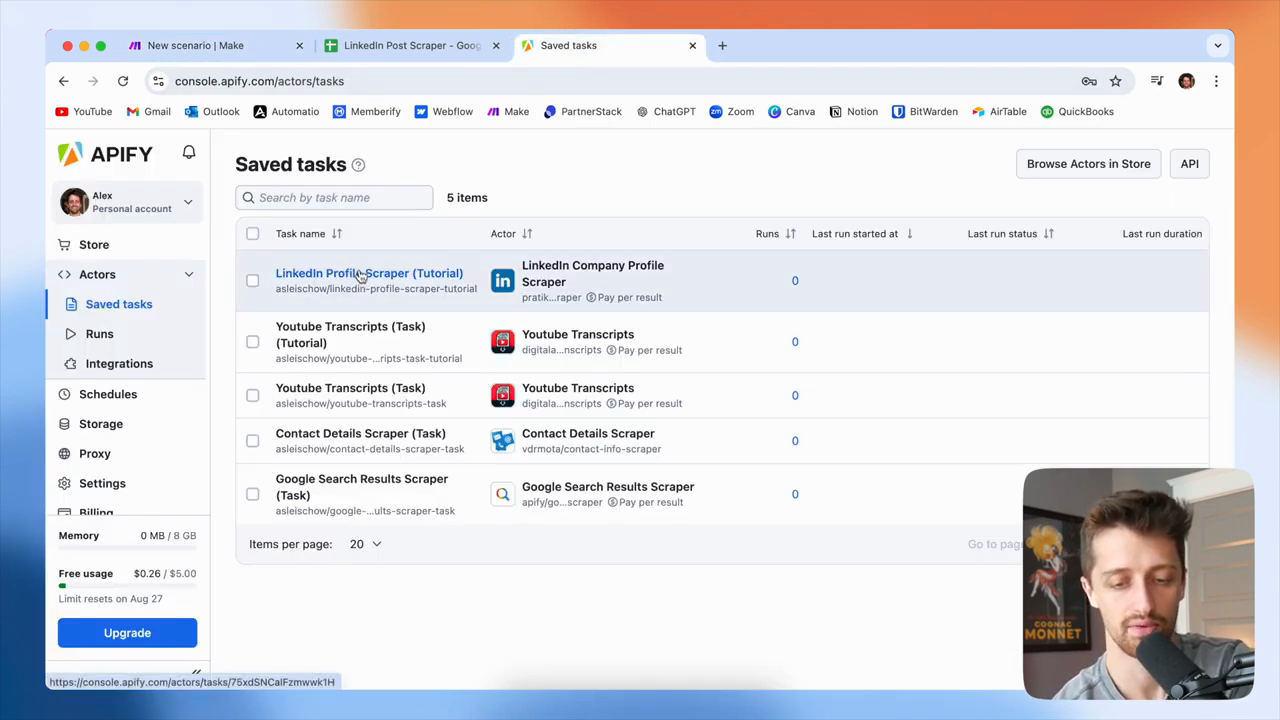

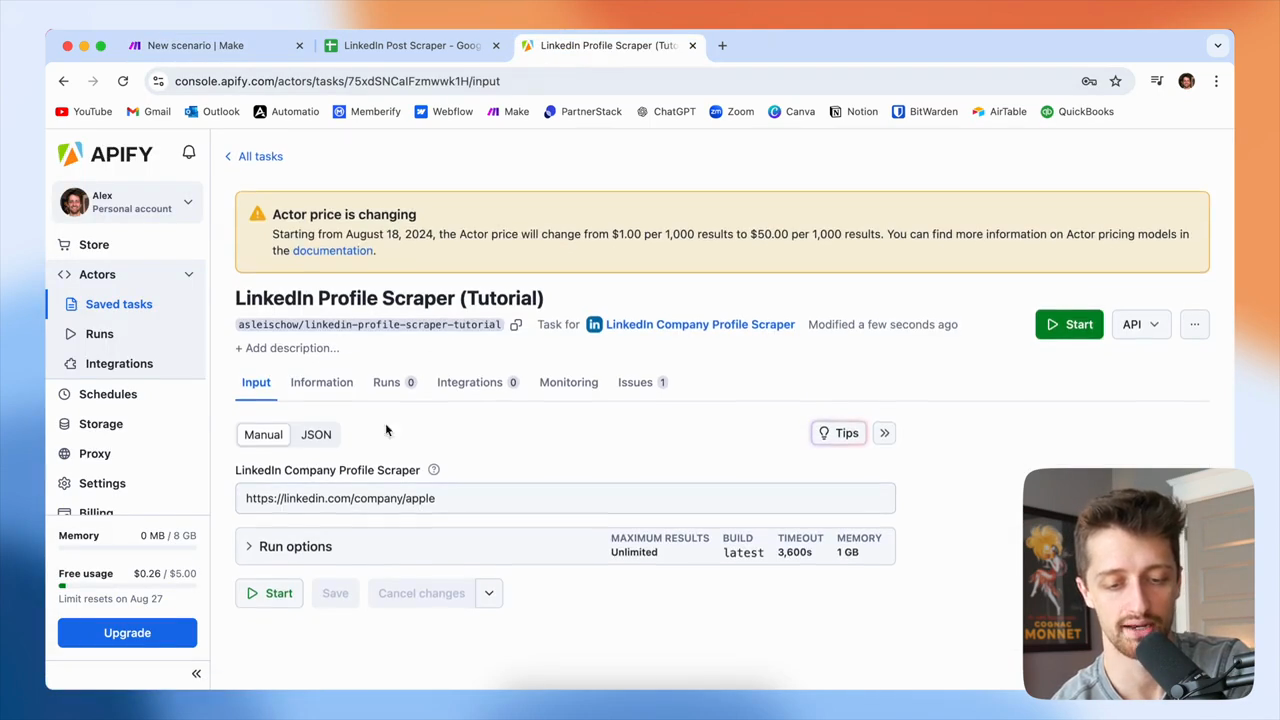

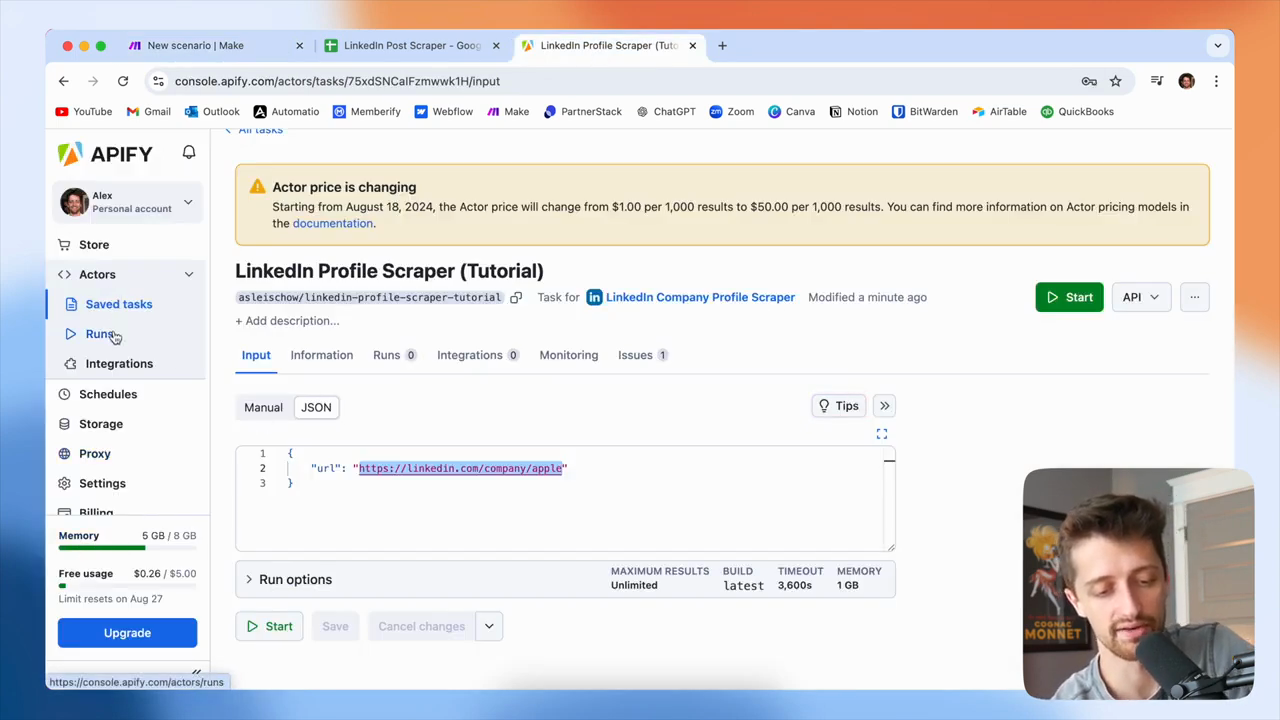

In this video, we will use a bot called LinkedIn Company Profile Scraper. This is the tool we will be working with.

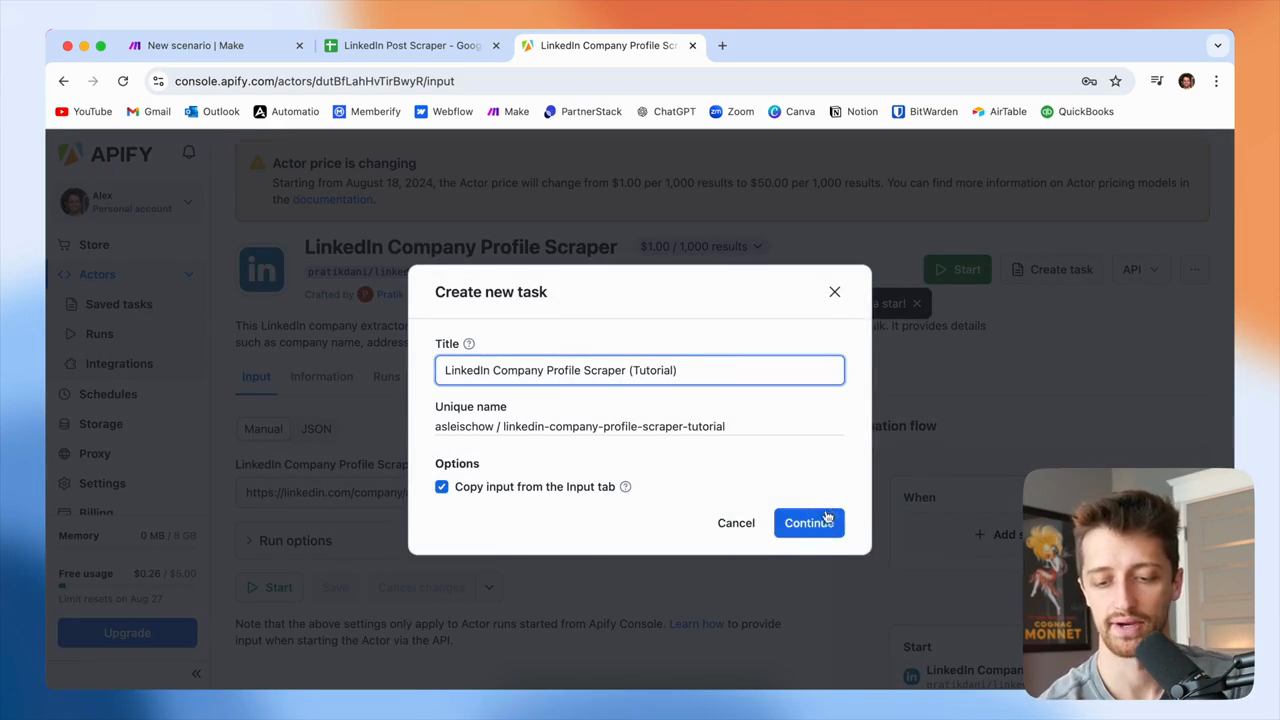

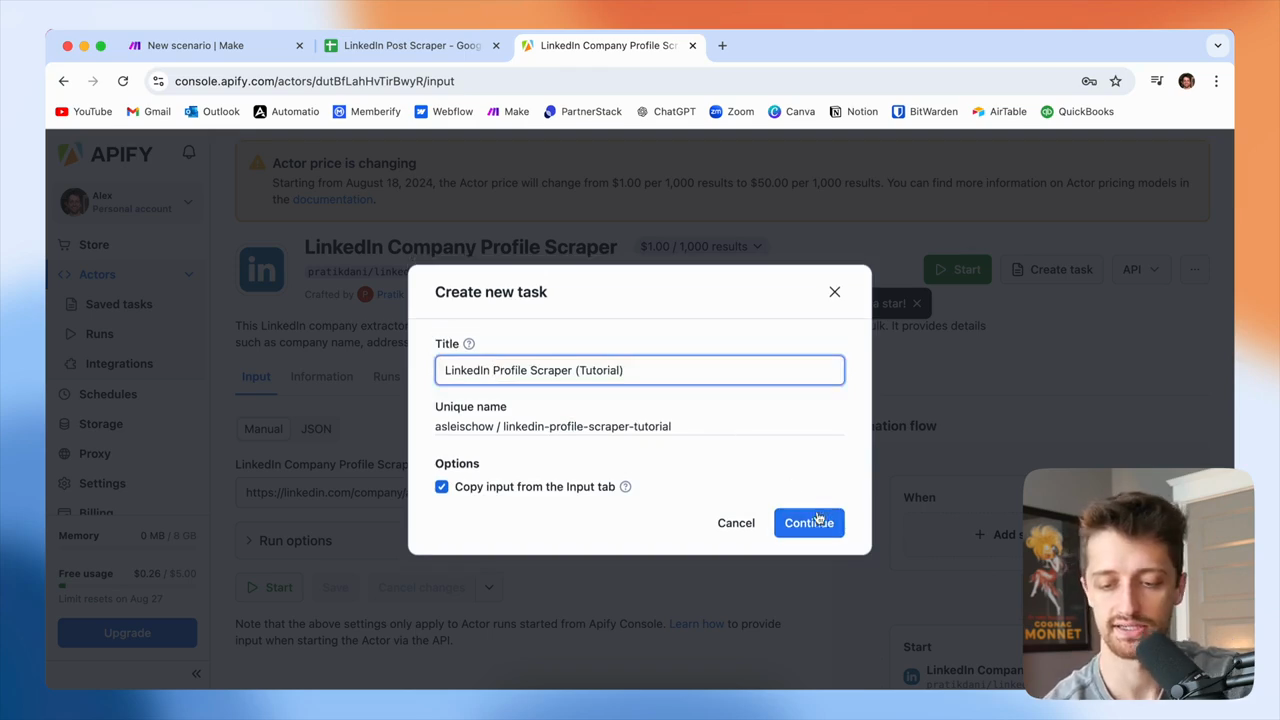

You can see it currently costs one dollar for every thousand results, so it's essentially free. They will raise the price to $50 per thousand results. However, we receive $5 in free credits each month, so unless you process a large number of profiles each month, the free plan should be sufficient. Just remember that you pay per result with this bot.

Next, create a task and give it a name. Click continue, make any necessary changes, then click continue again.
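As a rough check on the pricing figures mentioned above, here is a small Python calculation. It assumes that each scraped post counts as one billable result and that each profile returns 10 posts, as in the example run later in this guide. Both are assumptions, so check the actor's pricing page for how results are actually counted.

```python
def free_results_per_month(credit_usd: float, price_per_1000_usd: float) -> int:
    """How many results a monthly credit covers at a given price per 1,000 results."""
    return int(credit_usd * 1000 // price_per_1000_usd)


POSTS_PER_PROFILE = 10  # assumption: one result per post, 10 posts per profile

current = free_results_per_month(5.0, 1.0)      # $1 per 1,000 results
after_raise = free_results_per_month(5.0, 50.0)  # $50 per 1,000 results

print(current, current // POSTS_PER_PROFILE)
print(after_raise, after_raise // POSTS_PER_PROFILE)
```

Under these assumptions, the $5 credit covers far fewer profile scrapes once the higher price applies, so it is worth re-checking the numbers before scheduling large weekly runs.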

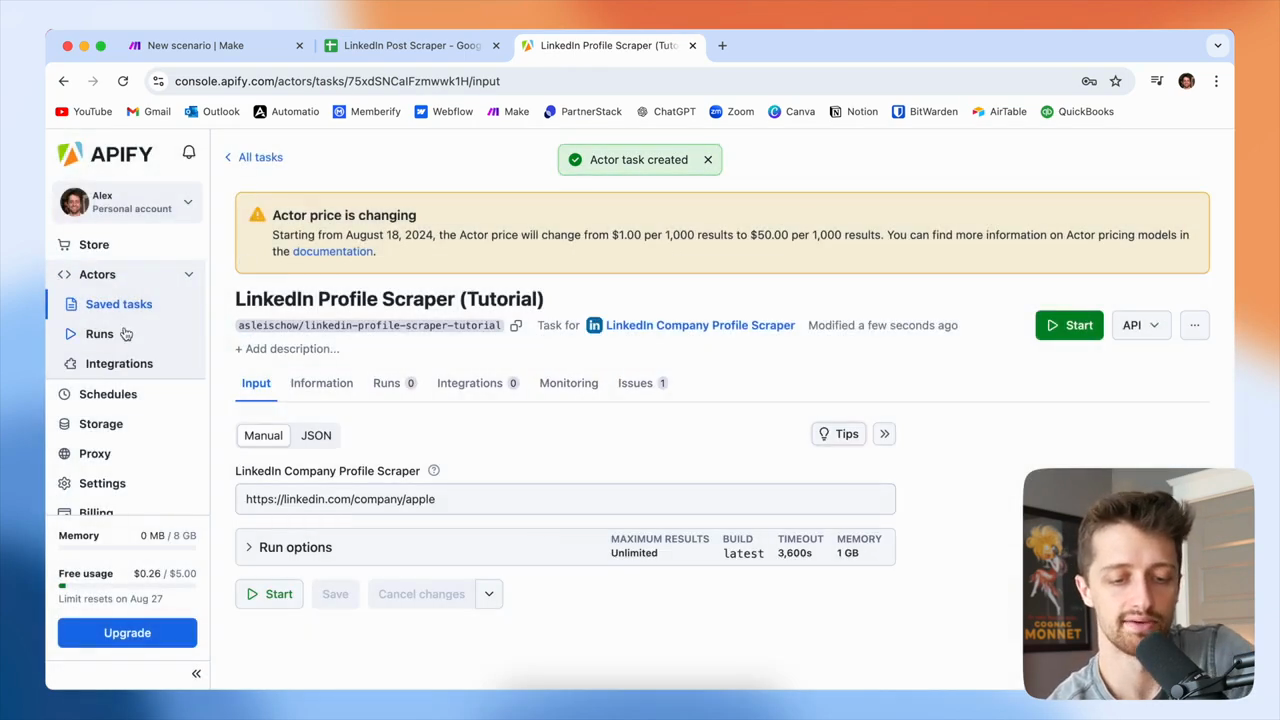

Once we have our task, it will appear here.

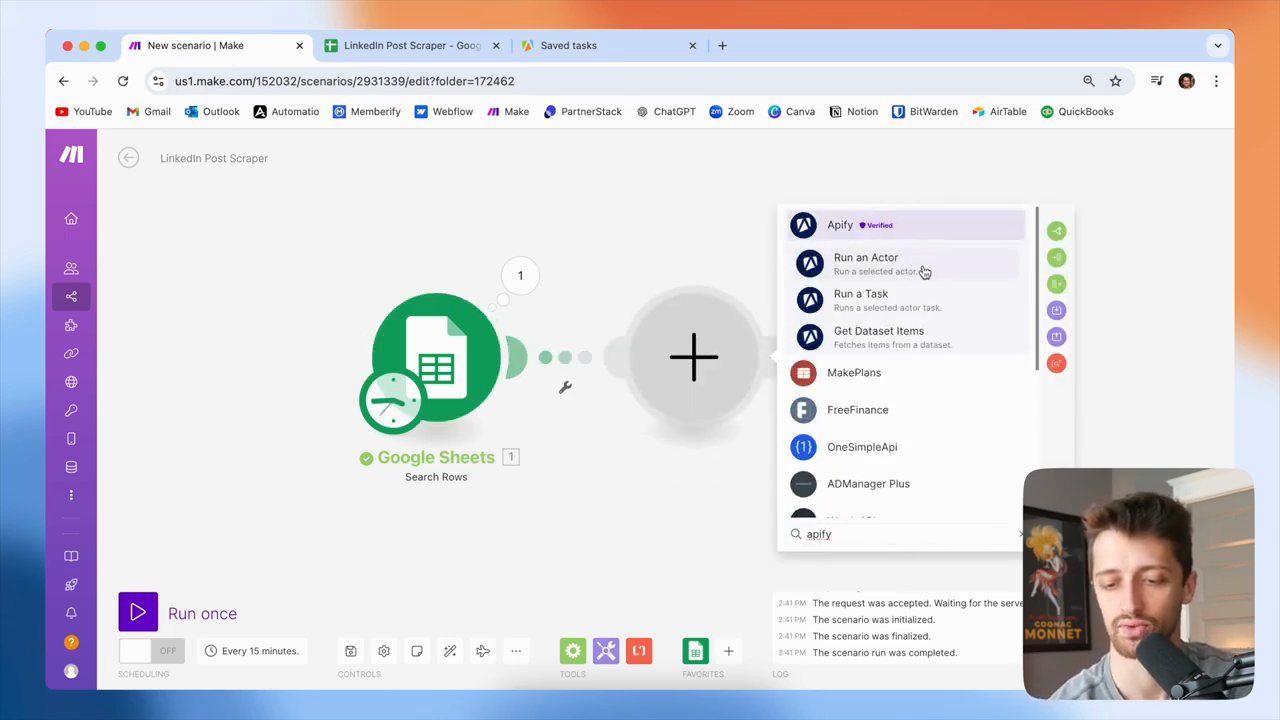

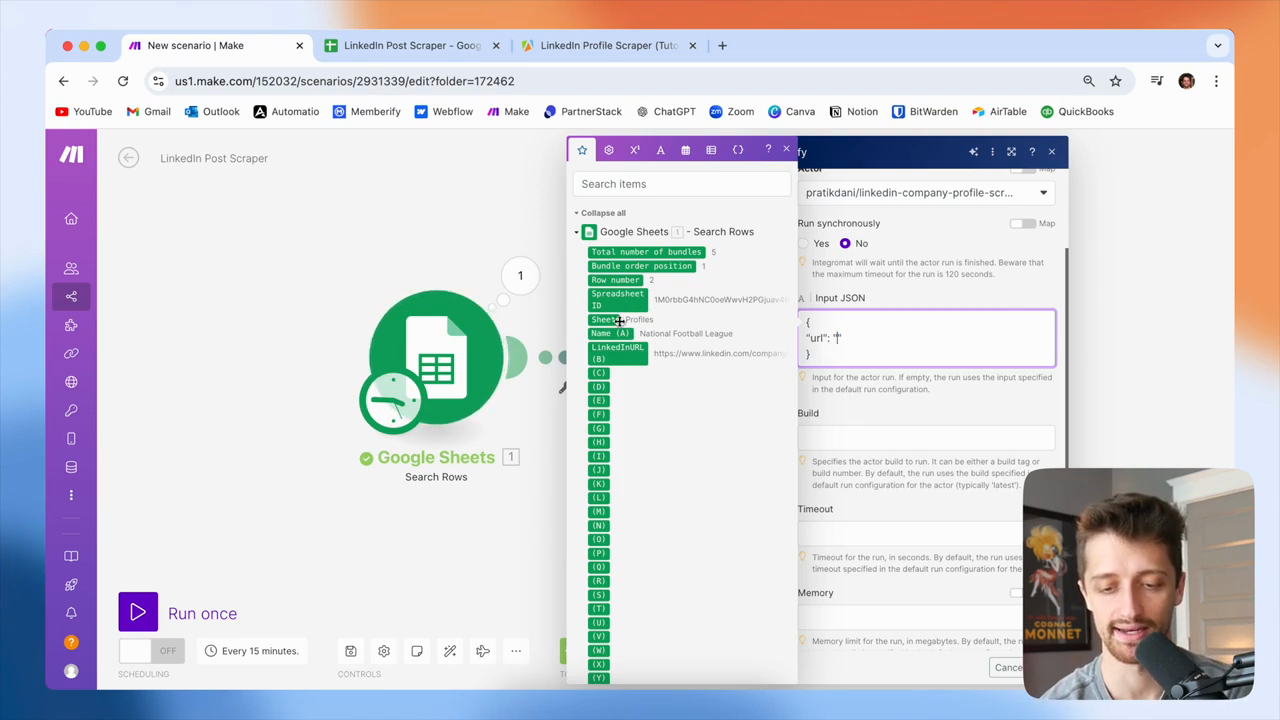

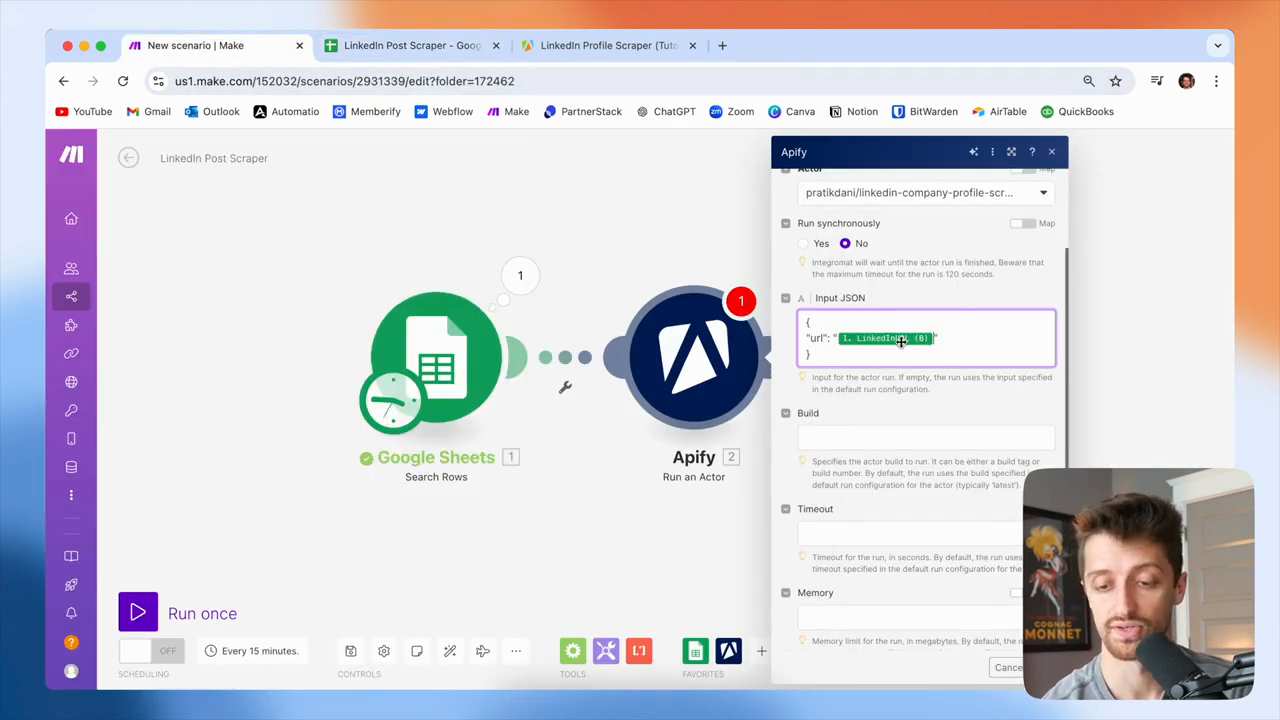

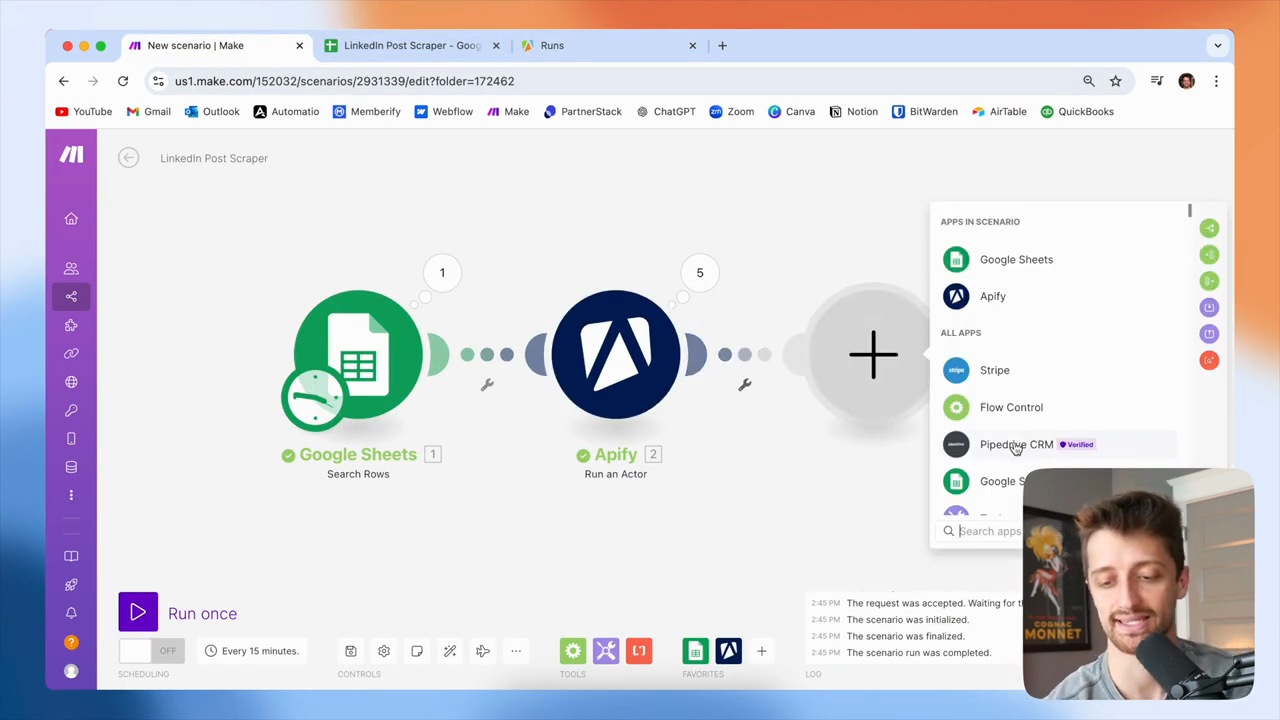

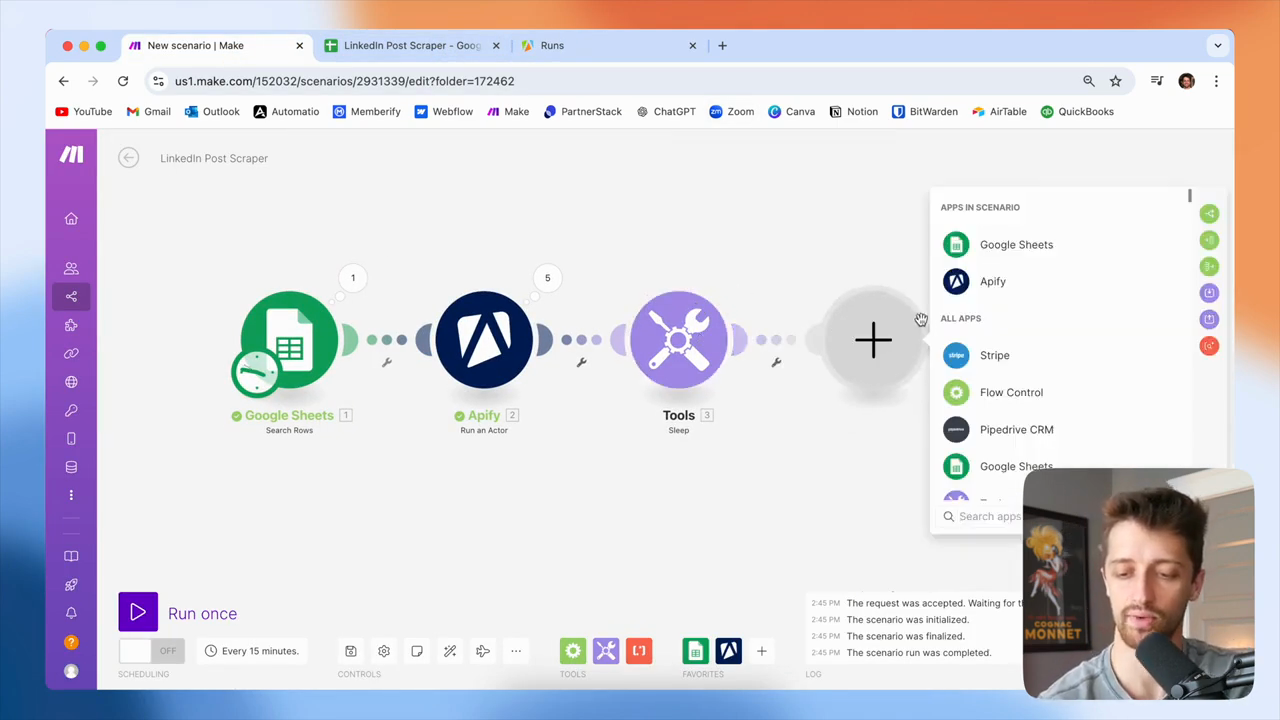

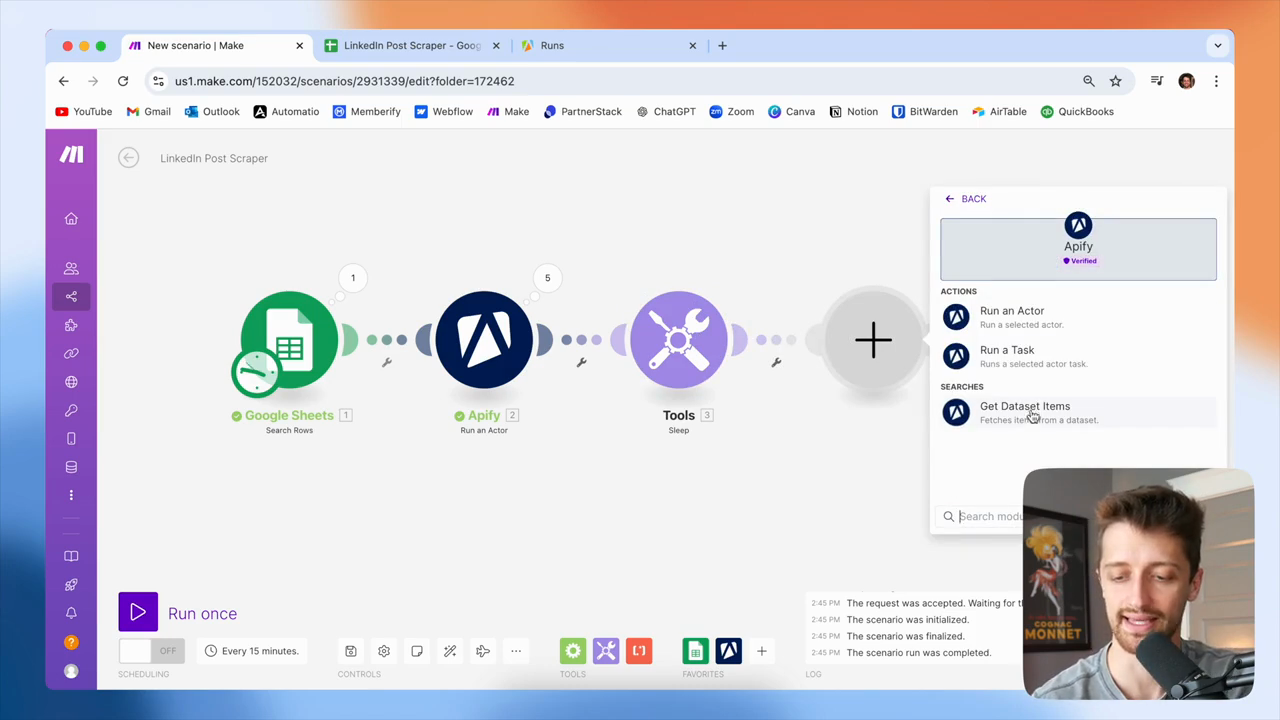

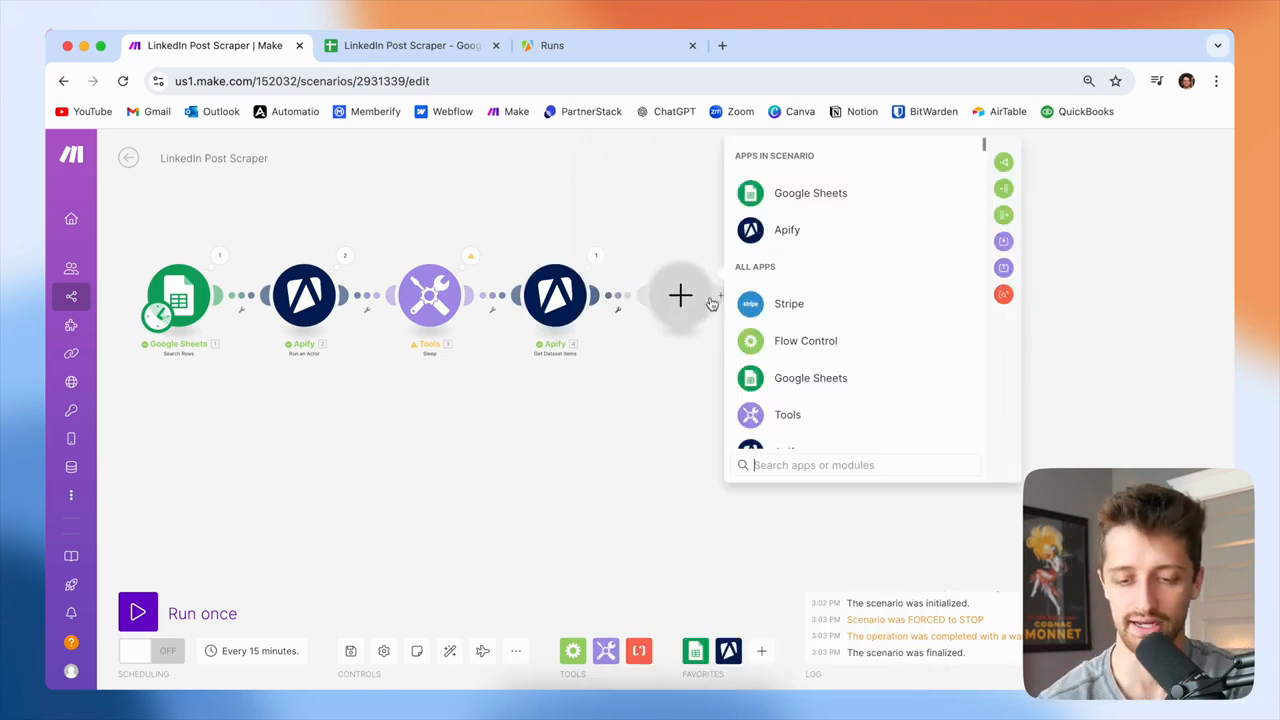

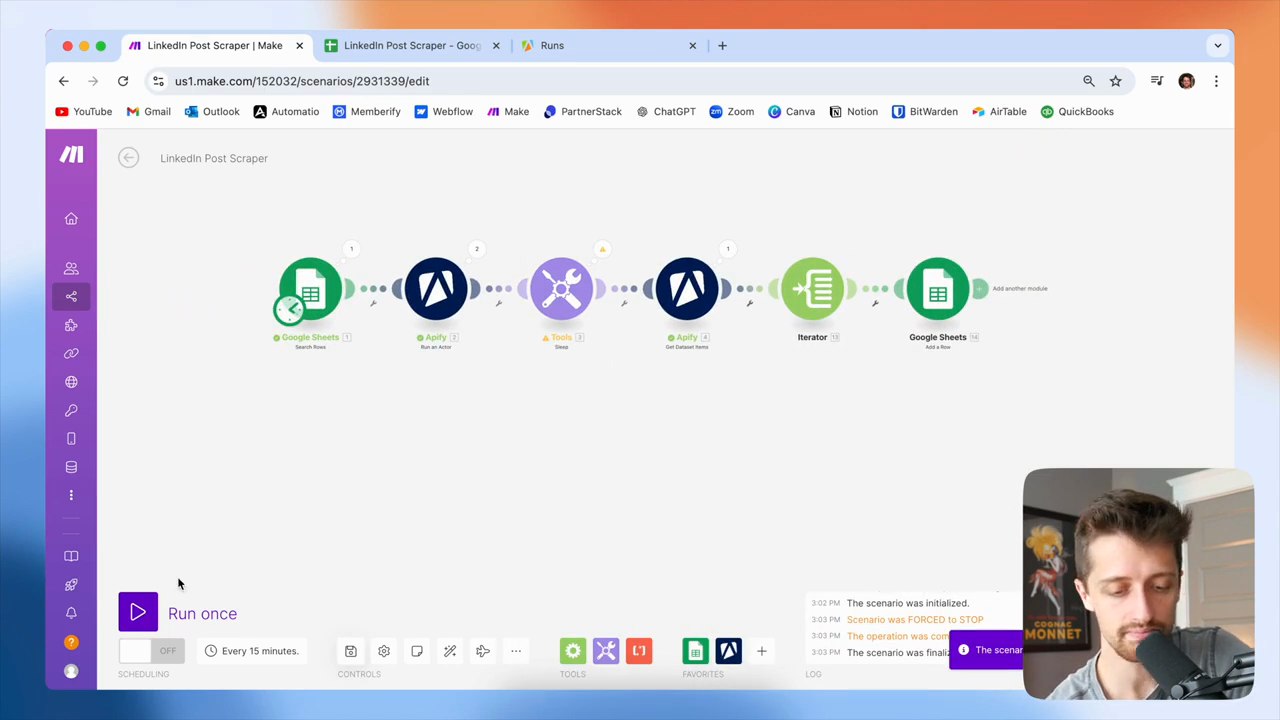

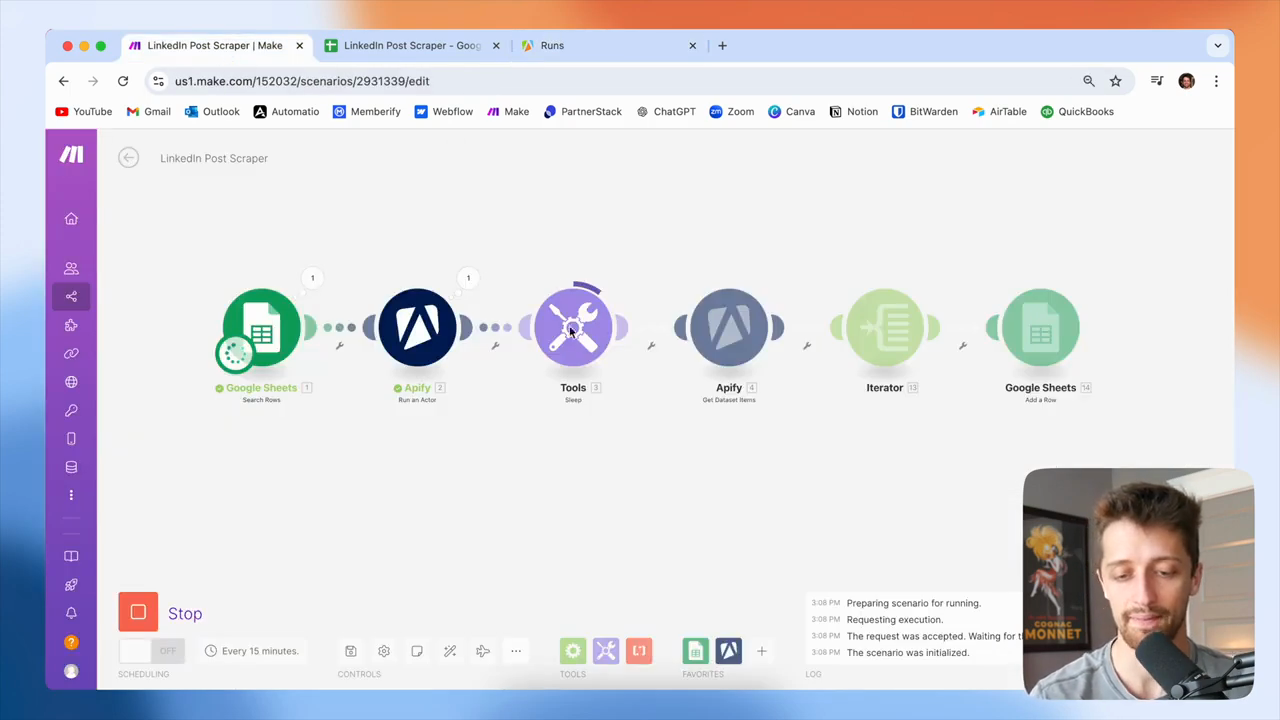

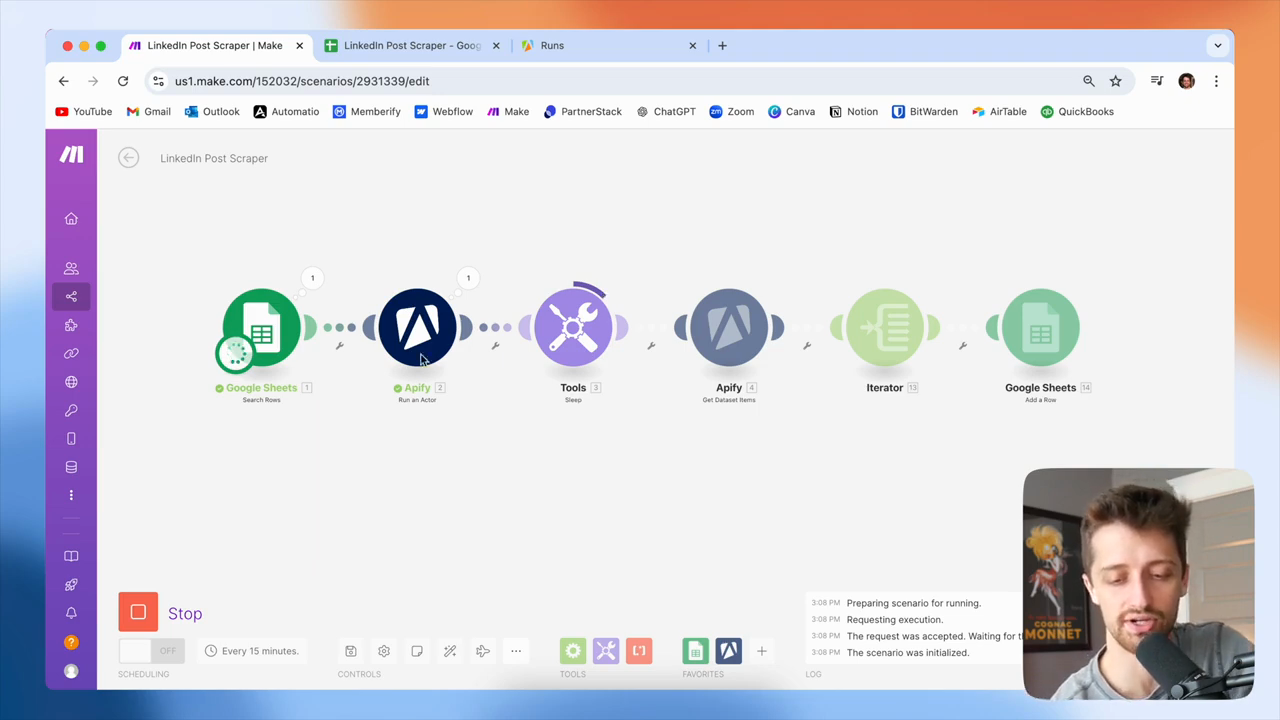

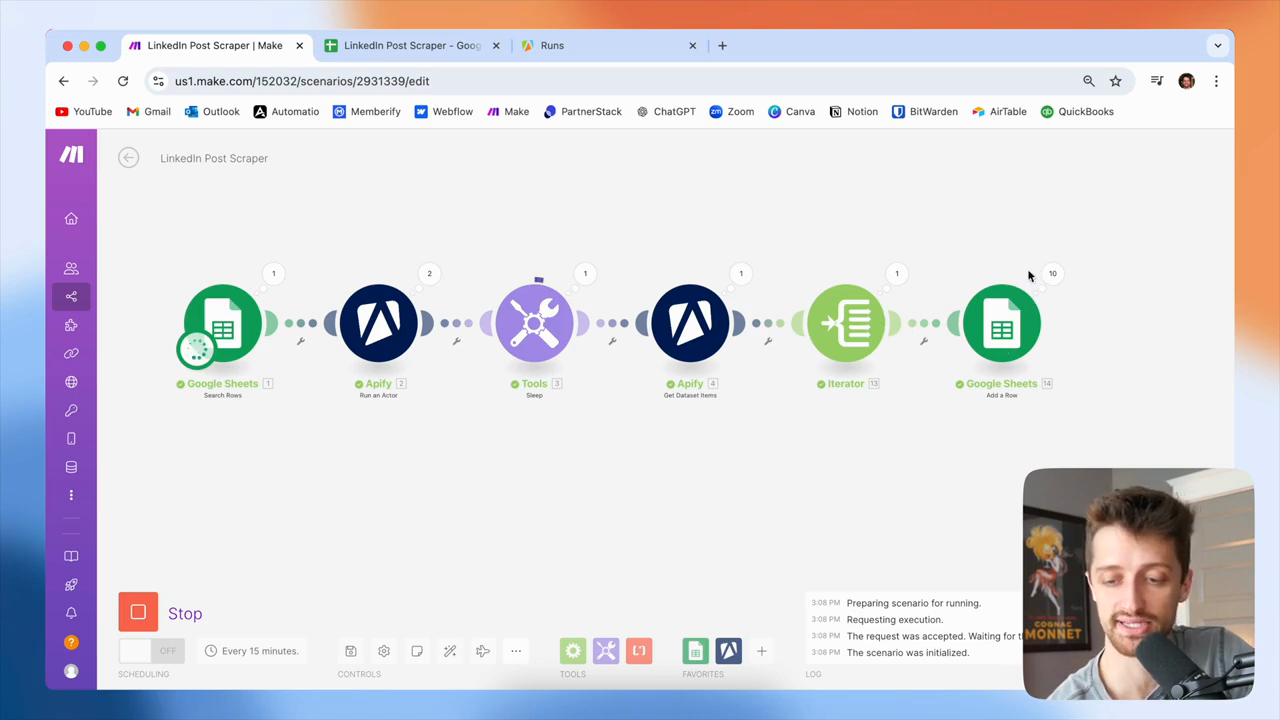

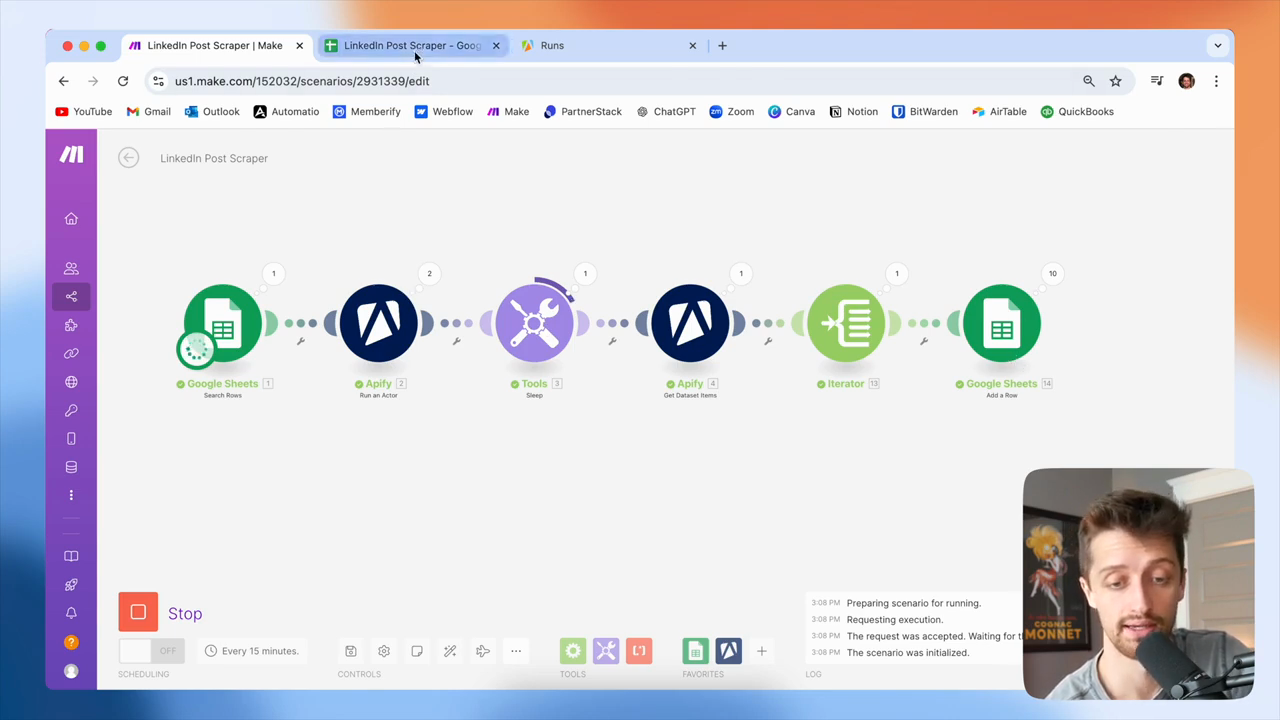

We will go into Make and add an Apify "Run Actor" module.

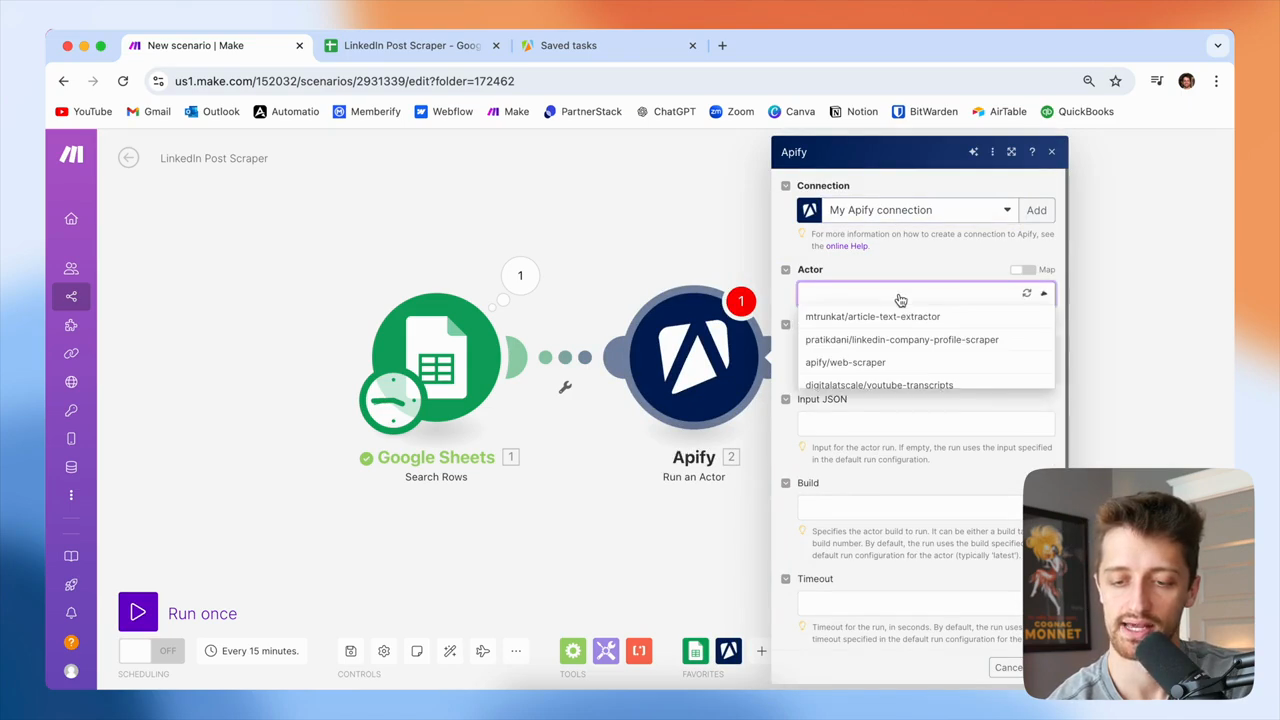

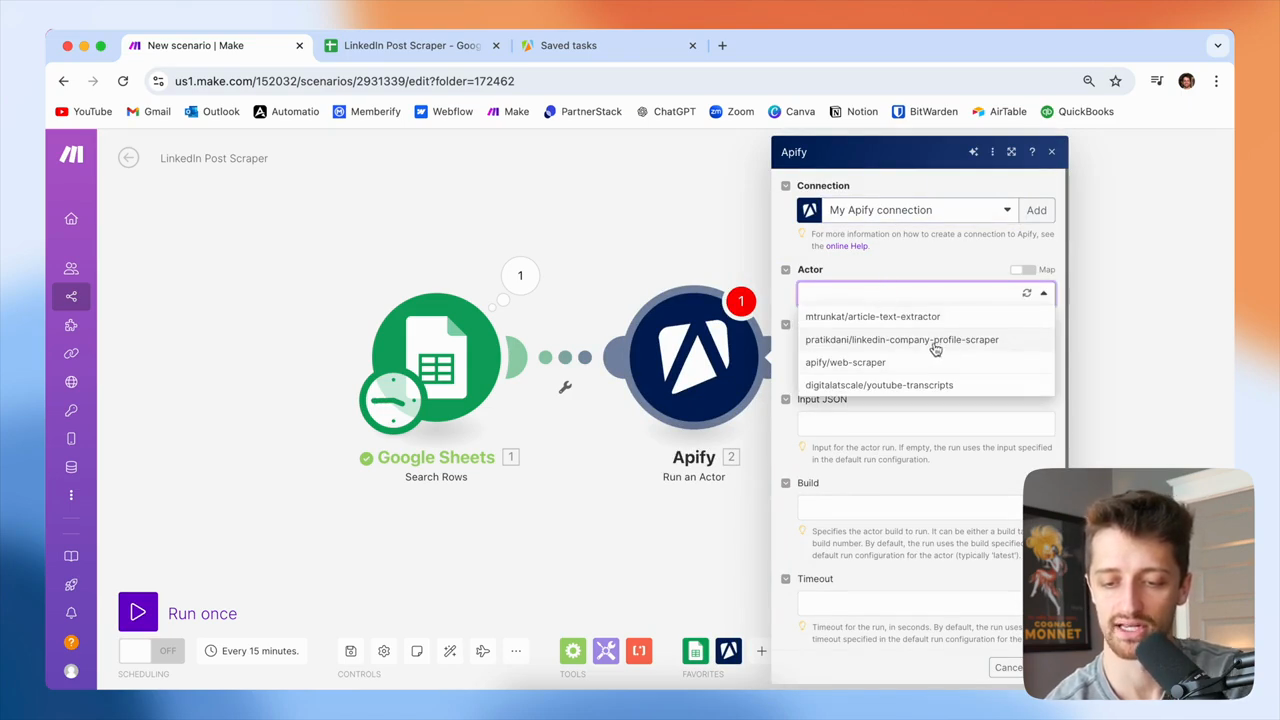

Connect your Apify account first, then locate the specific actor you need.

This will be the Prataki Dani LinkedIn company profile scraper.

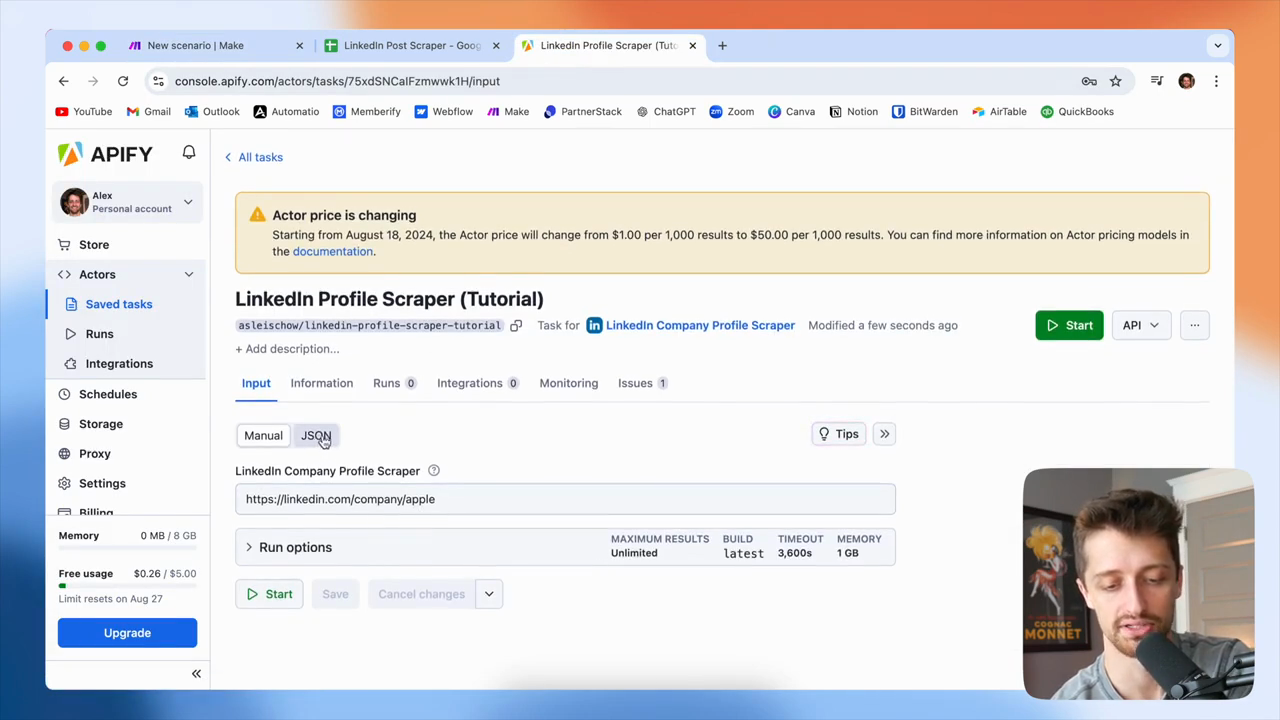

Sorry if I mispronounced the name. Okay, in this input JSON field, we will go back to Apify. Open the bot, switch to the JSON tab, and copy this in.

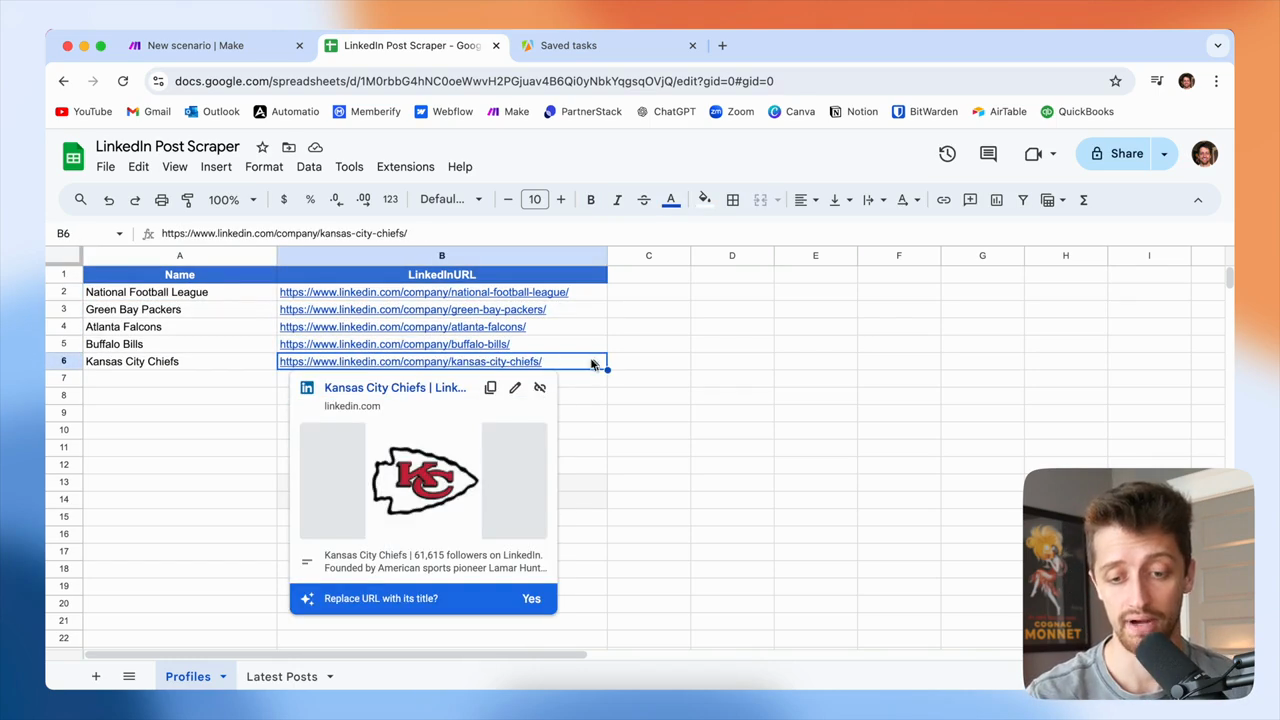

You can see the input is just the actual LinkedIn profile URL. That's all the bot requires. We'll paste that in here like this.

Instead of searching for the Apple profile each time, we will dynamically use the LinkedIn URL from our first module or from this column.

Okay? Just feed it in like that.
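The idea of swapping a hard-coded URL for the value from the sheet can be sketched in Python as follows. The `"url"` key and the `"LinkedIn URL"` column name are hypothetical; copy the real key names from the JSON tab of the actor in Apify, since this guide does not show them in text.

```python
import json


def build_actor_input(row: dict, url_column: str = "LinkedIn URL") -> str:
    """Build the input JSON for one sheet row.

    NOTE: "url" is a hypothetical key used for illustration only; the real
    actor defines its own input schema.
    """
    payload = {"url": row[url_column]}
    return json.dumps(payload)


# Placeholder row, standing in for one bundle from the Google Sheets module.
row = {"Company": "Example A", "LinkedIn URL": "https://www.linkedin.com/company/example-a/"}
actor_input = build_actor_input(row)
print(actor_input)
```

In Make, the same thing happens when you drop the mapped URL field from the first module into the input JSON in place of the fixed URL.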

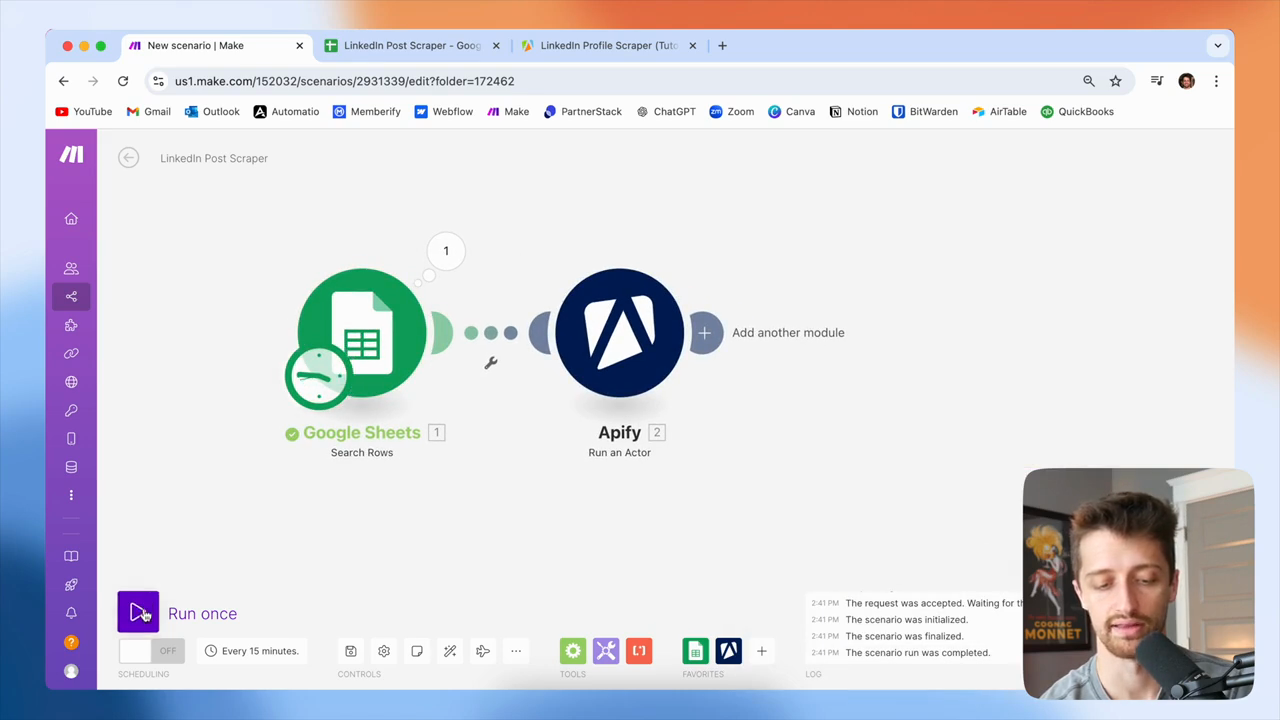

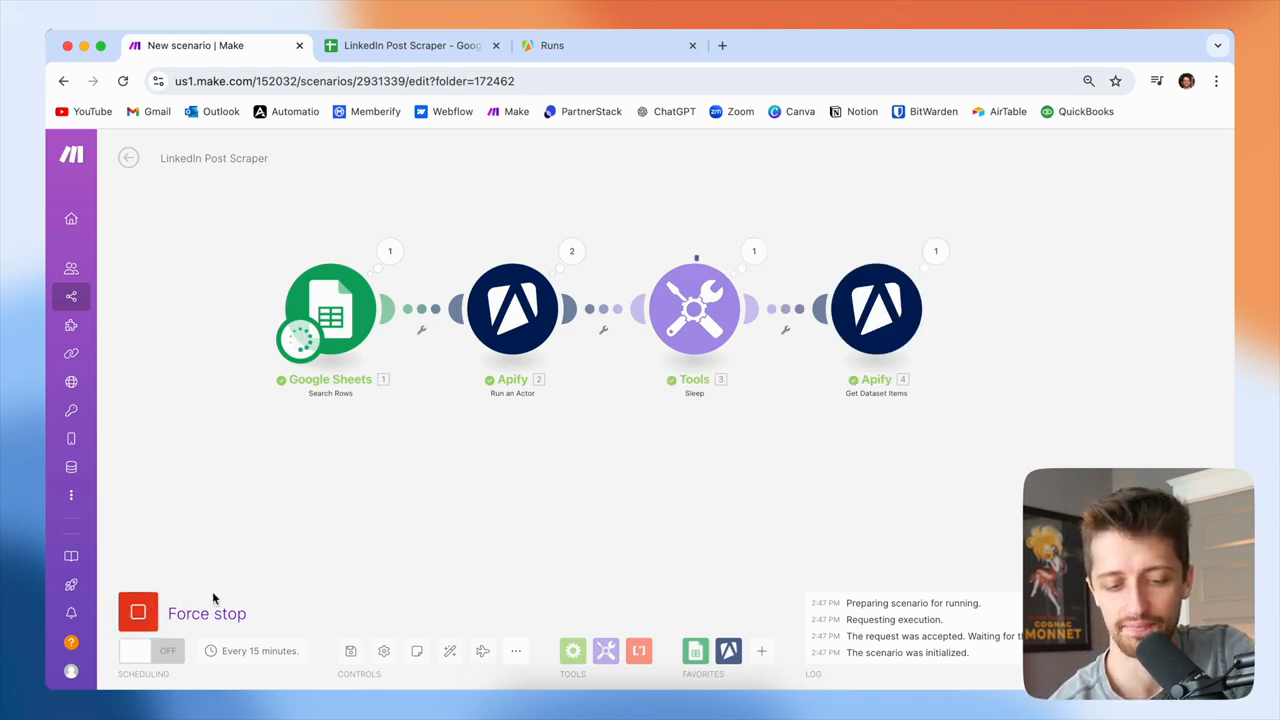

Click "OK," then run the program to test and ensure it works.

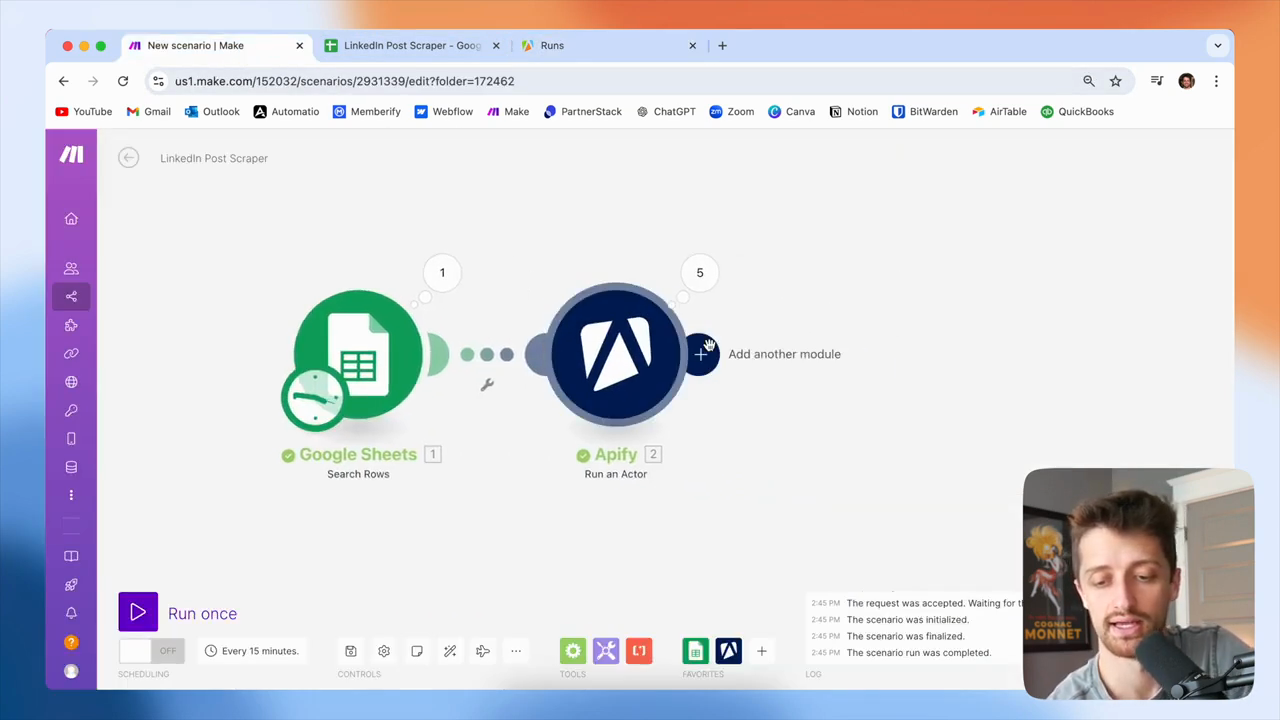

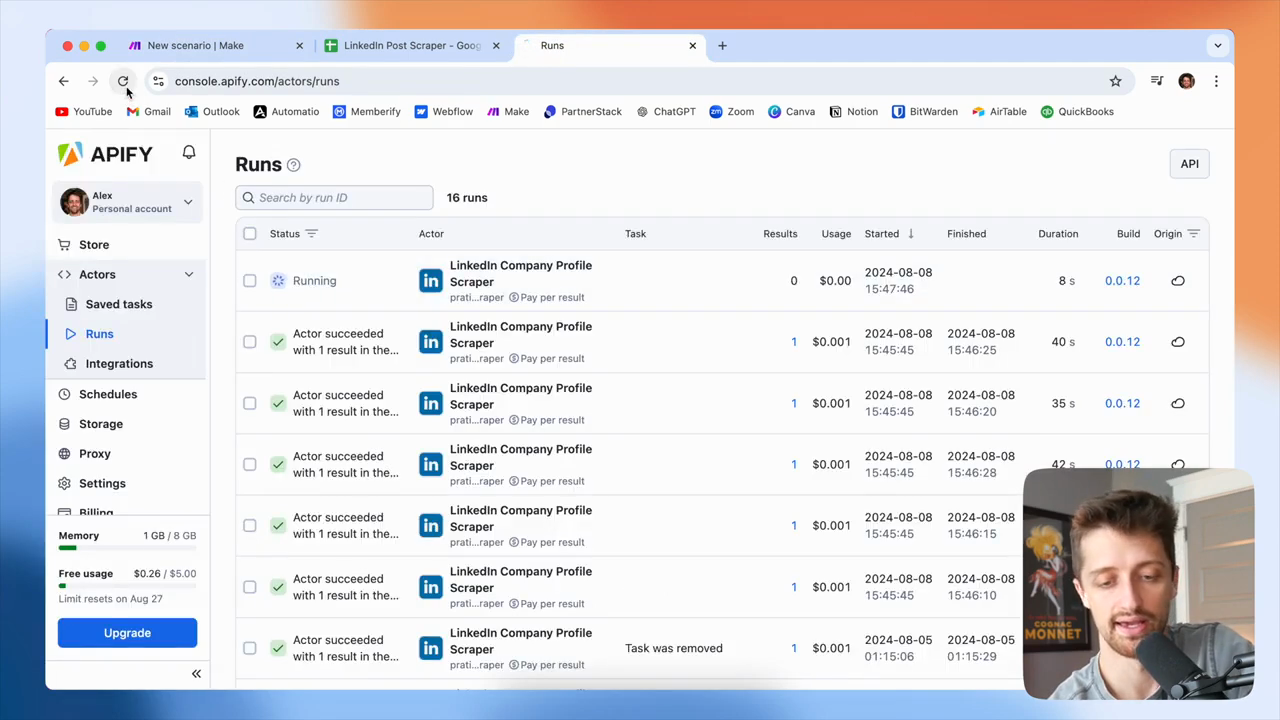

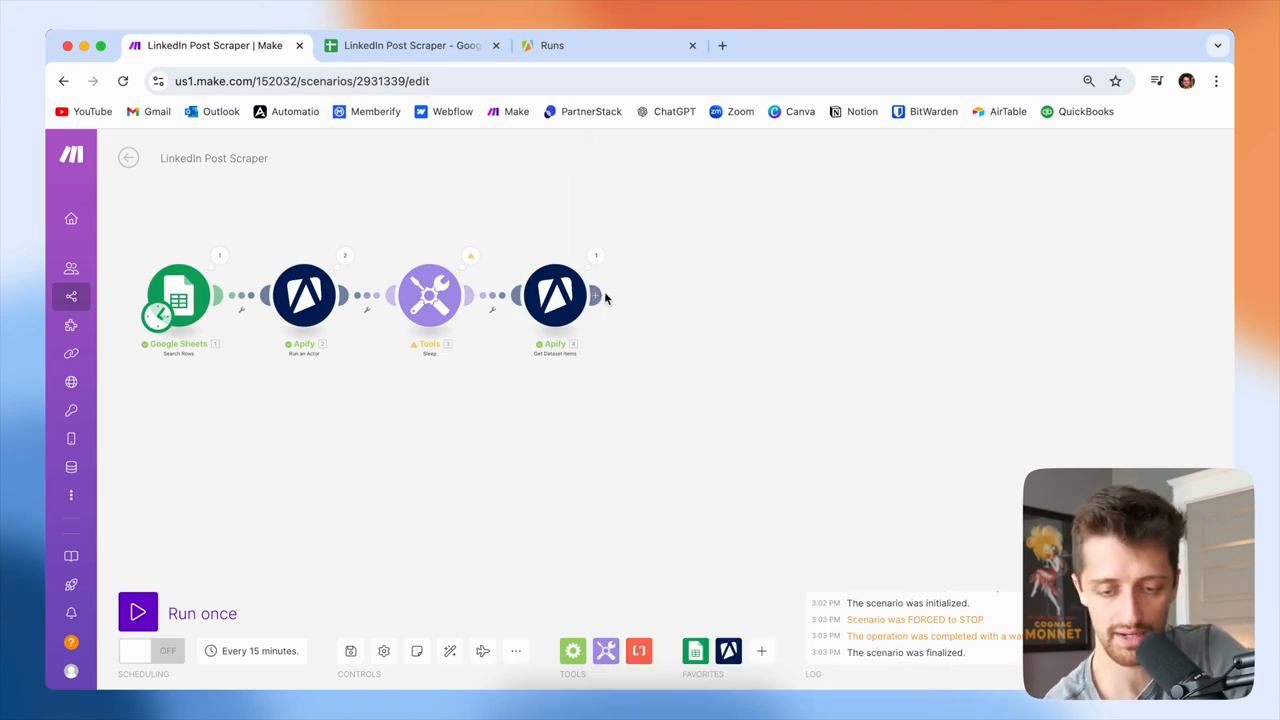

Okay, cool. It ran five times. If I go to Apify, I can see that we are now using memory, because five different runs are going at the same time.

That's not what we want, because many of these will take time to complete. These have been running for 20 seconds and are still going. Sometimes they take 45 seconds, and sometimes they take a minute.

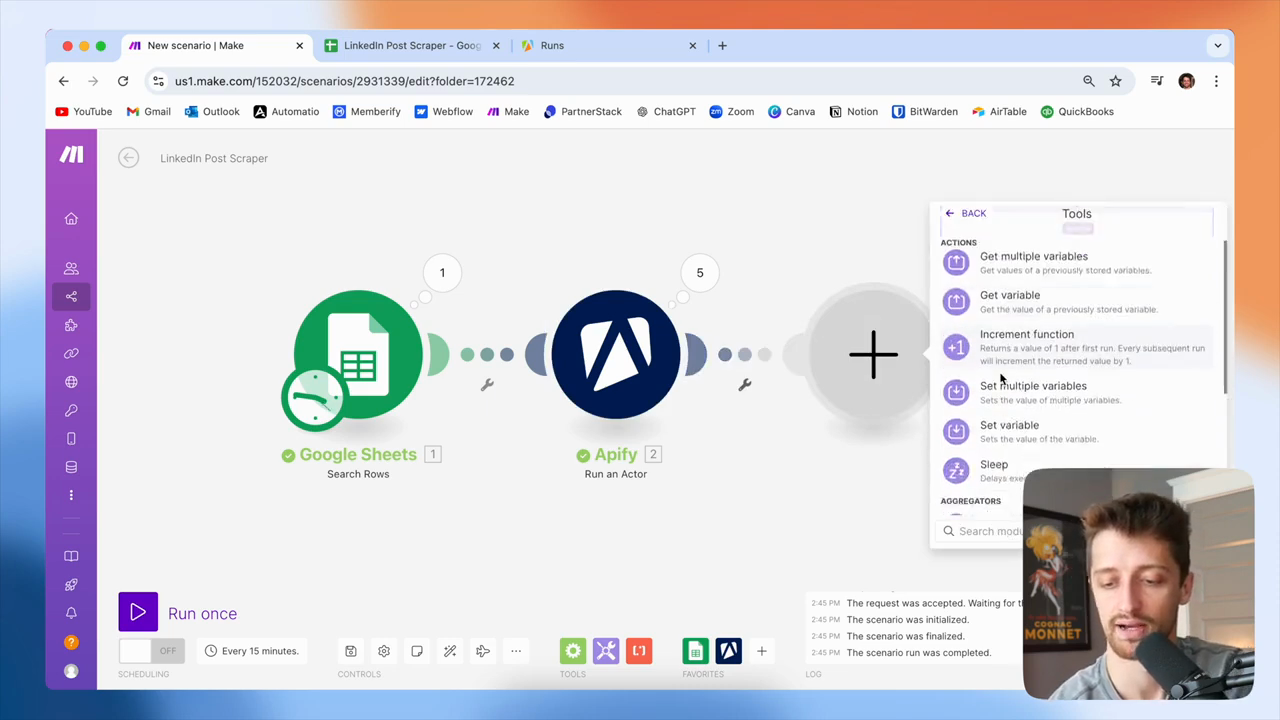

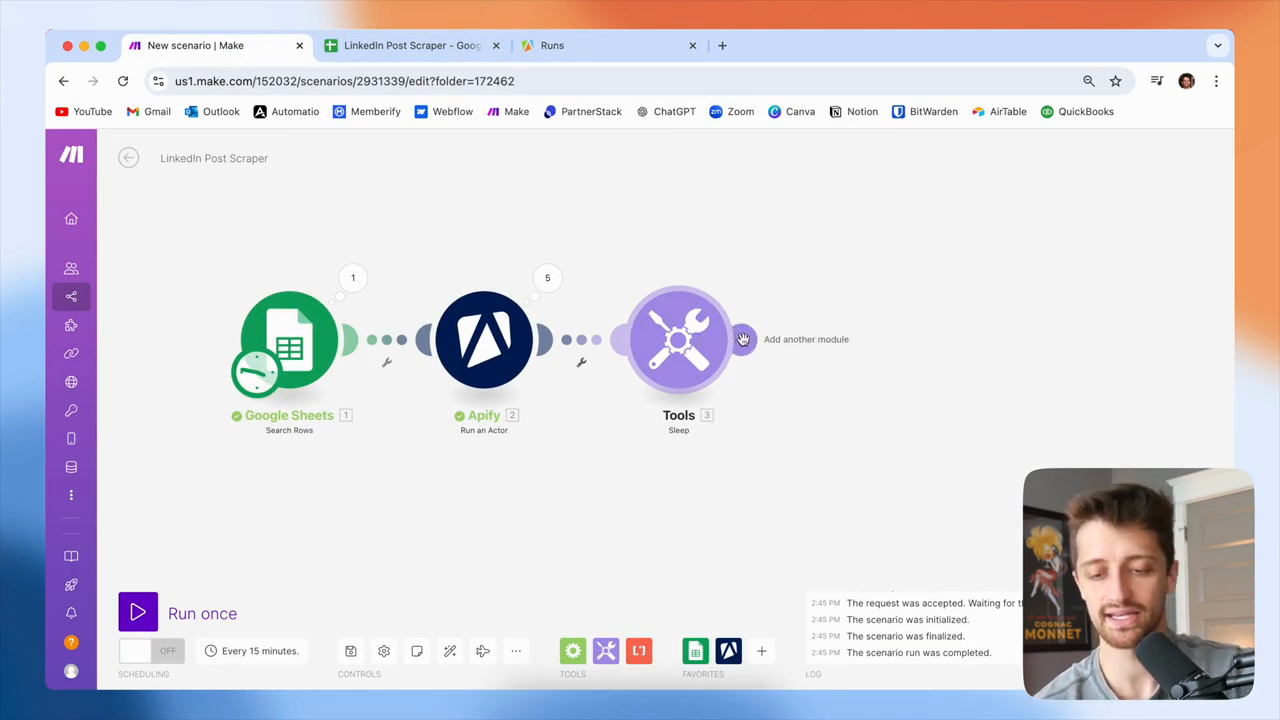

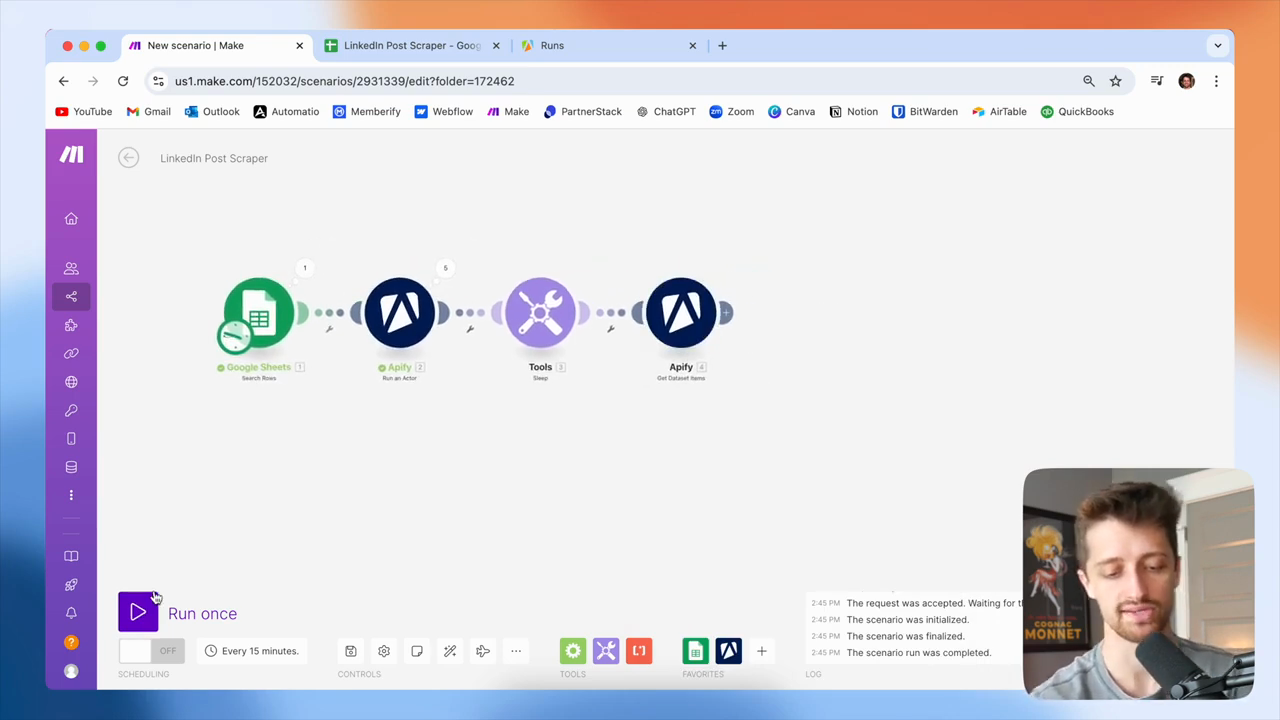

Okay, I usually add a short pause here. After this module, I will add a Sleep module and set it to pause for 60 seconds.

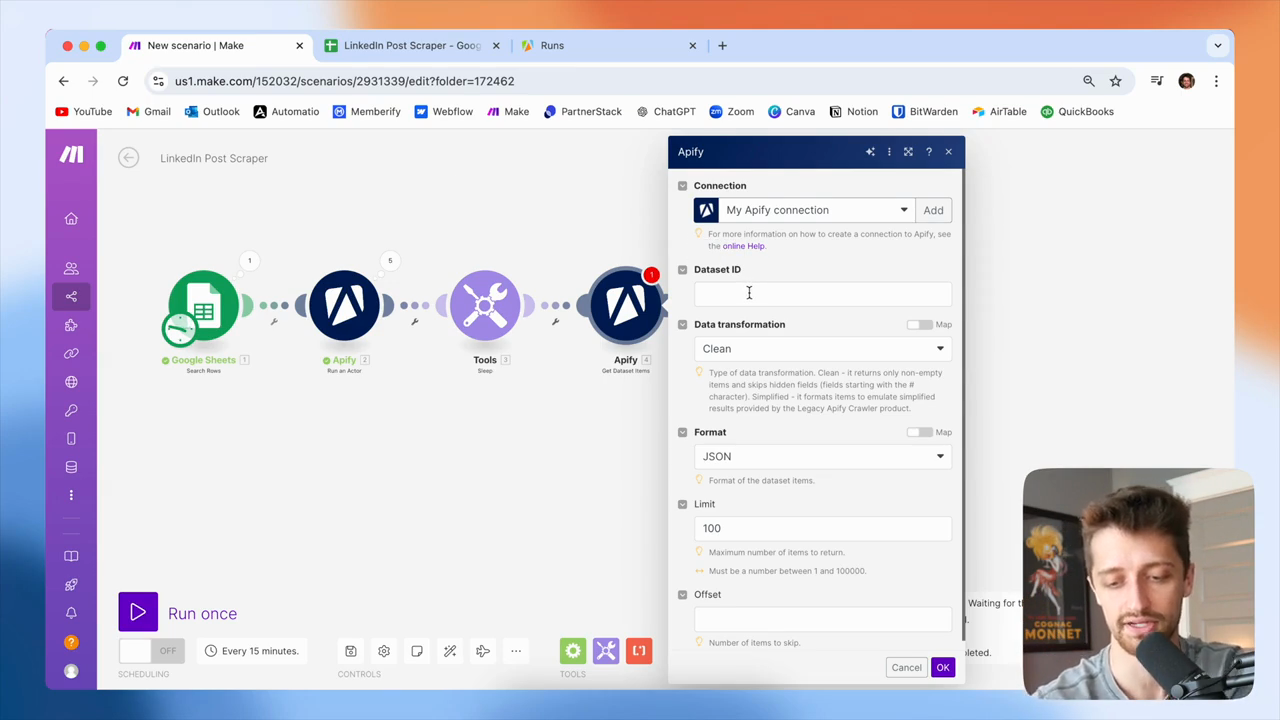

That way, our actor in Apify has time to scrape the LinkedIn profile we need. These all take about 30 to 40 seconds, so a minute should give us plenty of time. After the sleep, we need to get the dataset that these actors produce.

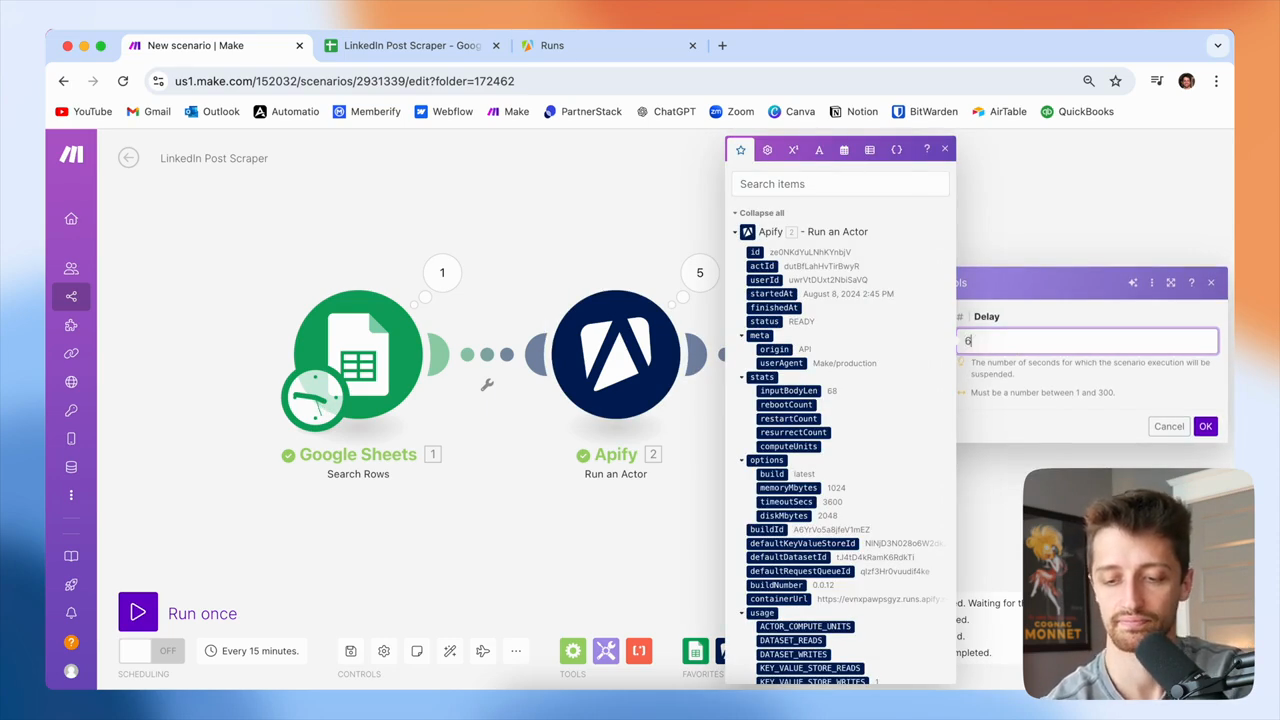

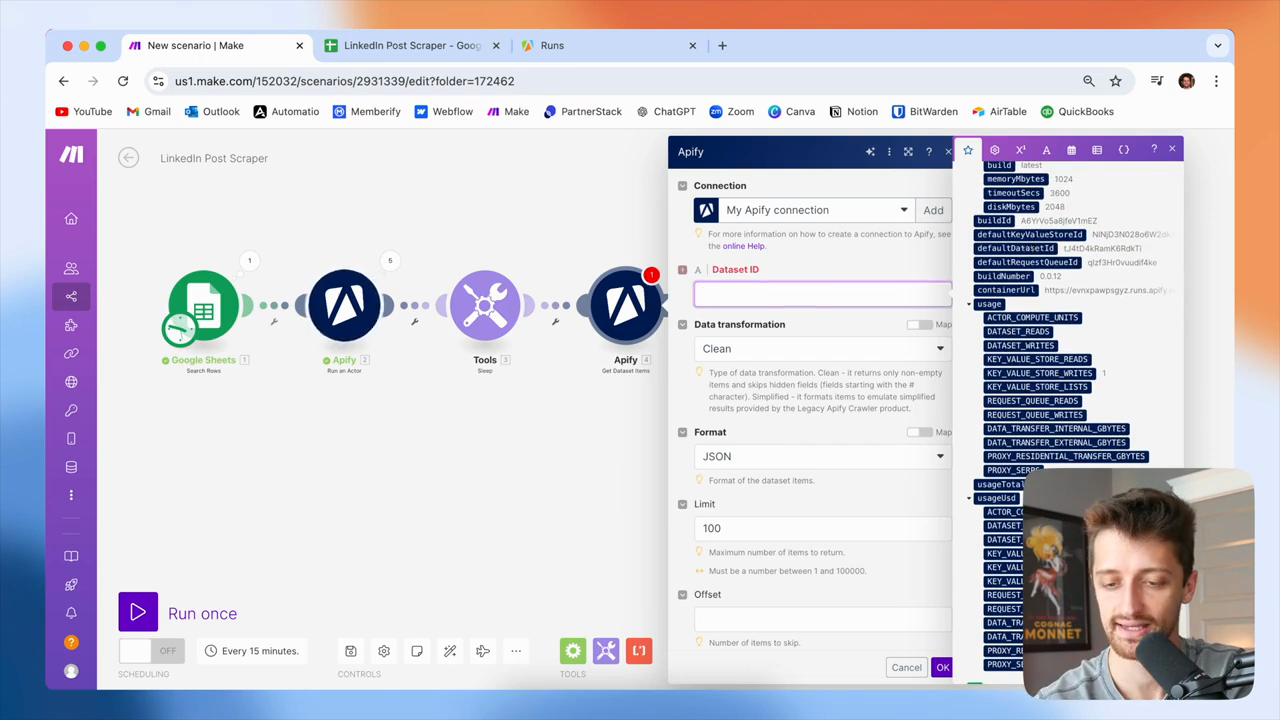

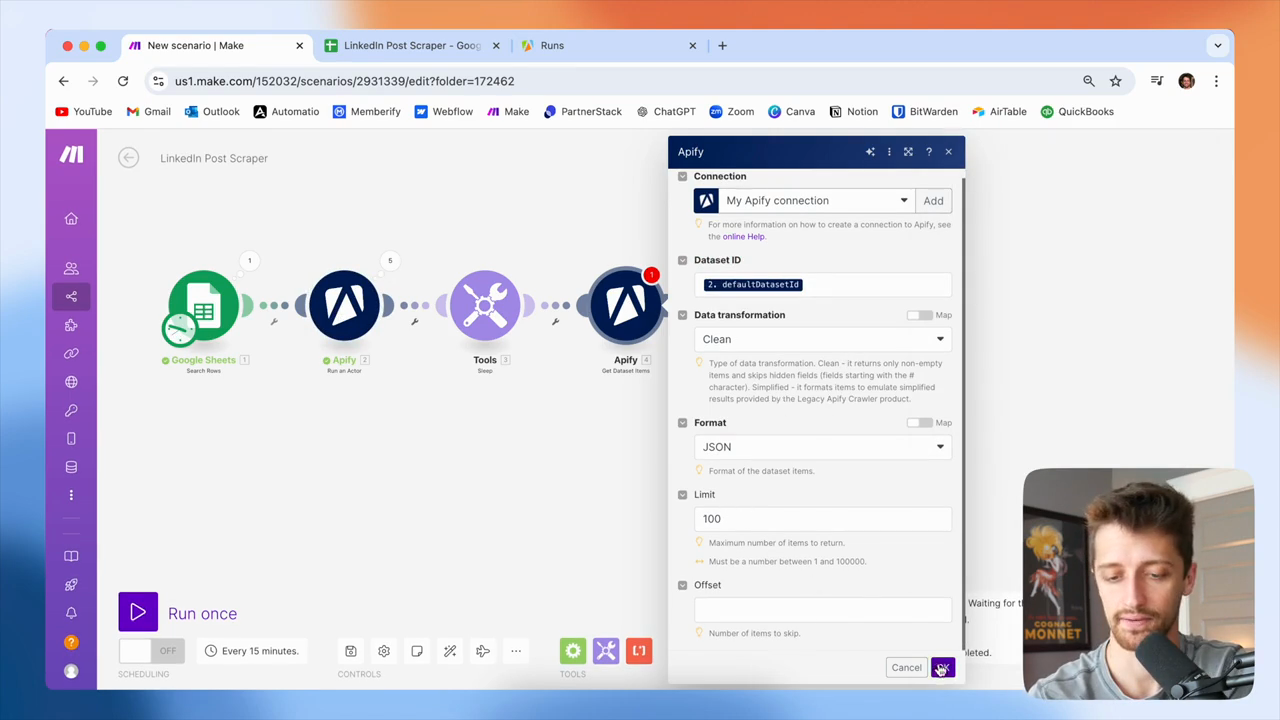

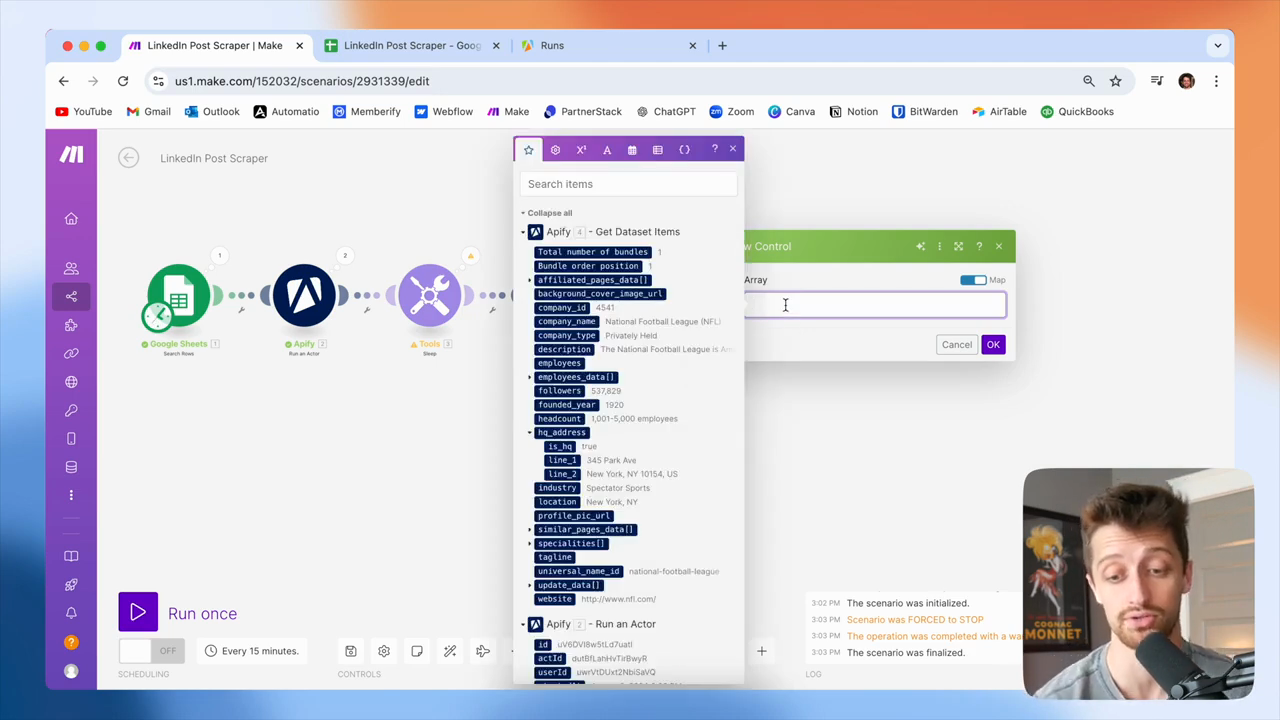

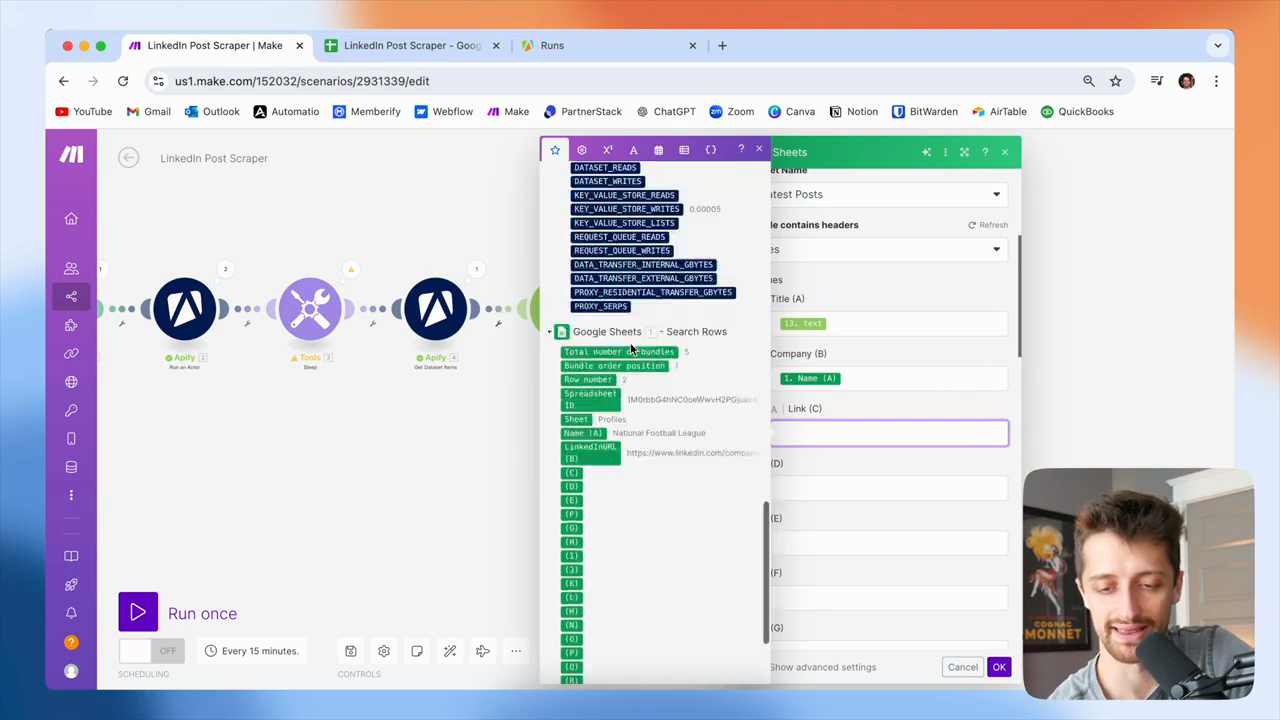

We launch the task or run the actors, which sends the bot to LinkedIn to collect the data. We need to retrieve the contents of the package they collect for us. This is the purpose of the fourth module. We need to provide a dataset ID so it can unpack and retrieve the contents for us.

All right? We're going to feed it the default dataset ID from our second module, okay?

This will gather all the information and posts from LinkedIn, so we can use them now. We'll leave the limit at one hundred. That's fine.
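For readers curious what fetching a dataset by ID looks like outside Make, here is a minimal sketch that builds the request URL for Apify's dataset items endpoint. It only constructs the URL and makes no network call. The dataset ID is a placeholder, and whether the Make module uses exactly this request is an assumption on my part.

```python
from urllib.parse import urlencode


def dataset_items_url(dataset_id: str, limit: int = 100) -> str:
    """Build the URL for listing items in an Apify dataset.

    An API token is also required when calling it (for example in an
    Authorization header); it is deliberately left out of this sketch.
    """
    query = urlencode({"format": "json", "limit": limit})
    return f"https://api.apify.com/v2/datasets/{dataset_id}/items?{query}"


# "abc123" is a placeholder for the default dataset ID from the run module.
url = dataset_items_url("abc123")
print(url)
```

The `limit` value mirrors the limit of one hundred left in place in the module settings.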

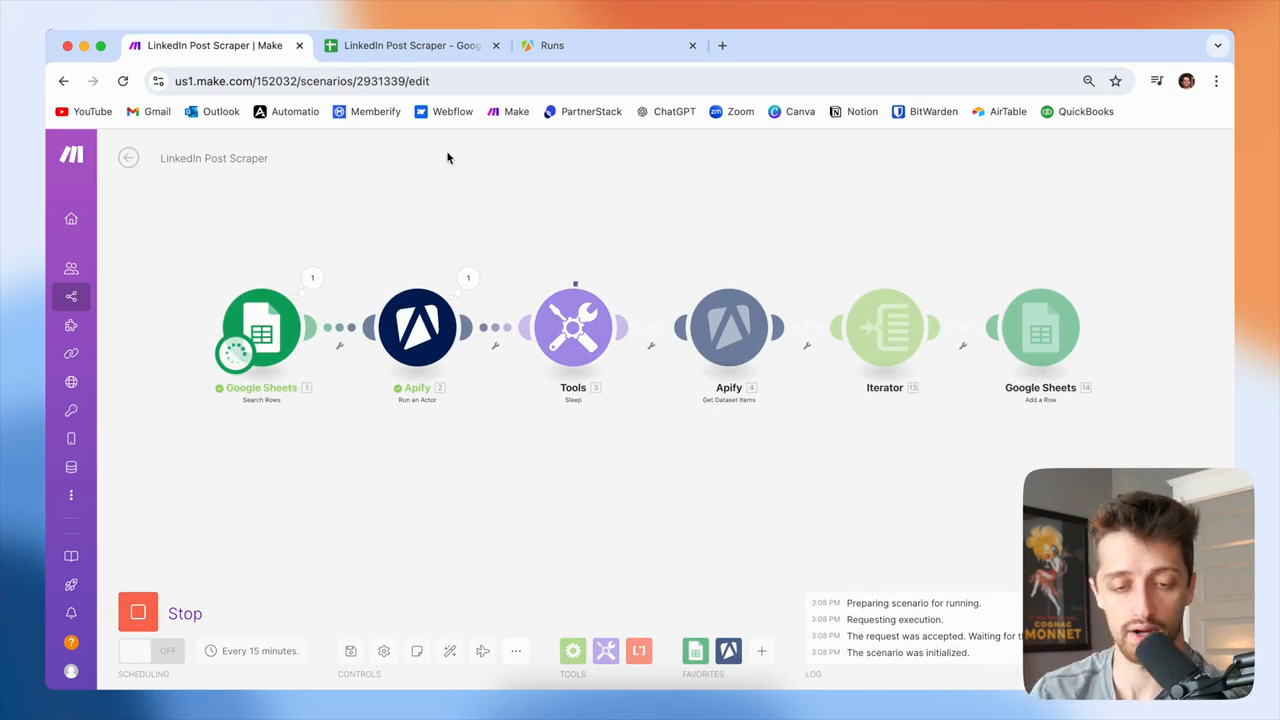

All right? Next, we'll run this again to check how it looks.

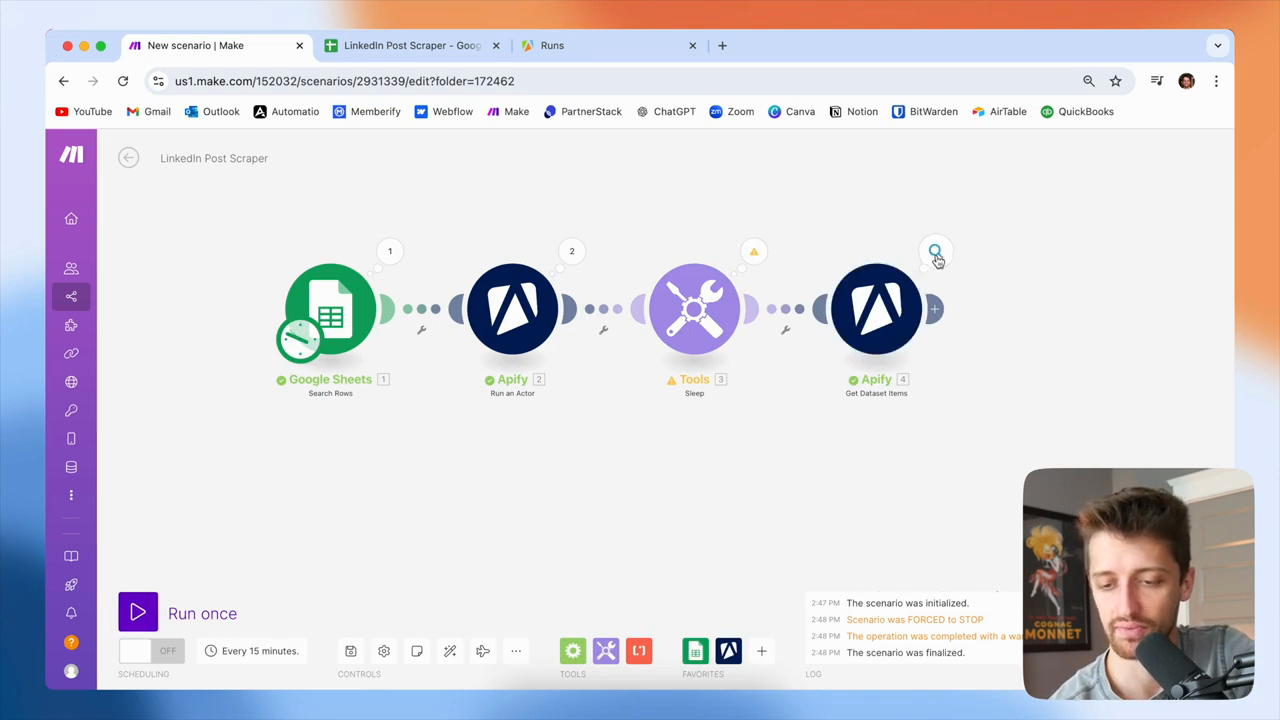

Only one of our actors is running right now. We will now wait for 60 seconds while this runs. We need to do this because if we try to get data from our actor before it finishes running, we won't retrieve any results.

This one finished in 19 seconds, but the scenario still holds for the full 60 seconds. Once the wait is over, we can use this module to retrieve the data. We'll wait another 30 seconds, then continue. Okay, that's done now.
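The reason for the fixed wait can be illustrated with a small simulation. This is a sketch under stated assumptions: `FakeRun` is a made-up stand-in for an actor run, and it advances a pretend clock instead of really sleeping, so the example runs instantly.

```python
from typing import Callable, List


def fetch_after_wait(
    fetch_items: Callable[[], List[dict]],
    wait_seconds: float,
    sleep: Callable[[float], None],
) -> List[dict]:
    """Pause for a fixed time, then fetch whatever the run has produced."""
    sleep(wait_seconds)
    return fetch_items()


class FakeRun:
    """Hypothetical stand-in for an actor run that finishes after `duration` seconds."""

    def __init__(self, duration: float, posts: List[dict]) -> None:
        self.duration = duration
        self.posts = posts
        self.elapsed = 0.0

    def sleep(self, seconds: float) -> None:
        self.elapsed += seconds  # advance a pretend clock, no real waiting

    def fetch_items(self) -> List[dict]:
        # Before the run has finished, there is nothing to retrieve yet.
        return self.posts if self.elapsed >= self.duration else []


posts = [{"text": "post 1"}, {"text": "post 2"}]

impatient = FakeRun(duration=19, posts=posts)
early_result = fetch_after_wait(impatient.fetch_items, 5, impatient.sleep)

patient = FakeRun(duration=19, posts=posts)
late_result = fetch_after_wait(patient.fetch_items, 60, patient.sleep)

print(len(early_result), len(late_result))
```

A fixed 60-second pause is simple and wastes some time on fast runs; the trade-off is that a run taking longer than the pause would still come back empty.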

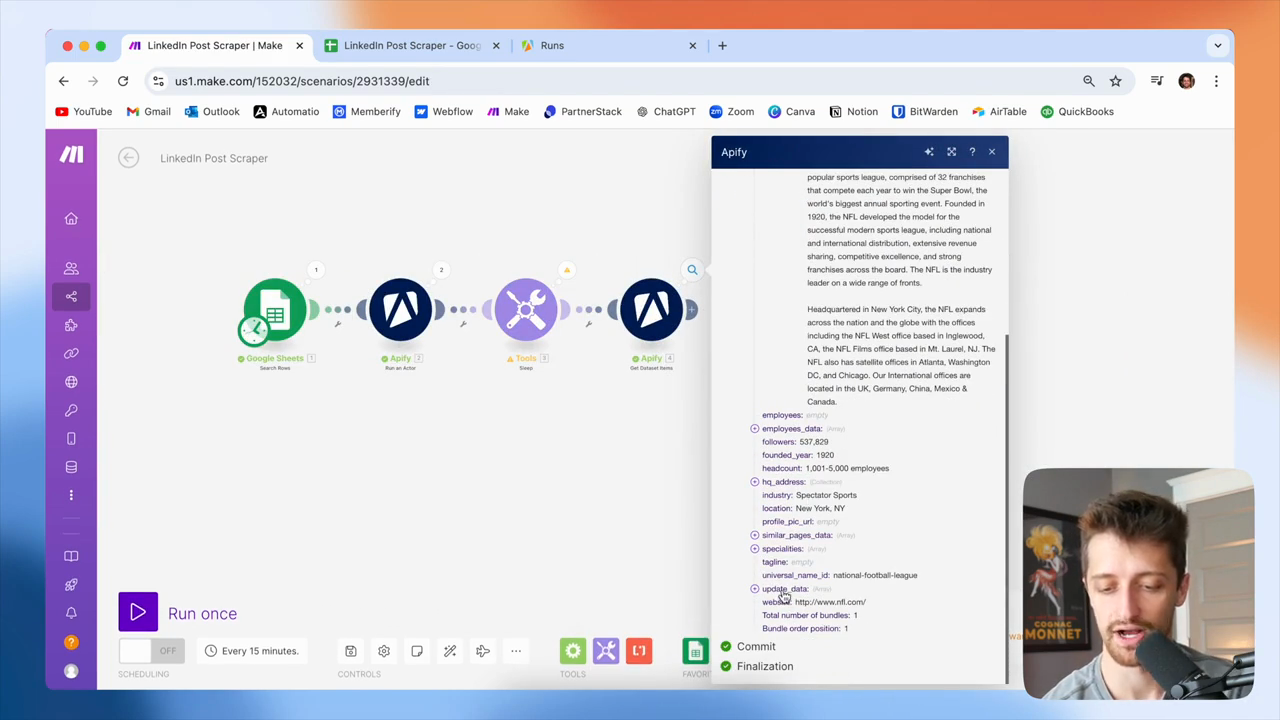

I'll stop the run here because I don't want to go through all of the profiles. Now, we can see what we got from the module that gets the dataset items.

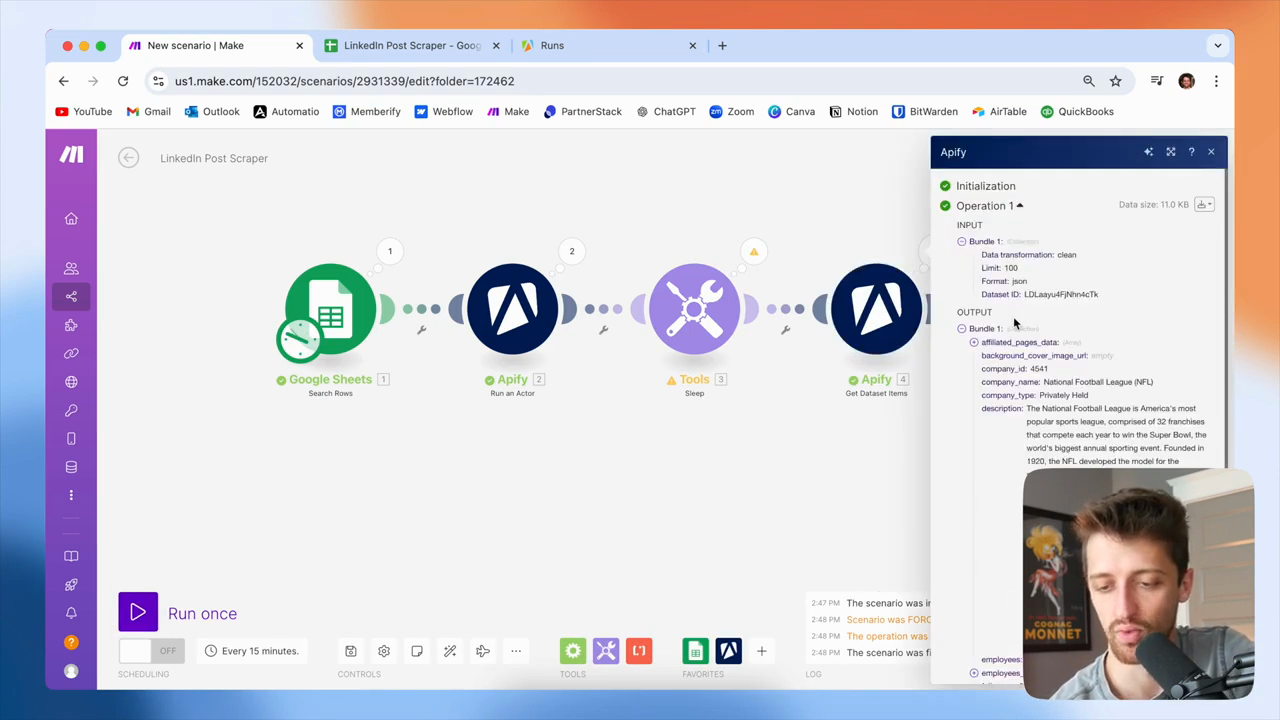

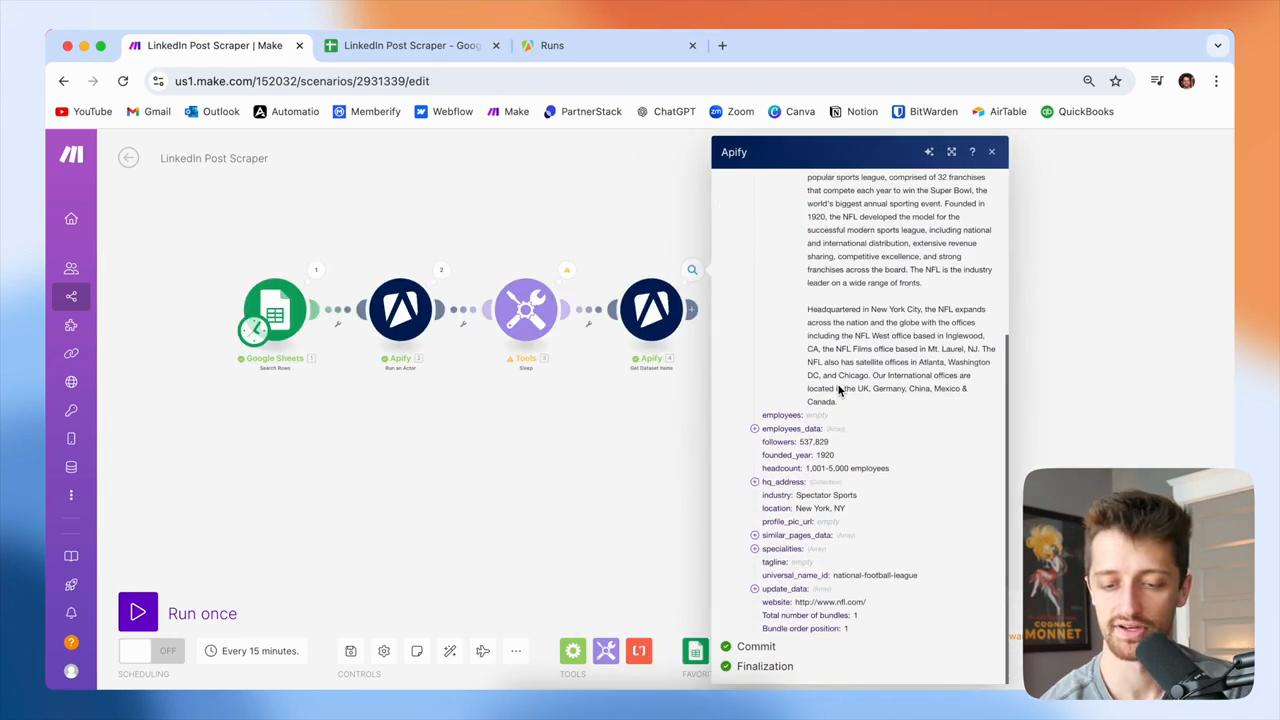

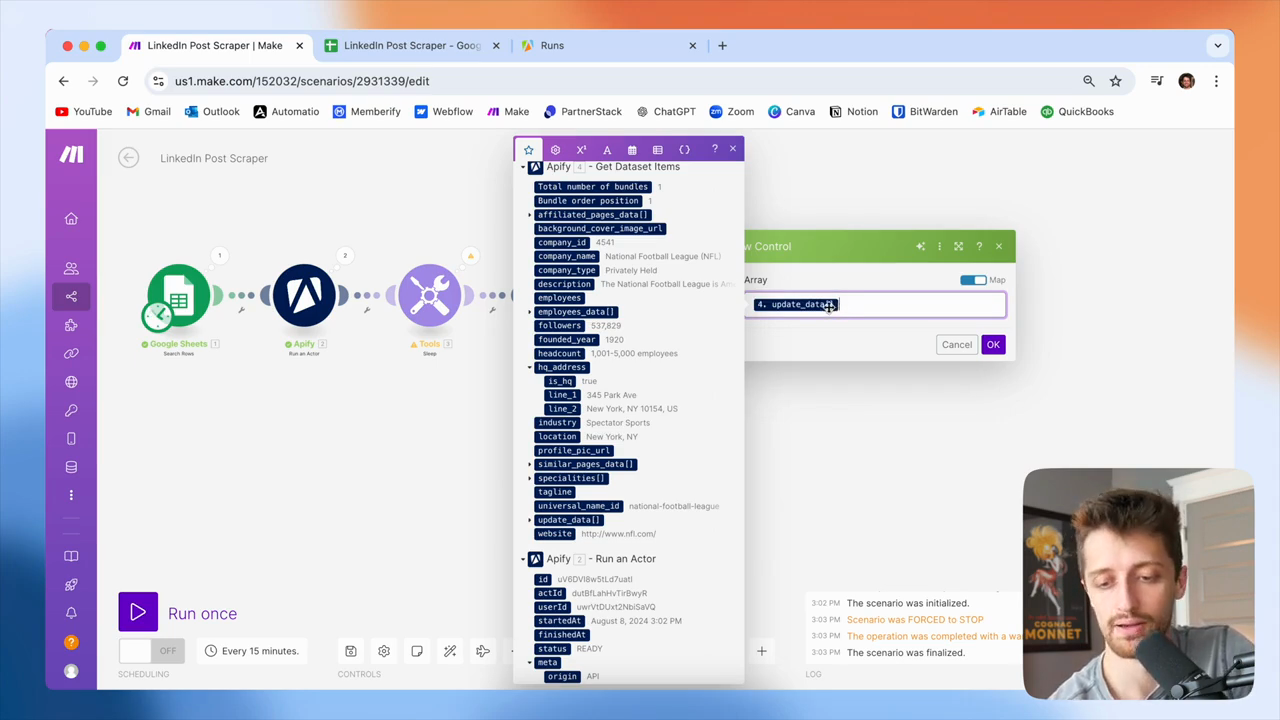

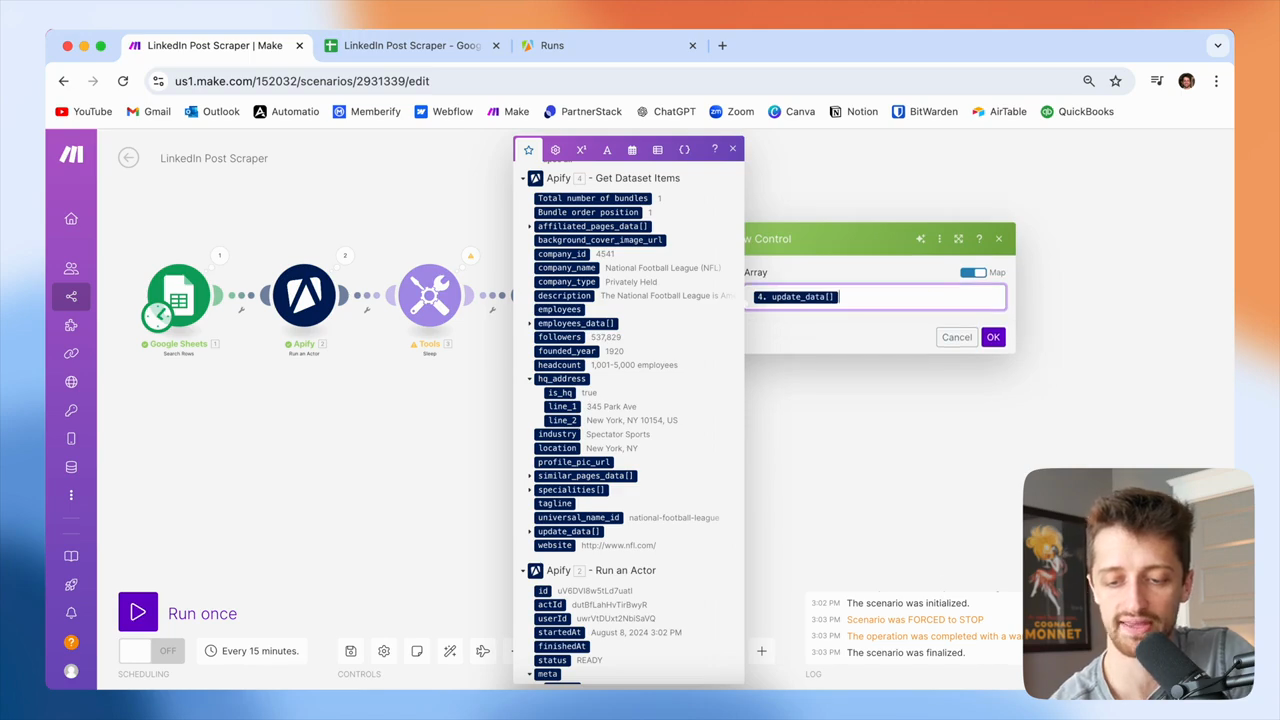

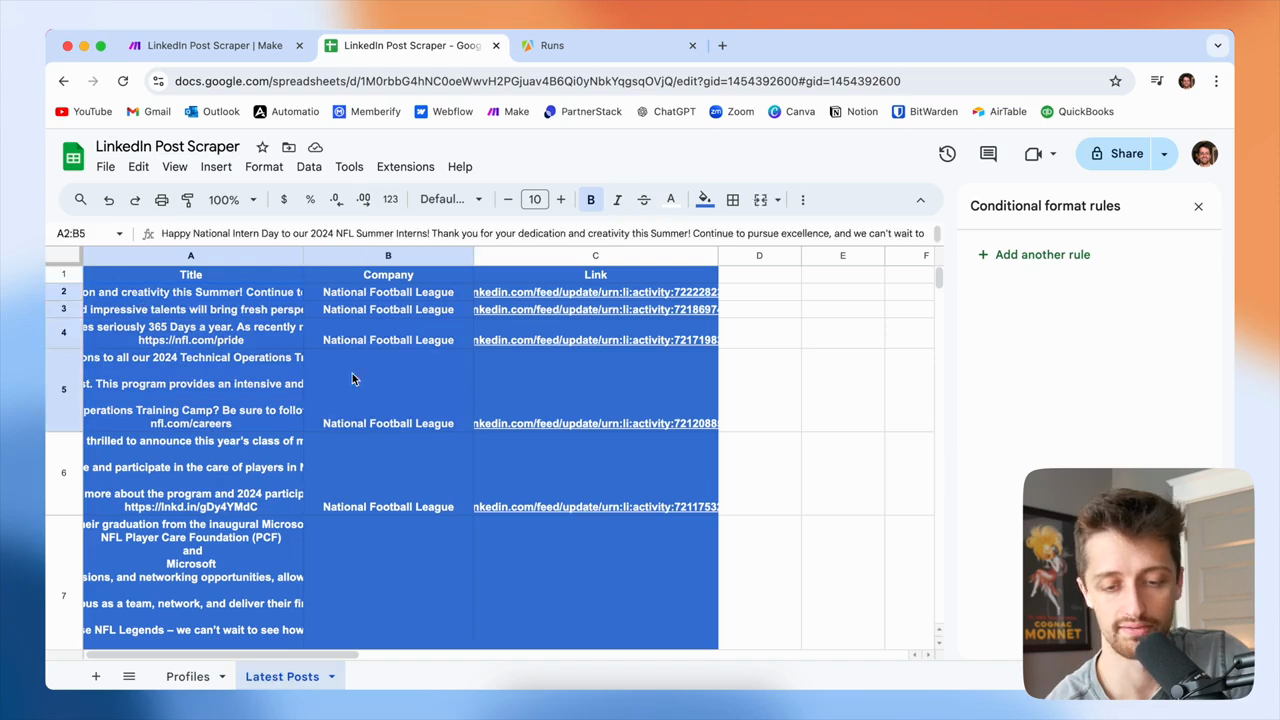

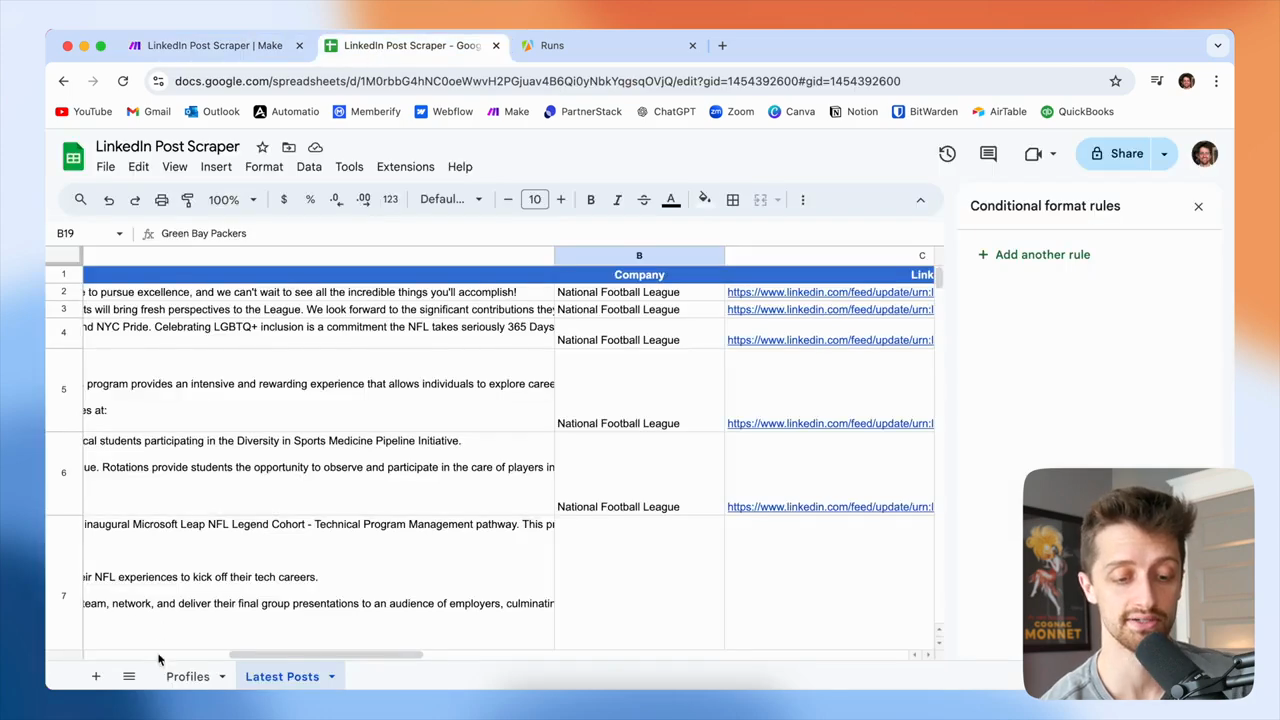

If I scroll down in my Apify module to the update data array, you can see that we now have the 10 latest LinkedIn posts from the NFL, all within this array.

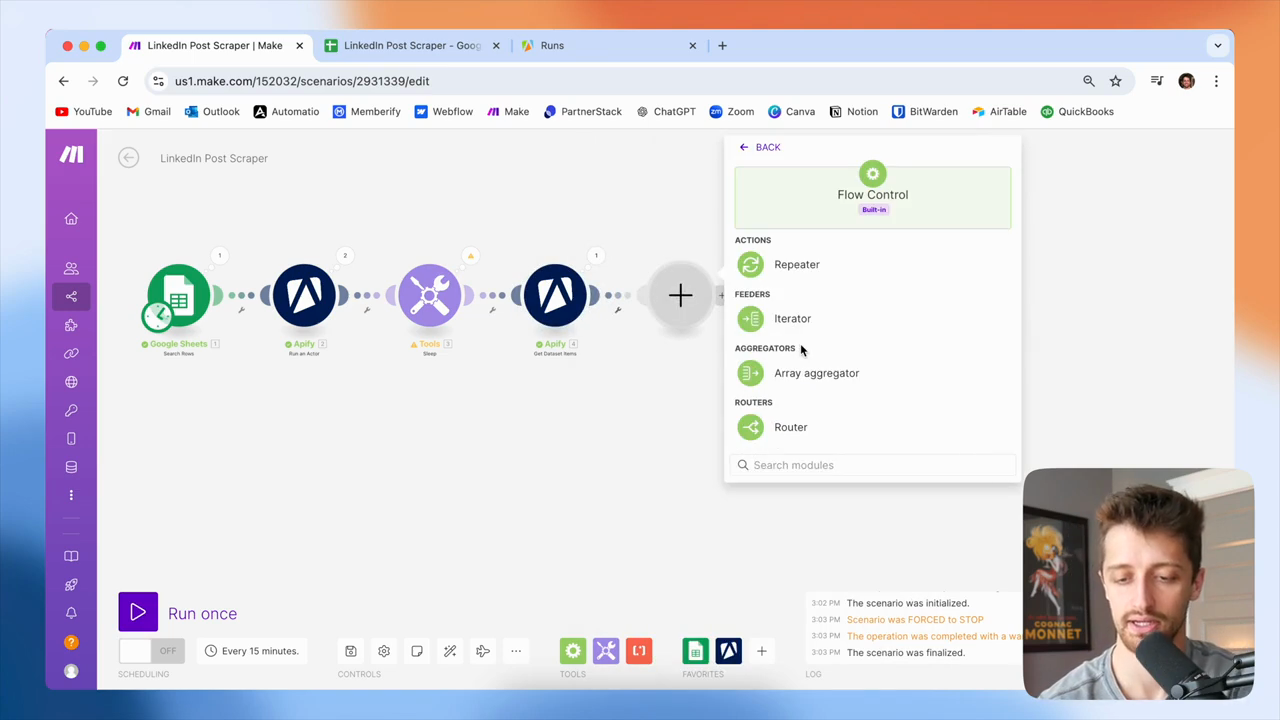

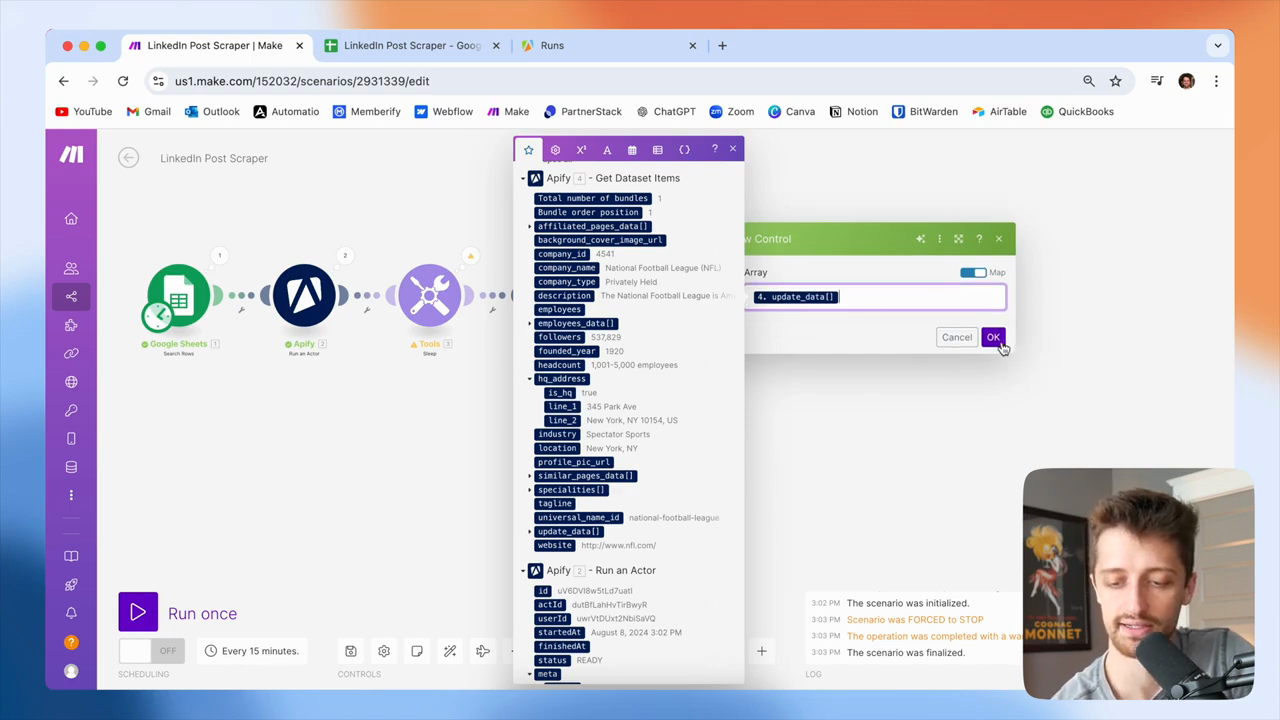

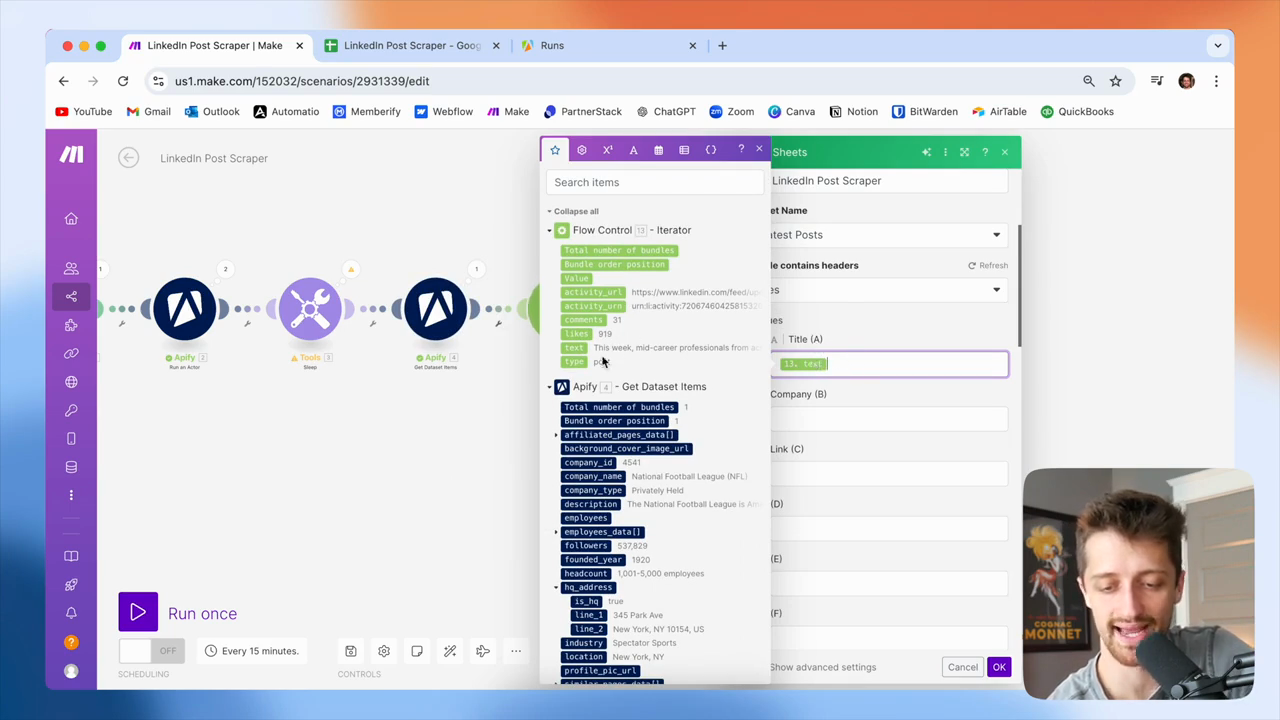

Okay? You can see them all here. I keep emphasizing that this is an array because it's very important. When you receive data as an array in make.com and want to perform an action on every item, not just the first one, you need to use an iterator. An iterator goes through each item in the array and, in our case, adds each item to our Google Sheet.

If we didn't do this, we would only add the first item in the array to our Google Sheet. We want to add all 10 posts to our Google Sheet. To do that, we need to iterate through this array.

Okay? We will create an iterator and feed it the update data array. If you're new to make.com, you can identify an array by looking for two square brackets at the end.

Okay? You can see here, these two square brackets indicate that this is an array, so we can iterate through it. Feed that into your iterator, then let's see.
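The difference between using an iterator and not using one can be sketched in a few lines of Python. The post dictionaries are made-up sample data, and the `iterate` function only imitates the idea of the Iterator module.

```python
from typing import Dict, Iterator, List


def iterate(array: List[Dict[str, str]]) -> Iterator[Dict[str, str]]:
    """Emit each array item separately, like one bundle per post."""
    for item in array:
        yield item


# Made-up sample data: an array of 10 posts from one profile.
posts = [{"text": f"post {i}"} for i in range(1, 11)]

# Without an iterator, only the first item of the array would be used.
without_iterator = [posts[0]]

# With an iterator, every item is passed on to the next step.
with_iterator = list(iterate(posts))

print(len(without_iterator), len(with_iterator))
```

This is why the iterator sits between the dataset module and the step that writes rows: it turns one array into one bundle per post.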

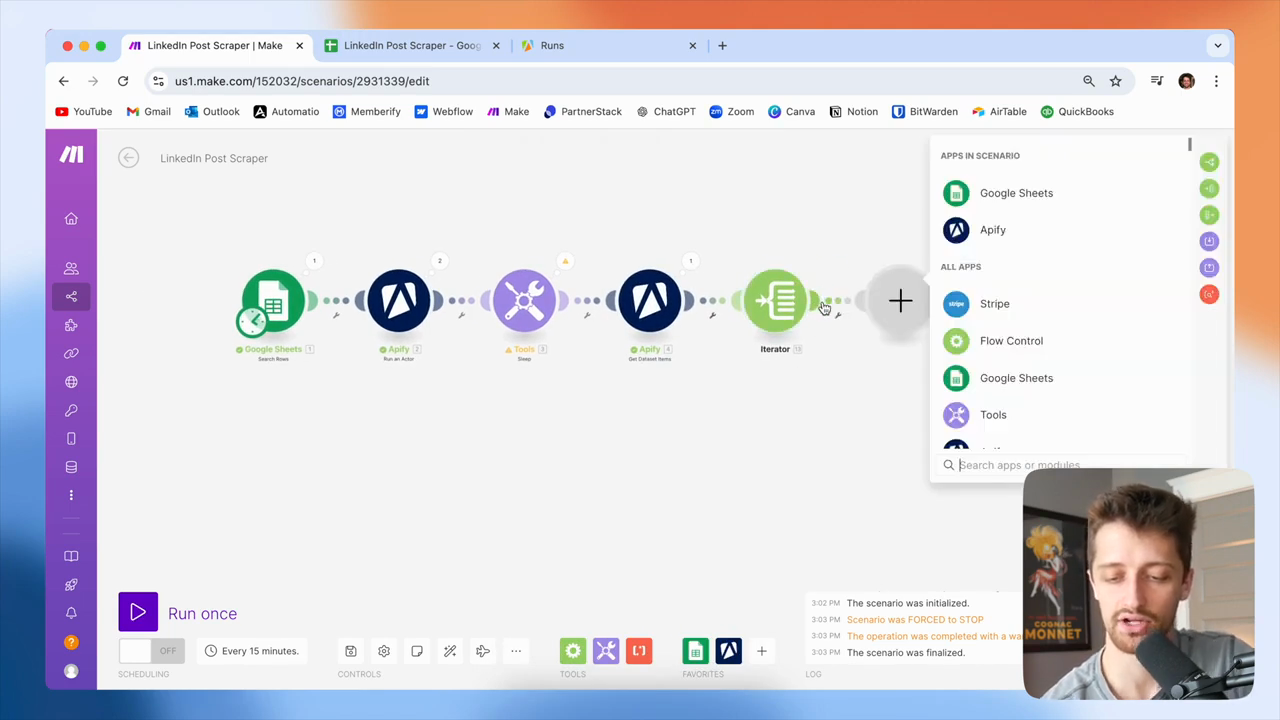

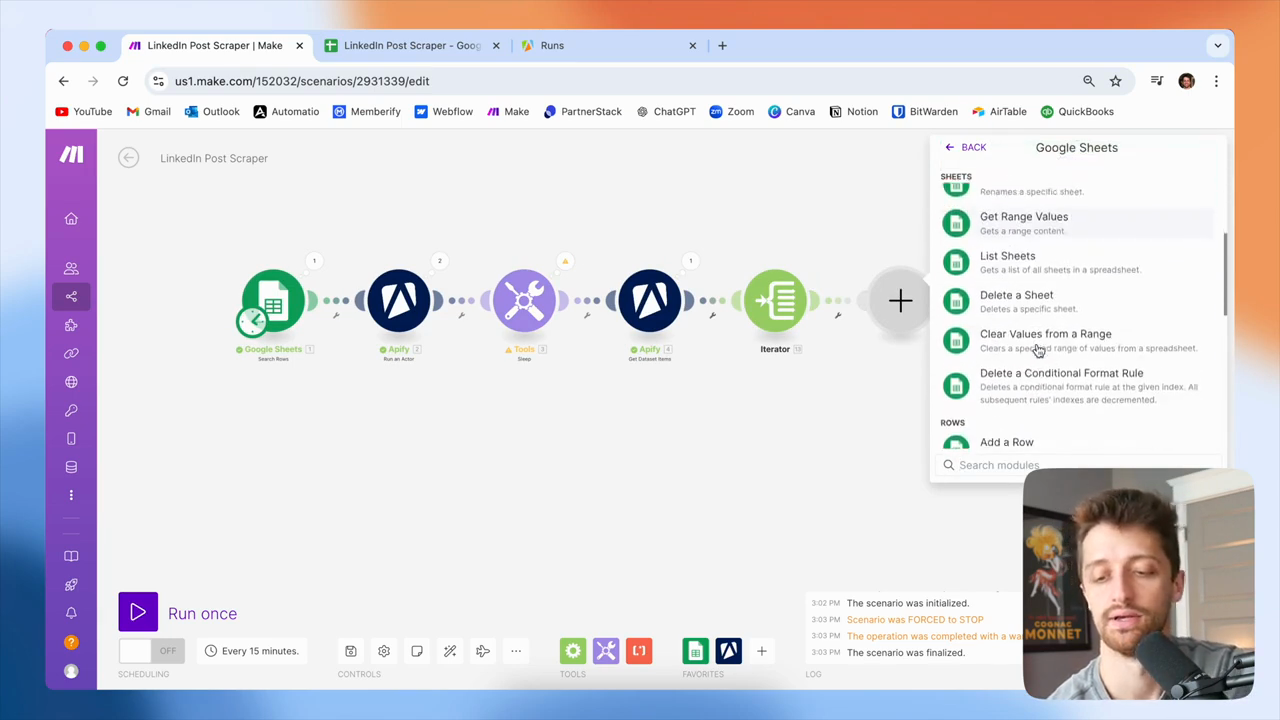

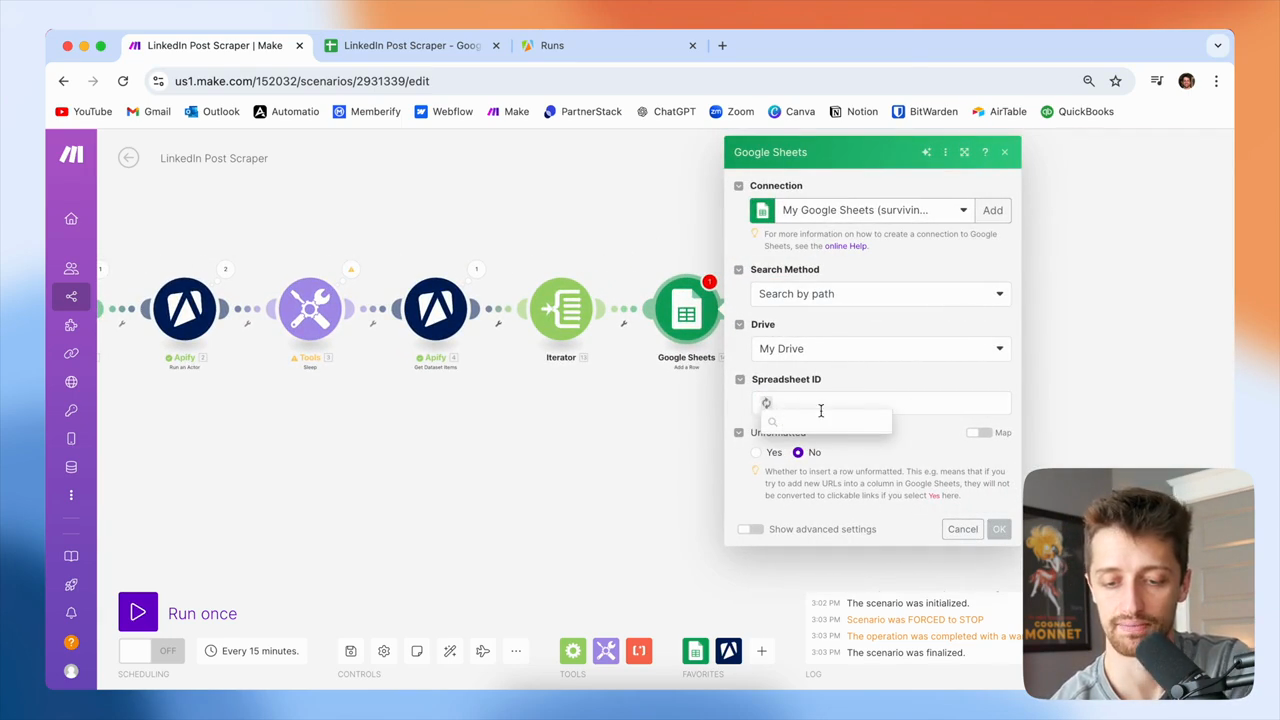

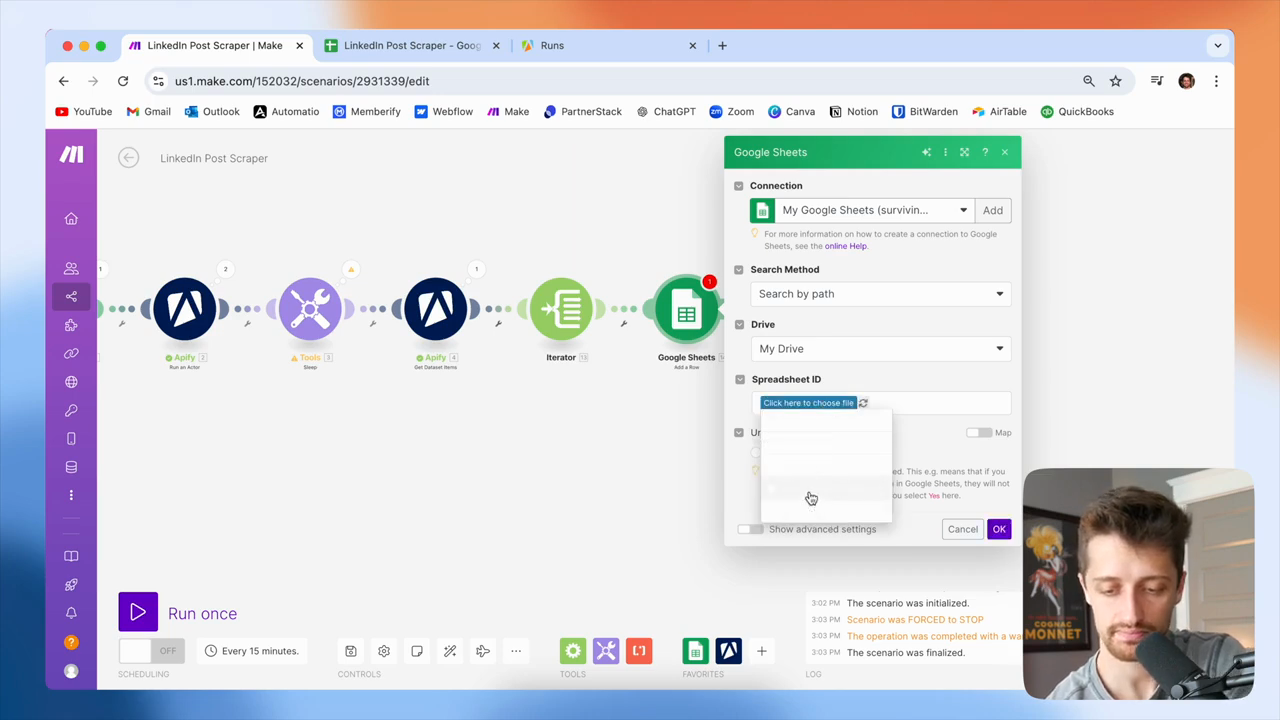

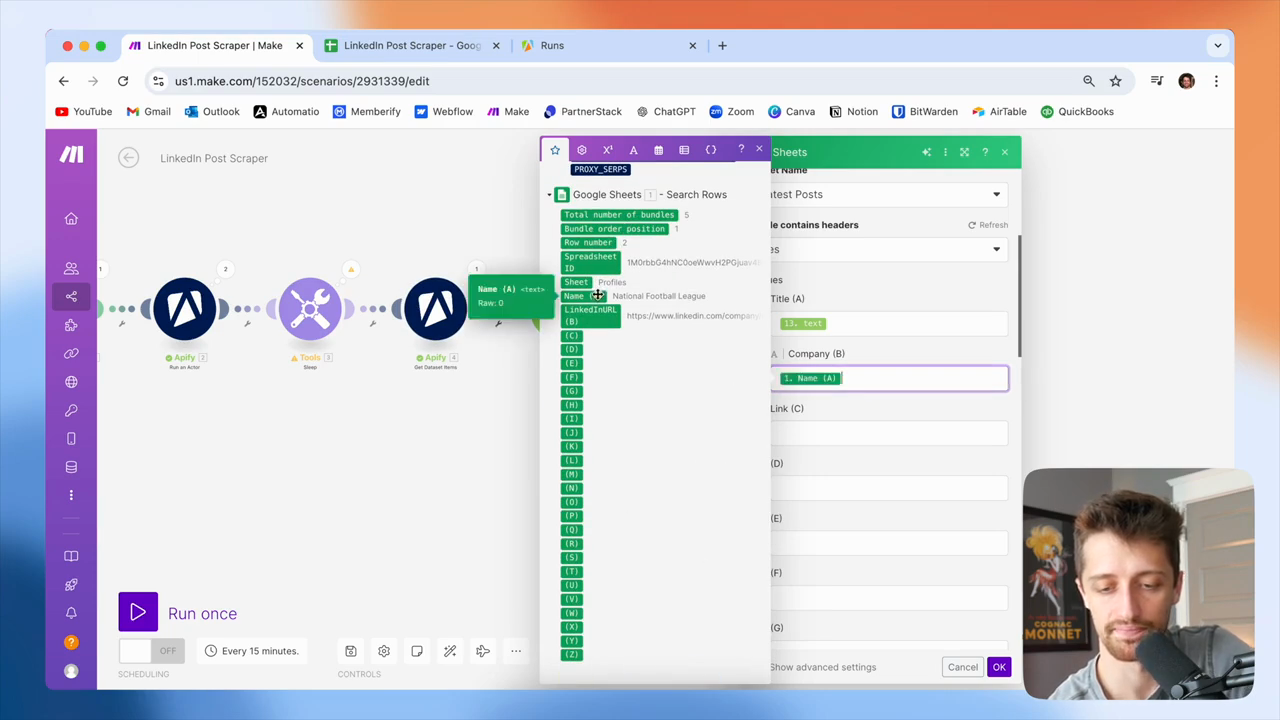

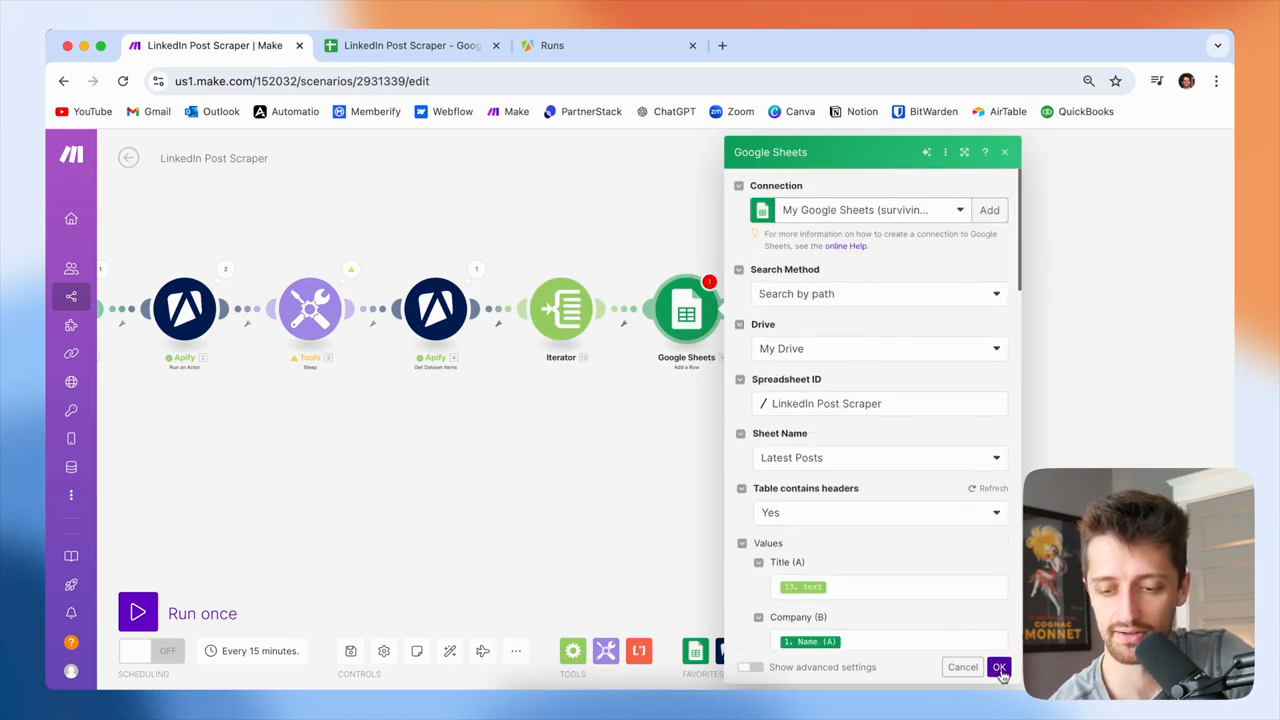

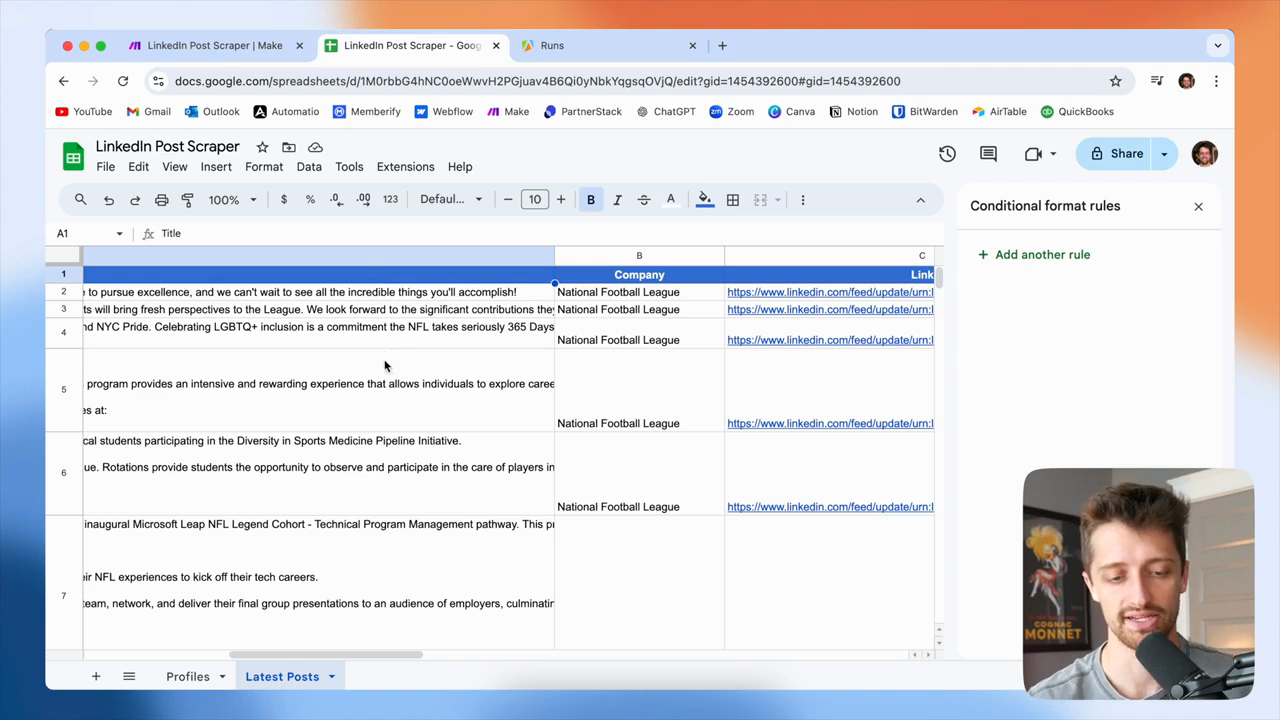

Next, I will add a Google Sheets module. We want to add each post as a new row to our Google Sheet.

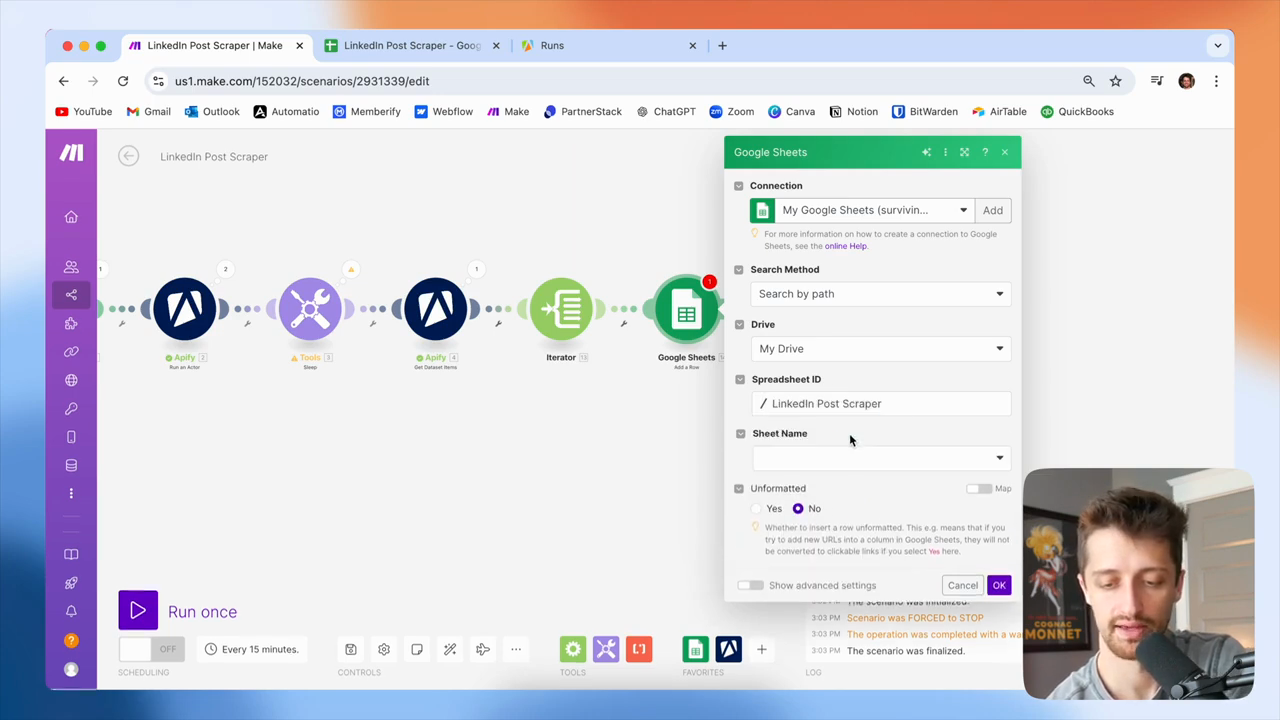

Okay? It will map to this file, like this.

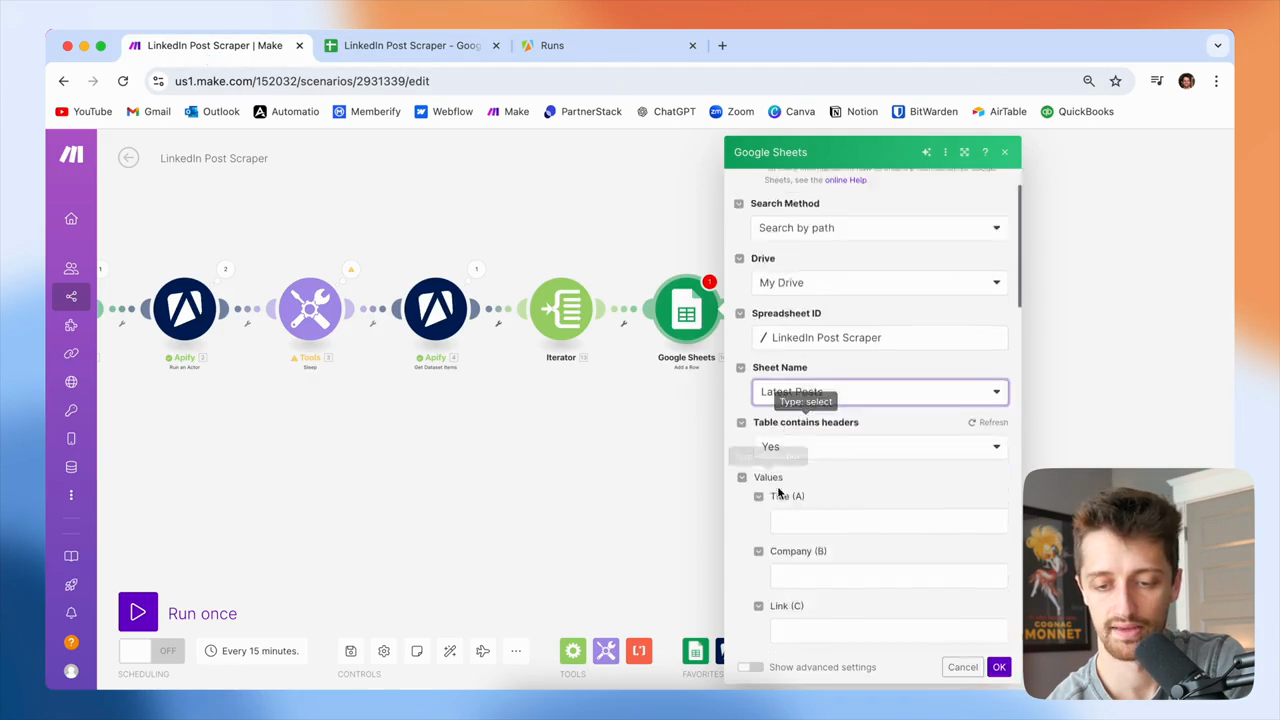

I have my sheet name and my latest post. I will add them to this sheet, then map the fields.

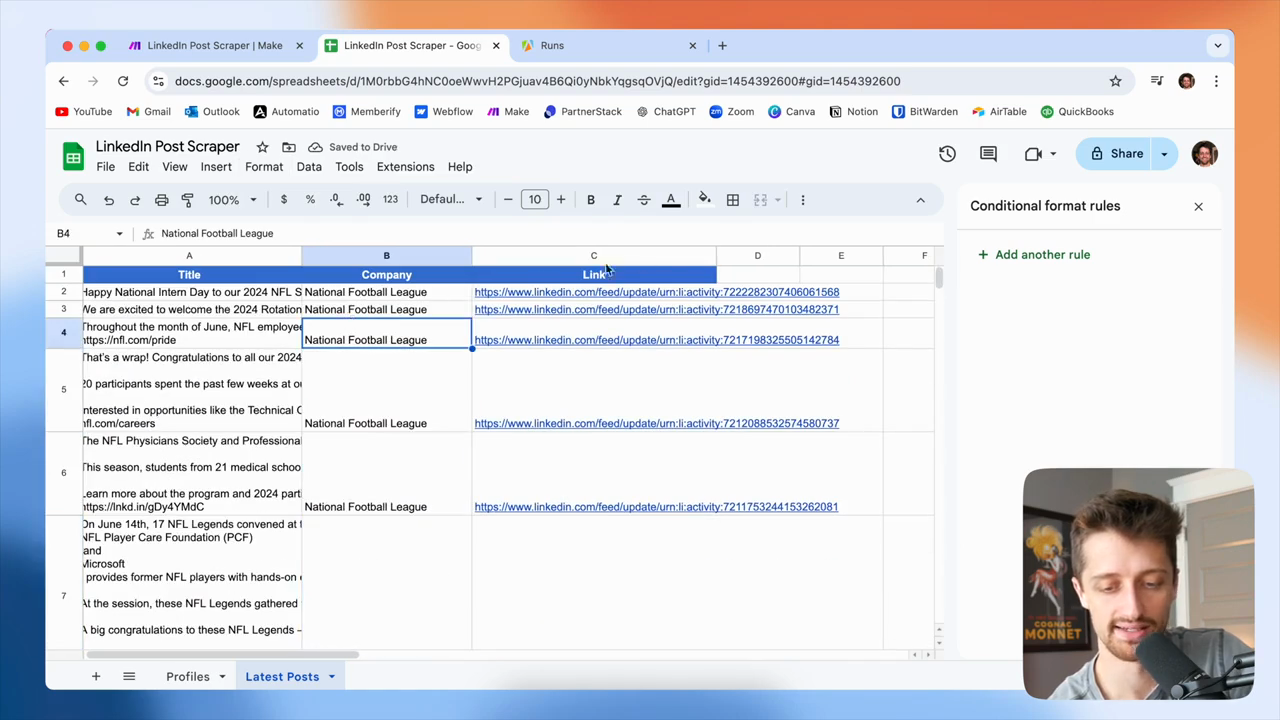

Okay? Importantly, I want to map the fields from my Iterator. Okay? So Title... I don't have a title from LinkedIn, but we do have the text of the post. I'll update my sheet accordingly.

I will add the post's text from my Iterator.

The company name, let's see. I can get that from my Google Sheet here—NFL.

Perfect. Then I will get my link from my actual iterator.

I'll include that in case I want to view the LinkedIn post directly on their page. We are mapping fields here. Then, click OK, save, and run the process to see how it works.
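The field mapping described above amounts to building one spreadsheet row per post. Here is a minimal sketch. The keys `"text"` and `"url"` are hypothetical names for the post fields; the real field names are whatever the iterator shows in your scenario.

```python
from typing import Dict, List


def to_sheet_row(post: Dict[str, str], company: str) -> List[str]:
    """Map one post to a row: post text, company name, link to the post.

    The post text and link come from the iterator; the company name comes
    from the original Google Sheets row. Key names are assumptions.
    """
    return [post.get("text", ""), company, post.get("url", "")]


# Made-up sample post for illustration.
sample_post = {"text": "Kickoff is this weekend.", "url": "https://www.linkedin.com/posts/example"}
row = to_sheet_row(sample_post, "NFL")
print(row)
```

Using `.get` with an empty default keeps the row the same width even if a post is missing one of the fields.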

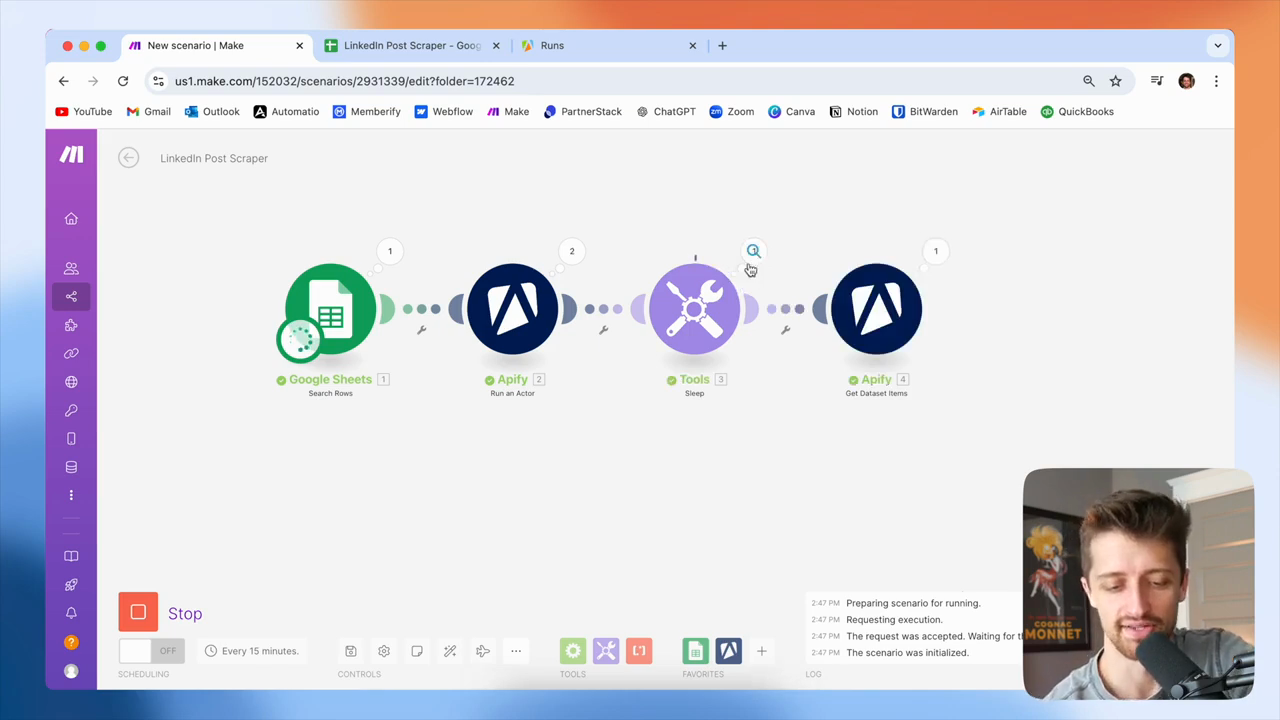

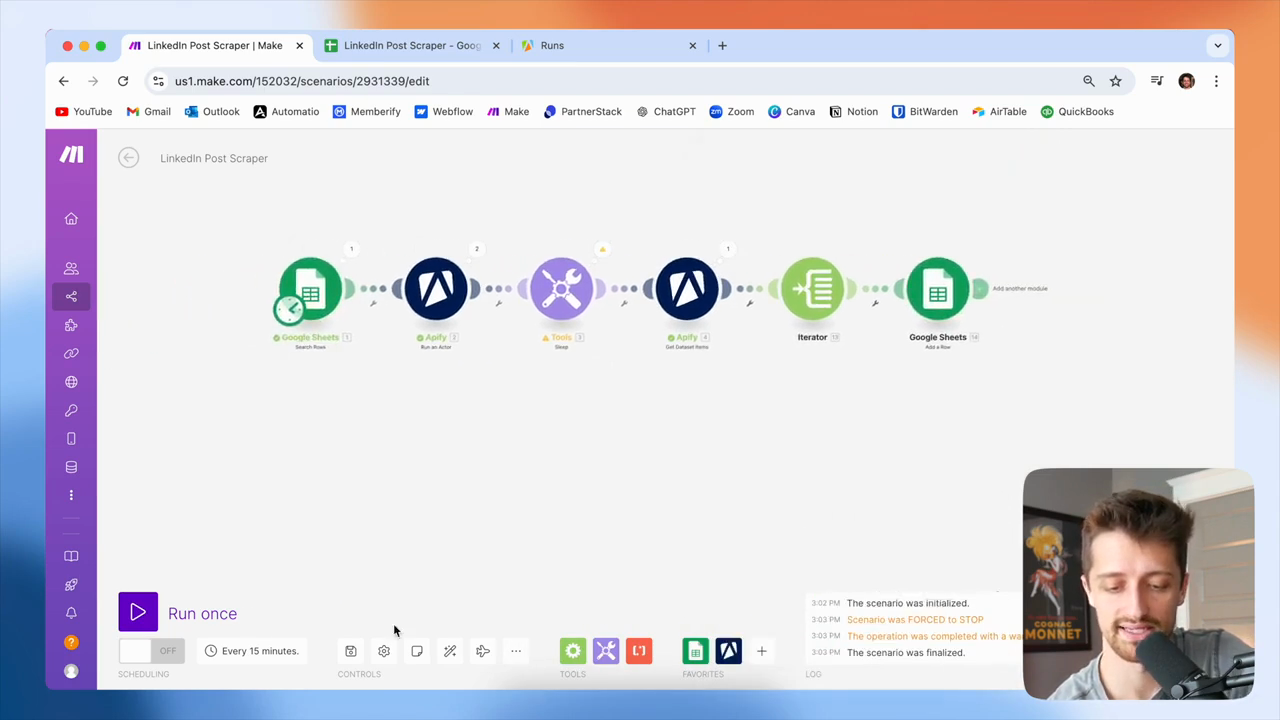

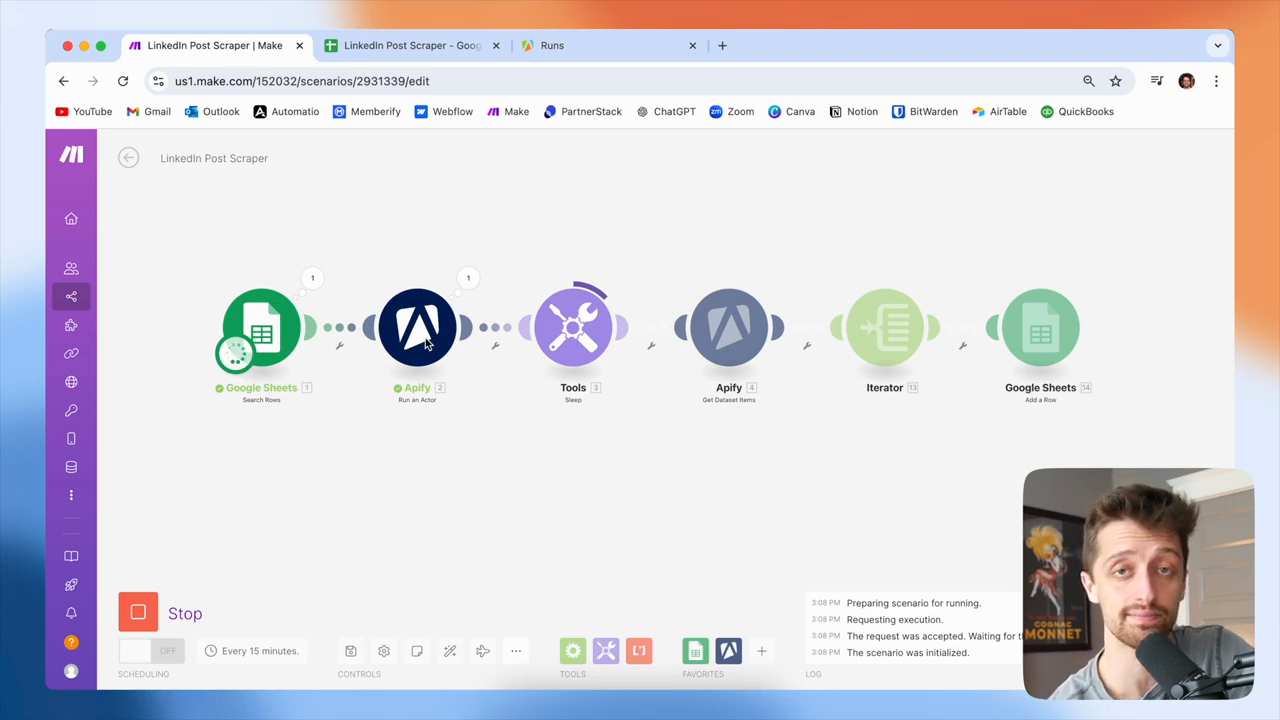

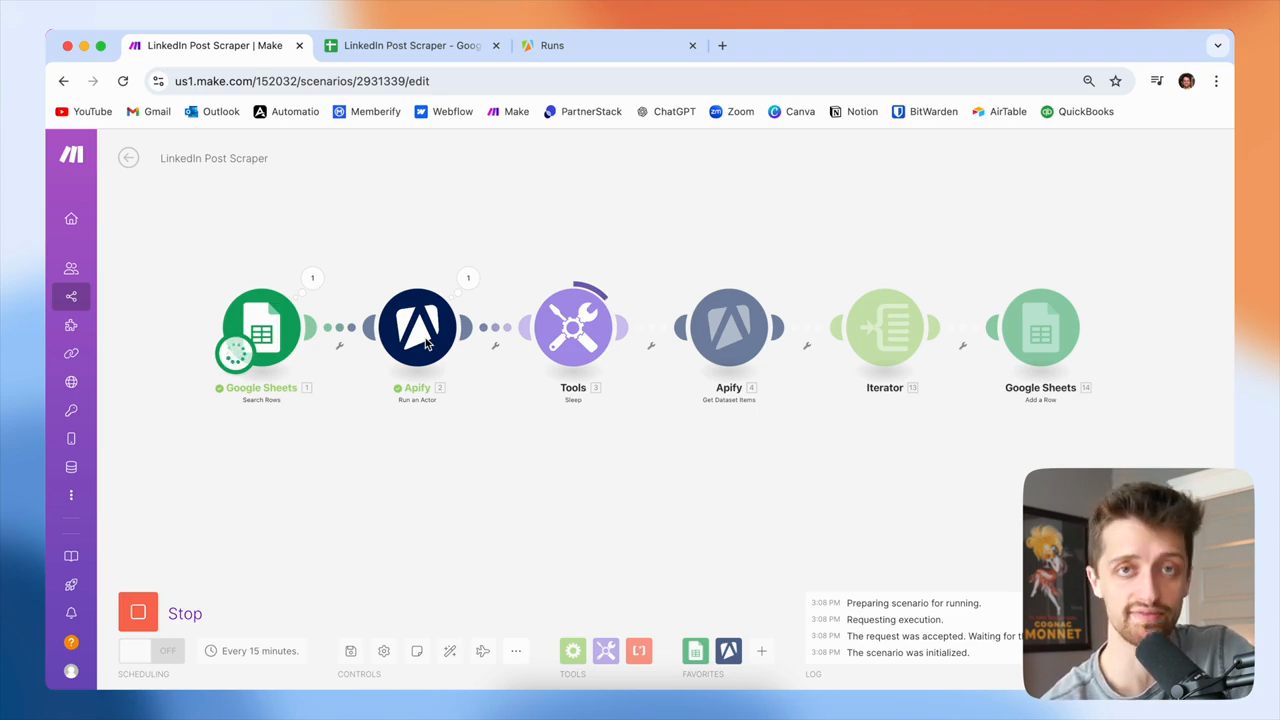

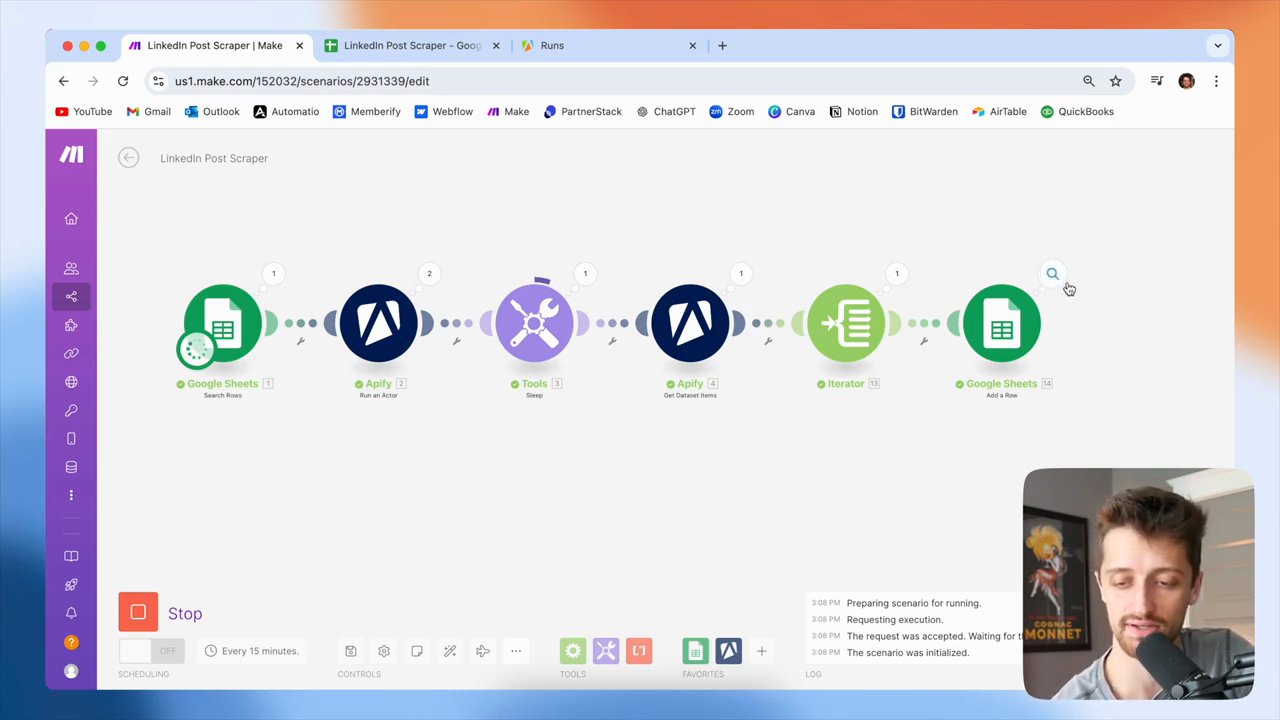

As this runs, I'll walk you through at a high level what we're doing.

It's running now. We just sent the NFL's LinkedIn profile URL to our Apify profile scraper. Okay? Apify will take the URL and scrape it for the latest posts.

Normally, that seems to take less than 10 seconds each time. Okay? I will hold here in my scenario for 60 seconds.

I could lower this to 25, since the run doesn't take long, but I need to ensure this actor has finished and retrieved the information before I access it with my fourth module.

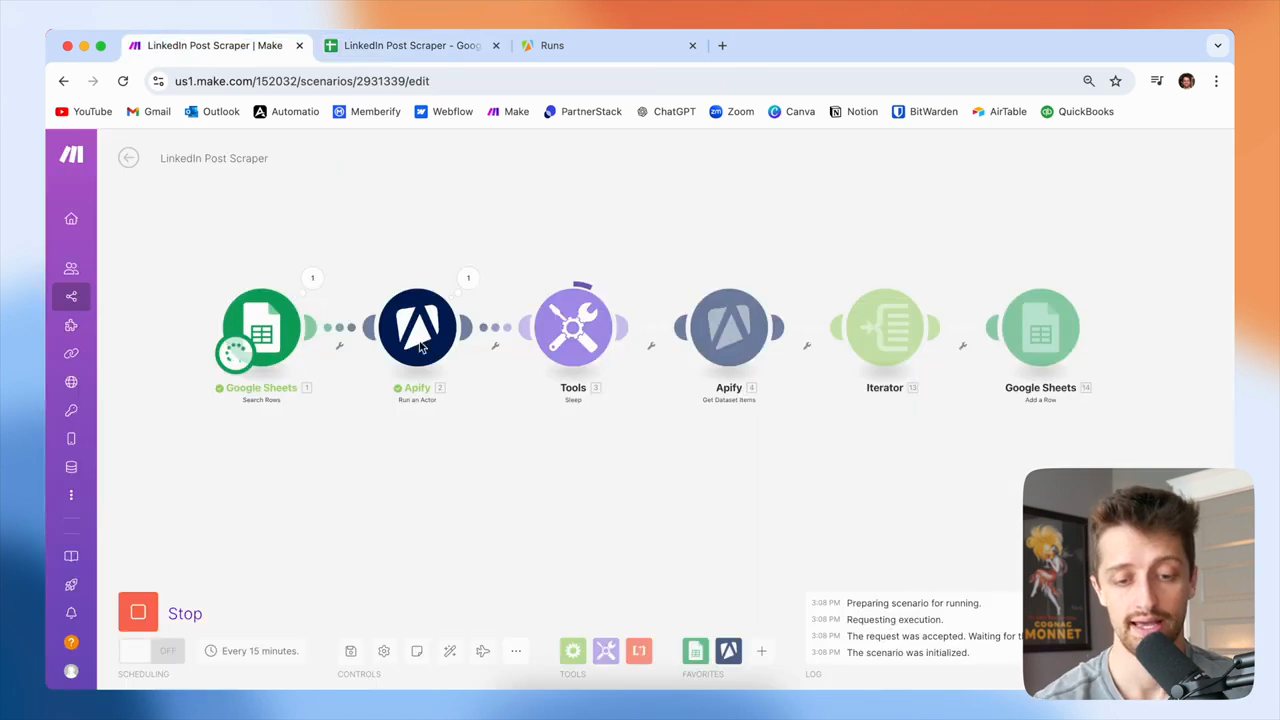

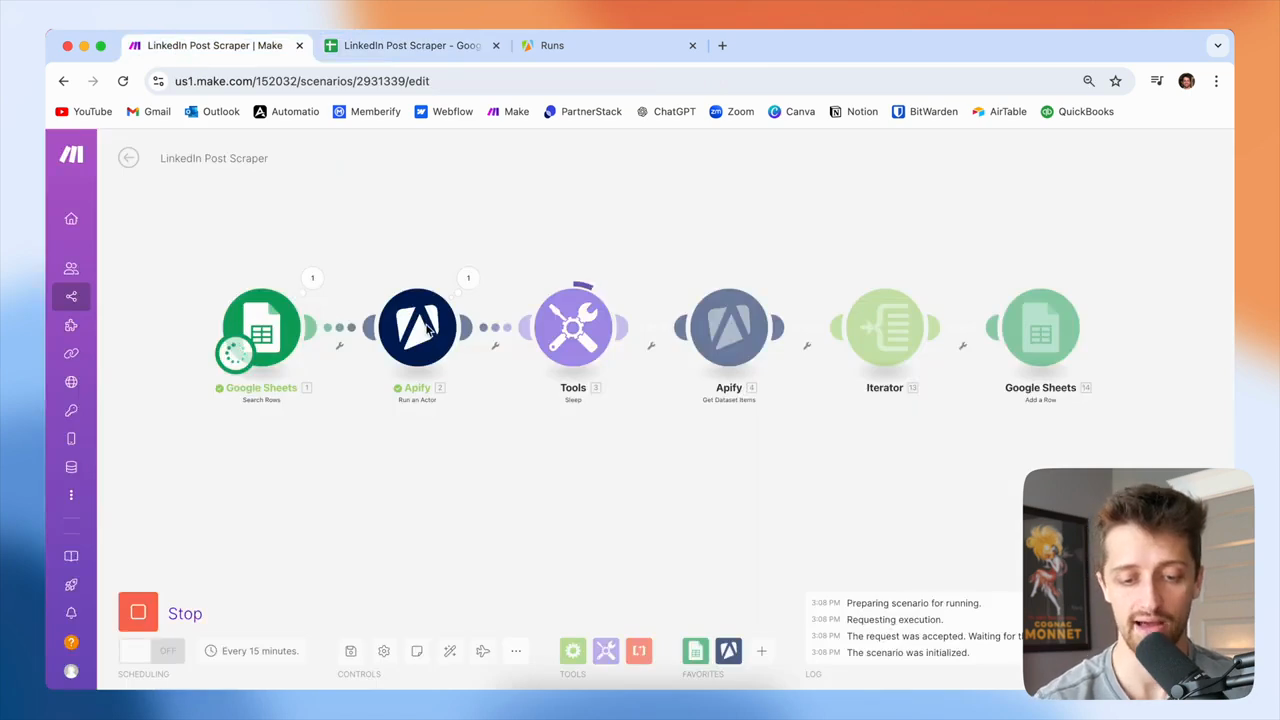

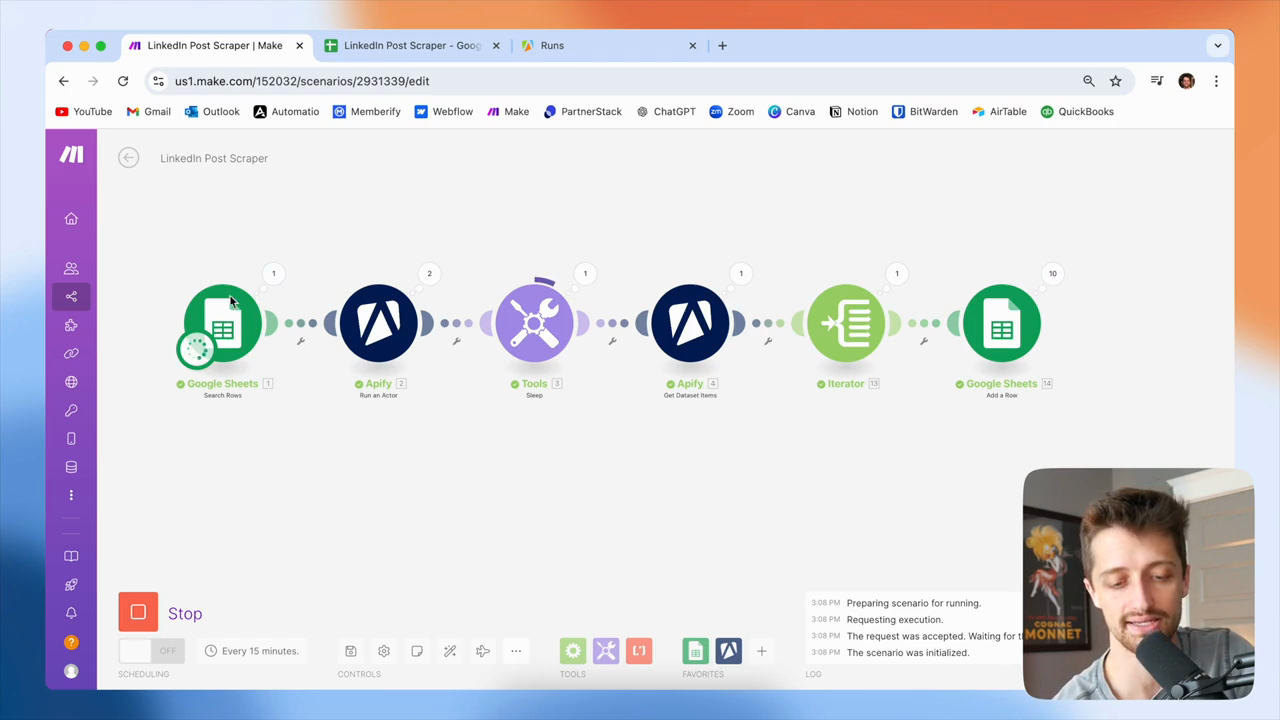

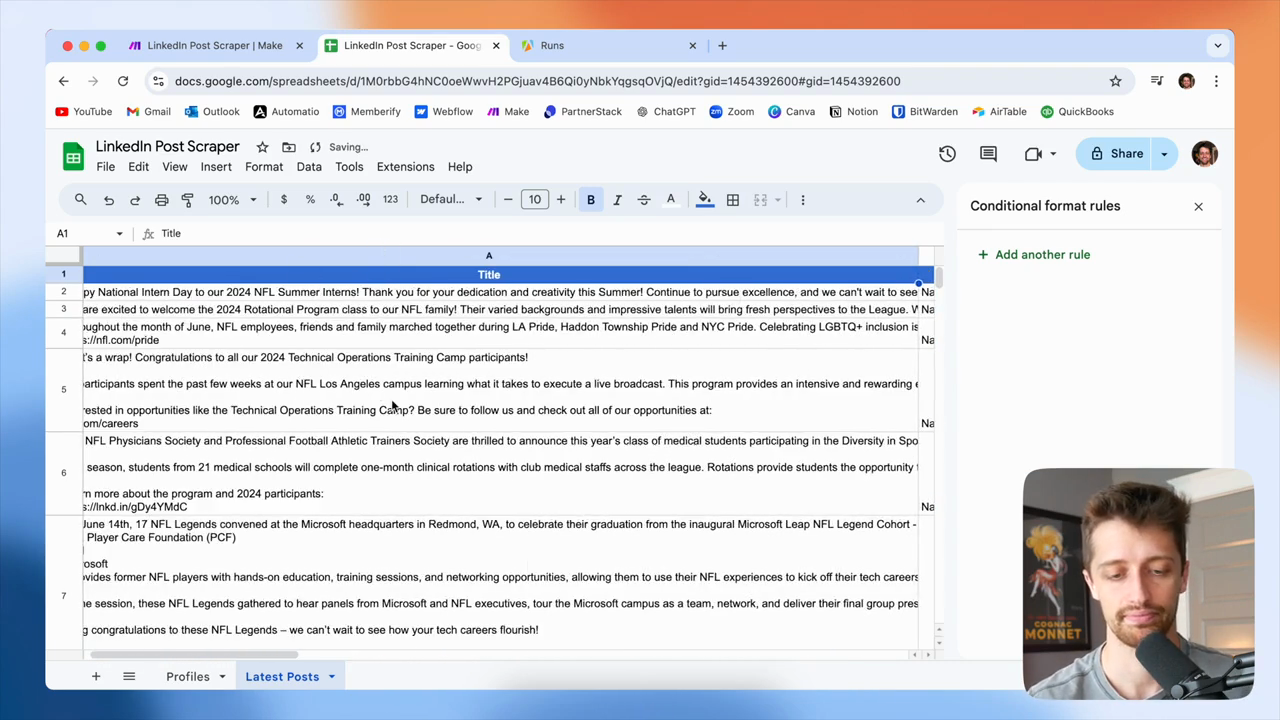

After the 60-second hold, this module retrieves all the data from the first Apify module. This allows us to access and work with all the LinkedIn posts from the NFL. That happened perfectly on cue. Next, we add each post in the array to our Google Sheet.

We used 10 operations to add all 10 posts to this Google Sheet.

Once we do that, we loop back.

We're still running because the first Google Sheets module iterates automatically.

All these rows with URLs come in as bundles. We do not need to iterate through bundles. They are processed one after another automatically. This is running again.

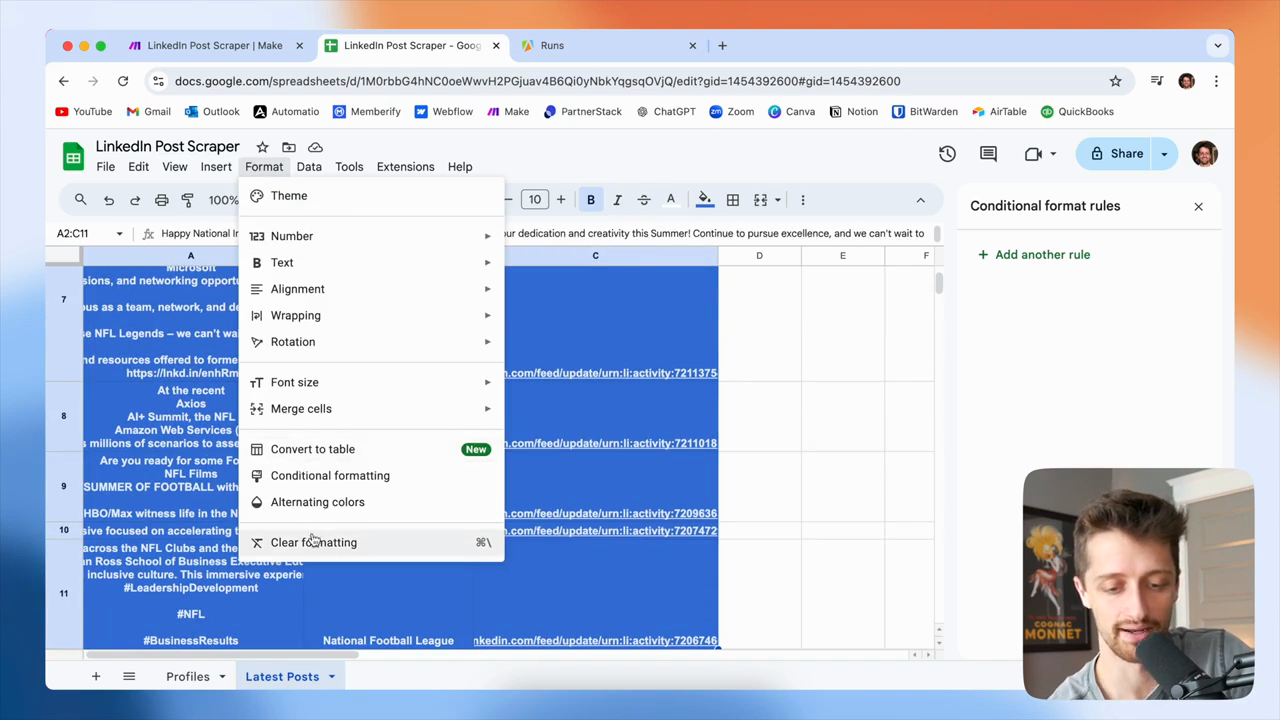

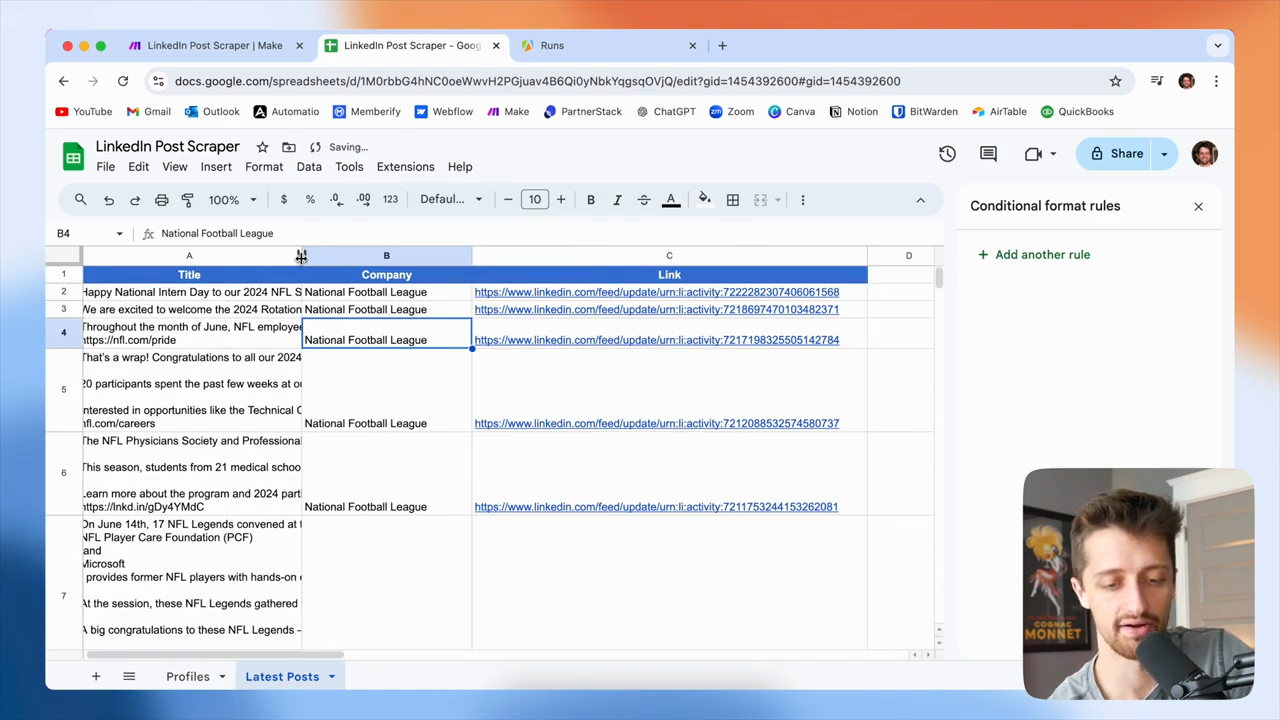

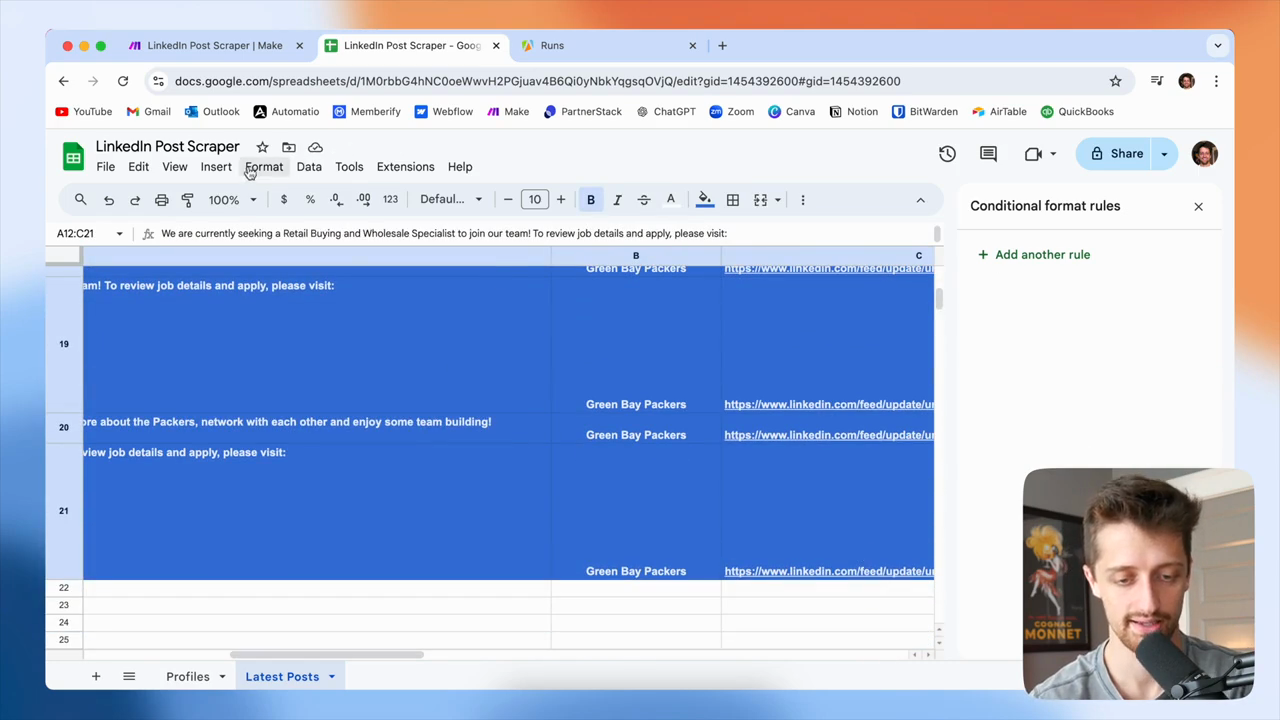

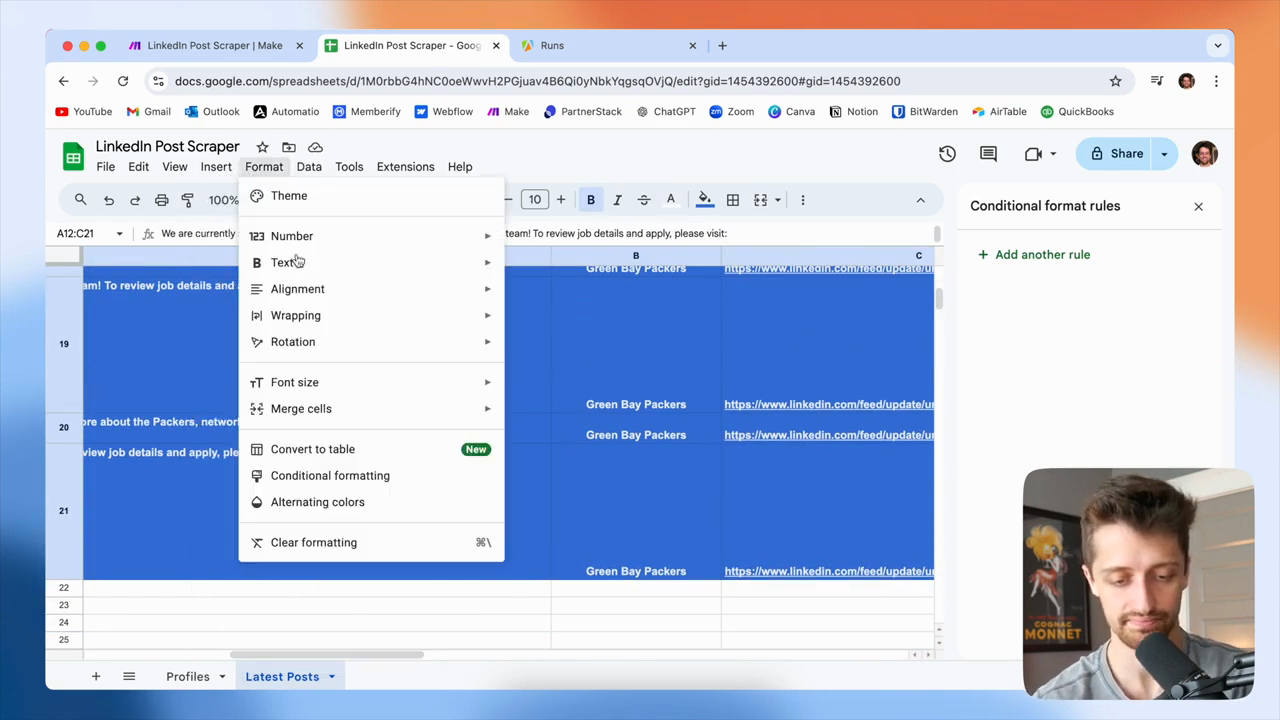

This time, we sent the Green Bay Packers URL to our Apify bot. The scenario holds for 60 seconds, then retrieves the latest Green Bay Packers posts and adds them one by one to the Google Sheet. We'll let that go. In the meantime, we'll check out our latest posts here. I'll also format this to improve its appearance.

Give me a second here. Let me clear the formatting.

I have all 10 of the latest NFL LinkedIn posts. I have the text, the company name, and the link to each post.

Soon, we'll have the Green Bay Packers added. You can see these are coming in as well. I just need to fix the formatting again. Now, we will retrieve the latest 10 posts for each company or profile in this list. After that, we can use the data however we want.

We can use it to write content in ChatGPT, or send it to our sales team and ask them to review what others are doing and why we aren't doing the same. You can use this to get a comprehensive list of valuable data from your competitors, portfolio companies, or favorite LinkedIn profiles.

This is the best and likely the cheapest way to automatically scrape LinkedIn posts using make.com. If you enjoyed this video, please click the like button. I would really appreciate it. If you want to see more videos like this in the future, consider subscribing. That would be great.

If you have any questions, please leave a comment and I'll get back to you as soon as I can. Thank you all so much for being here. I'll see you in the next video. Peace.